What Are MCP and MCP Servers, and How Do They Work? A Complete In-Depth Guide to MCP

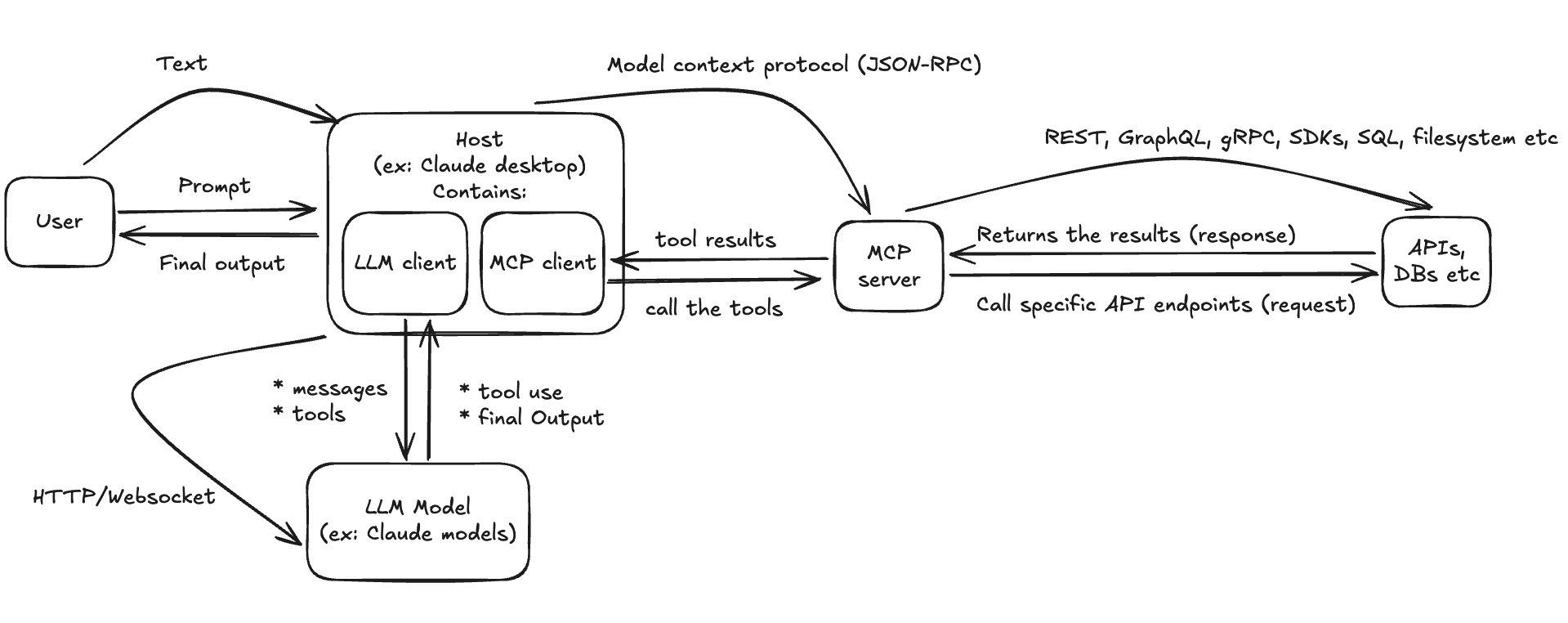

MCP, or Model Context Protocol, gives AI assistants a standard way to use external apps and data safely. This guide explains how hosts, servers, and tools interact, how JSON validation and structured results keep calls reliable, and why Unified APIs make integrations faster and easier to manage.

What is MCP

MCP, or Model Context Protocol, is a standard way for AI assistants and LLMs to work with external applications. It gives the assistant a clear list of what an external application can do, along with the exact details it needs to run those actions. The AI assistant asks for that list, checks what is allowed, and then makes a request in a standard format. The application runs the request and sends back a structured result. In short, MCP is the bridge that lets an LLM use tools and data from other apps in a controlled, reliable way.

MCP includes:

- Host: The host is the app that owns the chat and the user experience. It sends the user prompt to the model, gets a tool request back, runs that request through the MCP client, and then shows the final answer. The host might include the model itself, or it might only include a small client that talks to a model running elsewhere. For example, Claude Desktop sends your request to Anthropic’s servers, waits for the result, and shows it in the app.

- MCP server: This is a small service that is self-hosted or hosted by a vendor. It is where your tools and resources live. It knows how to talk to real systems like REST APIs, databases, file systems, or SDKs. It also handles policy, input checks, and logging so calls stay safe and traceable.

- MCP client: A component inside the host that talks to the MCP server. It discovers what the server offers, validates the model’s requested inputs, calls the right tool, and returns structured results back to the model.

- Tools: Named actions with typed inputs and outputs. Examples include createContact, searchTickets, and generateInvoice. Tools are how the model asks the outside world to do something, using JSON-shaped arguments that can be checked before anything runs.
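To make "typed inputs" concrete, here is a minimal sketch of what a tool definition for createContact might look like. The name, description, and inputSchema layout follows the way MCP servers list tools; the individual fields (first_name, last_name, email) and the describe_tool helper are assumptions made up for illustration.

```python
# Hypothetical definition for the createContact tool mentioned above.
# The fields inside "properties" are illustrative assumptions.
CREATE_CONTACT_TOOL = {
    "name": "createContact",
    "description": "Create a contact in the connected CRM.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "first_name": {"type": "string"},
            "last_name": {"type": "string"},
            "email": {"type": "string"},
        },
        "required": ["first_name", "email"],
    },
}


def describe_tool(tool):
    """Return a one-line signature: tool name plus its typed arguments.

    Optional arguments are marked with a trailing "?".
    """
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    parts = []
    for field, spec in schema["properties"].items():
        marker = "" if field in required else "?"
        parts.append(f"{field}{marker}: {spec['type']}")
    return f"{tool['name']}({', '.join(parts)})"


print(describe_tool(CREATE_CONTACT_TOOL))
```

Because the schema is plain JSON, the host can check arguments against it before anything reaches the server.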

How it works

Taking Claude Desktop as the host, here is how the entire MCP architecture works:

Startup and discovery

Claude Desktop starts and quietly connects to each MCP server listed in its configuration. It opens a JSON-RPC session, initializes the MCP connection, and then fetches the available tools (tools/list) and, where the server offers them, resources (resources/list). The result is a small catalog of actions and readable resources. No model call has happened yet.
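The startup exchange can be sketched as three JSON-RPC messages: an initialize request, an initialized notification, and a tools/list request. This is a simplified illustration, not a full client. The client name, version string, and protocol version value below are example assumptions, and a real host would also read each response before sending the next message.

```python
import json


def discovery_messages(client_name="example-host", protocol_version="2024-11-05"):
    """Build the JSON-RPC messages a host sends during startup, in order."""
    initialize = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": protocol_version,
            "capabilities": {},
            "clientInfo": {"name": client_name, "version": "0.1.0"},
        },
    }
    # Notifications carry no "id" because no response is expected.
    initialized = {"jsonrpc": "2.0", "method": "notifications/initialized"}
    list_tools = {"jsonrpc": "2.0", "id": 2, "method": "tools/list"}
    return [initialize, initialized, list_tools]


for message in discovery_messages():
    print(json.dumps(message))
```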

User prompt

The user enters a prompt. Claude Desktop (the host) builds a request for the Claude model API that includes two things: the chat history and the tool catalog fetched from the MCP server. This tells the model exactly which actions exist and what inputs they expect.
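As a rough sketch, building that request means combining the conversation so far with the tool catalog. The payload shape here (model, messages, tools, input_schema) is loosely modeled on common chat APIs and should be read as an assumption, as should the placeholder model name; check your model vendor's documentation for the exact fields.

```python
def build_model_request(history, user_prompt, mcp_tools, model="example-model"):
    """Combine chat history, the new prompt, and the MCP tool catalog.

    `mcp_tools` is the list returned by tools/list; each entry is expected
    to have "name", an optional "description", and "inputSchema".
    """
    messages = list(history) + [{"role": "user", "content": user_prompt}]
    tools = [
        {
            "name": tool["name"],
            "description": tool.get("description", ""),
            "input_schema": tool["inputSchema"],
        }
        for tool in mcp_tools
    ]
    return {"model": model, "messages": messages, "tools": tools}


catalog = [
    {
        "name": "searchTickets",
        "description": "Search support tickets by status.",
        "inputSchema": {
            "type": "object",
            "properties": {"status": {"type": "string"}},
            "required": ["status"],
        },
    }
]
request = build_model_request([], "How many tickets are open?", catalog)
print(request["tools"][0]["name"])
```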

Model decides what to do

The Claude model reads the prompt plus the tool list and does one of two things:

- If it can answer directly, it returns the final text.

- If it needs more data or an action, it returns a tool intent: the tool name and a JSON object with arguments that match the schema.

Host validates and calls the server

When the model returns a tool intent, the host checks the JSON against the tool’s schema. Required fields must be there, types must match, and extra fields are dropped. If it passes, the host sends a single tools/call to the MCP server. If it fails, the host returns a clear error so the model can try again.
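The checks described above can be sketched in a few lines. This is a deliberately small validator that covers only required fields, basic types, and dropping extras; a production host would more likely rely on a full JSON Schema library. The function names are made up for this example, while the tools/call message shape (a name plus an arguments object) follows MCP.

```python
# Maps JSON Schema type names to Python types (only the subset checked here).
TYPE_MAP = {
    "string": str,
    "integer": int,
    "number": (int, float),
    "boolean": bool,
    "object": dict,
    "array": list,
}


def _matches(value, expected):
    py_type = TYPE_MAP.get(expected)
    if py_type is None:
        return True  # unknown or missing type: not checked in this sketch
    if isinstance(value, bool) and expected in ("integer", "number"):
        return False  # bool is a subclass of int in Python, so reject it here
    return isinstance(value, py_type)


def validate_arguments(schema, arguments):
    """Return (clean_arguments, errors) for a tool intent."""
    errors = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing required field: {name}")
    clean = {}
    for name, value in arguments.items():
        if name not in properties:
            continue  # extra field: dropped
        expected = properties[name].get("type")
        if not _matches(value, expected):
            errors.append(f"{name}: expected {expected}")
            continue
        clean[name] = value
    return clean, errors


def build_tools_call(request_id, tool_name, arguments):
    """Build the JSON-RPC request sent once validation passes."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
```

If errors is non-empty, the host would hand those messages back to the model instead of calling the server.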

Server does the work safely

The MCP server runs your code with the credentials and scopes that were set. It validates inputs again, applies policy, and then talks to REST, GraphQL, gRPC, SDKs, SQL, or the filesystem. It uses retries and idempotency to avoid duplicates and smooth over hiccups, and it can stream progress. Finally, it returns a structured result or a well-shaped error and writes a clean log for audit.
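Retries and idempotency work together: retries smooth over transient failures, and an idempotency key makes sure a repeated request does not create a duplicate. The sketch below shows one way a server might combine them. TransientError, call_with_retries, and IdempotentExecutor are hypothetical names for this example, and the in-memory dictionary stands in for whatever durable store a real server would use.

```python
import time


class TransientError(Exception):
    """Raised by an upstream call that is safe to retry (e.g. a timeout)."""


def call_with_retries(fn, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call fn, retrying on TransientError with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * (2 ** attempt))


class IdempotentExecutor:
    """Runs each idempotency key at most once and replays the stored result."""

    def __init__(self, sleep=time.sleep):
        self._results = {}
        self._sleep = sleep

    def run(self, key, fn):
        if key in self._results:
            return self._results[key]  # duplicate request: no second side effect
        result = call_with_retries(fn, sleep=self._sleep)
        self._results[key] = result
        return result
```

Passing sleep as a parameter is a design choice that keeps the backoff testable without real delays.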

Results go back to the model

The MCP server returns a structured result. The host forwards that result to the model as a tool result message. Claude updates its reasoning. Sometimes it asks for another tool. Sometimes that one result is enough to finish the job.
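Put together, the host runs a loop: ask the model, run a tool if one is requested, feed the result back, and stop when the model returns text. The sketch below uses a scripted stand-in for the model and a fake tool call so it runs on its own. The reply shapes ("text" and "tool_use") and message roles are assumptions for illustration, not any vendor's exact format.

```python
def run_conversation(ask_model, call_tool, messages, max_steps=5):
    """Loop until the model returns plain text or max_steps is reached."""
    messages = list(messages)  # work on a copy so the caller's list is untouched
    for _ in range(max_steps):
        reply = ask_model(messages)
        if reply["type"] == "text":
            return reply["text"]
        # Tool intent: run it and hand the structured result back to the model.
        result = call_tool(reply["name"], reply["input"])
        messages.append({"role": "assistant", "tool_request": reply})
        messages.append({"role": "tool", "name": reply["name"], "content": result})
    raise RuntimeError("model did not finish within max_steps")


def scripted_model(messages):
    """Stand-in for a real model: asks for one tool, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "text", "text": f"Found {last['content']['count']} open tickets."}
    return {"type": "tool_use", "name": "searchTickets", "input": {"status": "open"}}


def fake_call_tool(name, arguments):
    """Stand-in for a tools/call round trip to an MCP server."""
    return {"count": 3}


answer = run_conversation(
    scripted_model,
    fake_call_tool,
    [{"role": "user", "content": "How many tickets are open?"}],
)
print(answer)  # Found 3 open tickets.
```

The max_steps cap is a guard against a model that keeps requesting tools without ever finishing.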

Final answer

Once the model is satisfied, it returns plain text. The host shows that to you in the chat.

How unified APIs relate to MCP

Unified APIs let you ship hundreds of integrations through one consistent interface. They pair naturally with MCP. The unified layer handles the heavy lifting for each integration, such as authentication, normalization, security, and sync behavior. MCP then exposes those normalized capabilities to AI hosts as clear tools and resources that are easy to discover and use.

Data normalization

A unified API standardizes data from many providers into one shared model. This gives the model a clean, predictable shape to work with, which reduces guesswork and improves accuracy.

With consistent fields, the model can reliably answer prompts like, “List the marketing team’s first names and email addresses,” because it does not need to reconcile different vendor schemas on the fly.
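Here is a small sketch of what normalization buys you. Two invented vendor payloads with different field names are mapped into one shared shape, after which the "marketing team" question becomes a simple filter. Both vendor formats and all function names are hypothetical.

```python
def normalize_vendor_a(record):
    """Hypothetical vendor A: camelCase fields, department as a string."""
    return {
        "first_name": record["firstName"],
        "email": record["workEmail"],
        "team": record["department"],
    }


def normalize_vendor_b(record):
    """Hypothetical vendor B: a single full_name and a nested contact object."""
    return {
        "first_name": record["full_name"].split()[0],
        "email": record["contact"]["email"],
        "team": record["team_name"],
    }


def marketing_contacts(employees):
    """Answer the example prompt against the shared model."""
    return [
        (e["first_name"], e["email"])
        for e in employees
        if e["team"].lower() == "marketing"
    ]


employees = [
    normalize_vendor_a(
        {"firstName": "Ada", "workEmail": "ada@example.com", "department": "Marketing"}
    ),
    normalize_vendor_b(
        {
            "full_name": "Grace Hopper",
            "contact": {"email": "grace@example.com"},
            "team_name": "marketing",
        }
    ),
    normalize_vendor_a(
        {"firstName": "Alan", "workEmail": "alan@example.com", "department": "Engineering"}
    ),
]
print(marketing_contacts(employees))
```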

Security

Unified APIs provide strong controls over what data is accessible and who can access it. You can define scopes, limit fields, and let customers toggle specific attributes off if they do not want them retrieved or synced. This keeps data usage aligned with policy and customer preferences.
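As an illustration of these controls, the sketch below checks a scope before a read and then strips any field that is not allowed or that the customer has switched off. The scope string, field names, and helper functions are assumptions for this example and do not reflect any particular unified API.

```python
def authorize(required_scope, granted_scopes):
    """Refuse the call unless the required scope was granted."""
    if required_scope not in granted_scopes:
        raise PermissionError(f"missing scope: {required_scope}")


def filter_fields(record, allowed_fields, disabled_fields=frozenset()):
    """Keep only allowed fields that the customer has not toggled off."""
    return {
        key: value
        for key, value in record.items()
        if key in allowed_fields and key not in disabled_fields
    }


def read_contact(record, granted_scopes, allowed_fields, disabled_fields=frozenset()):
    authorize("contacts:read", granted_scopes)
    return filter_fields(record, allowed_fields, disabled_fields)


contact = {"first_name": "Ada", "email": "ada@example.com", "salary": 100000}
visible = read_contact(
    contact,
    granted_scopes={"contacts:read"},
    allowed_fields={"first_name", "email", "salary"},
    disabled_fields={"salary"},  # the customer opted out of syncing this attribute
)
print(visible)
```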

Observability

Most unified APIs include robust monitoring that helps support teams manage MCP-powered integrations. Expect automated issue detection, searchable logs, and other diagnostics that make it easier to investigate and resolve problems quickly.

Performance

Unified APIs are built for efficient data movement. Many offer fast incremental syncs and webhooks for near real-time updates. That means the model can work with current information, not stale snapshots, which leads to better answers and smoother workflows.
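An incremental sync usually comes down to remembering a cursor and asking only for what changed after it. The sketch below shows the idea with an in-memory list and ISO 8601 timestamps in a single fixed format, which compare correctly as strings. The record shape and the incremental_sync helper are assumptions for illustration.

```python
def incremental_sync(records, since):
    """Return (changed_records, new_cursor) for records updated after `since`.

    Timestamps are ISO 8601 UTC strings in one fixed format, so plain
    string comparison orders them correctly.
    """
    changed = [r for r in records if r["updated_at"] > since]
    new_cursor = max((r["updated_at"] for r in changed), default=since)
    return changed, new_cursor


records = [
    {"id": 1, "updated_at": "2024-01-01T00:00:00Z"},
    {"id": 2, "updated_at": "2024-01-03T00:00:00Z"},
]
changed, cursor = incremental_sync(records, "2024-01-02T00:00:00Z")
print([r["id"] for r in changed], cursor)
```

Running the sync again with the returned cursor yields nothing new until a record changes, which is what keeps each pass cheap.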

Give your LLM unified access to customer data with Truto MCP

Ready to put this into practice? Wrap your Unified API behind a Truto MCP server, expose a few high-impact tools, and let any MCP-aware host guide your LLM to safe, validated actions with current customer data. You get one integration that travels across model vendors, strong guardrails for security and observability, and faster paths from prompt to result.

FAQ

- What is the Model Context Protocol (MCP)?

- MCP is a protocol that allows AI assistants to connect with external applications and data sources in a secure, standardized way by defining specific tools and resources available to the LLM.

- What is the difference between an MCP host and an MCP server?

- The host is the application that manages the user chat and model interaction, while the MCP server is a service that contains the actual tools and logic to communicate with external APIs and databases.

- How do Unified APIs improve MCP integrations?

- Unified APIs normalize data from various providers into a consistent format, making it easier for AI models to understand and interact with external data without reconciling different vendor schemas.