What is MCP (Model Context Protocol)? The 2026 Guide for SaaS PMs

MCP is the open standard that lets AI agents talk to your SaaS product. Here's what B2B product managers need to know about the protocol, the M×N problem it solves, and why you need an MCP strategy now.

The Model Context Protocol (MCP) is an open standard that defines how AI models connect to external tools, data sources, and SaaS applications. If you're a product manager at a B2B SaaS company hearing the acronym everywhere and wondering whether it matters for your roadmap, here's the short version: MCP is the reason AI agents can now read your CRM data, create Jira tickets, and pull invoices from your accounting software — all without your engineering team building bespoke connectors for every AI platform.

Before MCP, exposing your SaaS product to an AI model meant building custom, point-to-point API connectors for OpenAI, Anthropic, Google, and every custom LangChain wrapper your customers used. MCP replaces that fragmented mess with a single, universal protocol.

This is the guide you need if you're deciding whether to invest engineering cycles in MCP — or whether to keep pretending your REST API is enough.

What is the Model Context Protocol (MCP)?

Anthropic launched MCP in November 2024 to solve the "M×N" data integration problem. In the past, if M different AI models needed to talk to N different data sources, developers had to build M×N custom integrations. Earlier stop-gap approaches, such as OpenAI's 2023 "function-calling" API and the ChatGPT plugin framework, solved similar problems but required vendor-specific connectors.

MCP killed that fragmentation. The analogy that stuck, for good reason, is "USB-C for AI." Before USB-C, every device had its own charging cable. Before MCP, every AI model had its own way of connecting to external tools. MCP acts as a universal adapter, reducing the M×N complexity to a simple hub-and-spoke model. Build one MCP server for your product, and it works with Claude, ChatGPT, Gemini, Cursor, and every other MCP-compatible client.

The adoption numbers tell the story clearly. Anthropic launched MCP in November 2024 with about 2 million monthly SDK downloads. OpenAI adopted it in April 2025, pushing downloads to 22 million. Microsoft integrated it into Copilot Studio in July 2025 at 45 million. AWS added support in November 2025 at 68 million. By March 2026, all major providers were on board, and Anthropic reported over 10,000 active public MCP servers and 97 million monthly SDK downloads across Python and TypeScript.

Then, in December 2025, Anthropic made the move that cemented MCP's long-term viability: they donated MCP to the newly formed Agentic AI Foundation (AAIF) under the Linux Foundation. The AAIF was co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, and Cloudflare. For a PM evaluating long-term bets, this matters. MCP isn't a single vendor's side project anymore. It sits alongside Kubernetes and PyTorch in the Linux Foundation's portfolio of open infrastructure.

Key definitions for product managers:

- Universal standard: You build one MCP server, and any compliant AI client (Claude Desktop, custom enterprise agents, Cursor) can interact with your product.

- Action-oriented: MCP doesn't just read data. It exposes "tools" that allow the LLM to take actions inside your product, like creating a ticket or updating a CRM record.

- Client-driven validation: The protocol forces the AI model to request a list of available tools, check the required JSON schema, and submit a strictly formatted request before any action is executed.
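
To make the validation step concrete, here is a minimal sketch of checking a model's proposed arguments against a tool's input schema before anything is executed. The `create_ticket` tool and the `validate_arguments` helper are hypothetical, and the check covers only a small subset of JSON Schema (required fields and primitive types); a real client would use a full validator.

```python
# Hypothetical tool definition, shaped like one entry of a tool listing.
CREATE_TICKET_TOOL = {
    "name": "create_ticket",
    "description": "Create a support ticket.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "priority": {"type": "integer"},
        },
        "required": ["title"],
    },
}

# Subset of JSON Schema primitive types, for illustration only.
_JSON_TYPES = {"string": str, "integer": int, "boolean": bool}


def _matches(value, json_type):
    """Check a value against one primitive JSON Schema type."""
    if json_type == "integer":
        # bool is a subclass of int in Python, so exclude it explicitly.
        return isinstance(value, int) and not isinstance(value, bool)
    return isinstance(value, _JSON_TYPES[json_type])


def validate_arguments(tool, arguments):
    """Return a list of problems; an empty list means the call is well-formed."""
    schema = tool["inputSchema"]
    problems = []
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required field: {name}")
    for name, value in arguments.items():
        spec = schema["properties"].get(name)
        if spec is None:
            problems.append(f"unknown field: {name}")
        elif not _matches(value, spec["type"]):
            problems.append(f"wrong type for {name}: expected {spec['type']}")
    return problems
```

A call is only forwarded when `validate_arguments` returns an empty list; otherwise the problems can be handed back to the model so it can correct the request.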

How Does MCP Work? The Client-Server Architecture Explained

The Model Context Protocol uses a strict client-server architecture that communicates over JSON-RPC 2.0, the same message format used by the Language Server Protocol (LSP) that powers code editors like VS Code. This design intentionally separates the AI model from the external data source, so the LLM never has direct, unmediated access to a database.

Four components every PM should be able to whiteboard:

- The Host: The application the end user interacts with. Claude Desktop, ChatGPT, Cursor, or your own internal AI agent. The host runs the LLM and manages the conversation.

- The MCP Client: A component inside the host that discovers MCP servers, validates the model's requested inputs, calls the right tool, and returns structured results back to the model.

- The MCP Server: A standalone service that exposes your product's capabilities as "tools" with typed inputs and outputs. It knows how to talk to your actual API, database, or SDK.

- Tools: Named, discrete actions exposed by the MCP server. Every tool has a typed input schema and a clear description. Examples: list_all_salesforce_contacts, create_a_jira_issue, get_single_hubspot_deal_by_id.
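
As a rough illustration of the server and tool components, the sketch below registers one tool and produces the listing a client would read. The `Tool` and `ToolRegistry` classes and the canned `fake_get_deal` handler are hypothetical stand-ins, not part of any SDK; the `name`, `description`, and `inputSchema` keys follow the shape of an MCP tool listing.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Tool:
    name: str
    description: str
    input_schema: dict
    handler: Callable[[dict], dict]  # does the real work against your API

    def describe(self) -> dict:
        """Public description the client shows to the model."""
        return {
            "name": self.name,
            "description": self.description,
            "inputSchema": self.input_schema,
        }


@dataclass
class ToolRegistry:
    tools: Dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def list_tools(self) -> dict:
        """Build the payload returned when a client asks for the tool list."""
        return {"tools": [tool.describe() for tool in self.tools.values()]}


def fake_get_deal(arguments: dict) -> dict:
    # Stand-in for a real API call; returns canned data.
    return {"id": arguments["id"], "stage": "open"}


registry = ToolRegistry()
registry.register(
    Tool(
        name="get_single_hubspot_deal_by_id",
        description="Fetch one HubSpot deal by its ID.",
        input_schema={
            "type": "object",
            "properties": {"id": {"type": "string"}},
            "required": ["id"],
        },
        handler=fake_get_deal,
    )
)
```

The handler never appears in the listing. The model sees only the name, description, and schema, which is why those three fields carry so much weight.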

Here's what the interaction looks like in practice:

    sequenceDiagram
        participant User
        participant Host as Host (Claude / ChatGPT)
        participant Client as MCP Client
        participant Server as MCP Server
        participant API as Your SaaS API
        User->>Host: "Show me all open deals over $50k"
        Host->>Client: Model requests tool call
        Client->>Server: JSON-RPC: tools/call<br>(list_deals, {status: open, min_amount: 50000})
        Server->>API: GET /api/deals?status=open&min_amount=50000
        API-->>Server: JSON response
        Server-->>Client: Structured result
        Client-->>Host: Tool output
        Host-->>User: "Here are your 12 open deals..."

The JSON-RPC 2.0 Protocol

When an AI agent connects to an MCP server, the client first sends an initialize request to negotiate the protocol version and each side's capabilities. It then sends a tools/list request, and the server responds with the available tools, including their descriptions and required JSON schemas.
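
The opening exchange can be sketched as the messages a client would put on the wire. In the published MCP specification, tool discovery is its own tools/list request that follows the initialize handshake. The client name, version, and protocol version string below are illustrative values, and the `request` and `notification` helpers are hypothetical.

```python
import itertools
import json

_ids = itertools.count(1)


def request(method, params=None):
    """Build a JSON-RPC 2.0 request; requests carry an id and expect a reply."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def notification(method):
    """Build a JSON-RPC 2.0 notification; no id, so no reply is expected."""
    return {"jsonrpc": "2.0", "method": method}


handshake = [
    # 1. Negotiate protocol version and capabilities.
    request(
        "initialize",
        {
            "protocolVersion": "2025-06-18",  # example value
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    ),
    # 2. Tell the server the client is ready.
    notification("notifications/initialized"),
    # 3. Ask which tools are available.
    request("tools/list"),
]

if __name__ == "__main__":
    for message in handshake:
        print(json.dumps(message))
```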

When the LLM decides to take action, the MCP client sends a tools/call request. Here's what that payload actually looks like over the wire:

    {
      "jsonrpc": "2.0",
      "id": 142,
      "method": "tools/call",
      "params": {
        "name": "update_salesforce_opportunity",
        "arguments": {
          "id": "0068c00000bbA3EAAU",
          "stage": "Closed Won",
          "amount": 50000
        }
      }
    }

The MCP server receives this, executes the update against the Salesforce REST API, and returns a structured success message to the LLM. The LLM then uses that context to reply to the user. The key thing to internalize: your SaaS product's API still does the real work. MCP just standardizes how AI models find and call it.
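
On the server side, handling that request might look roughly like the sketch below. The `handle_tools_call` dispatcher and the `fake_update` handler are hypothetical; a real handler would call the Salesforce REST API instead of returning a canned string. The result shape (a `content` list plus an `isError` flag) follows the MCP tool result format, and tool failures are reported inside the result so the model can read them.

```python
def handle_tools_call(request: dict, handlers: dict) -> dict:
    """Dispatch a tools/call request to the matching handler."""
    params = request["params"]
    handler = handlers.get(params["name"])
    if handler is None:
        # Unknown tool: a protocol-level JSON-RPC error (invalid params).
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"},
        }
    try:
        text = handler(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    except Exception as exc:
        # Tool execution failed: report it in the result, not as a protocol error.
        result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def fake_update(arguments: dict) -> str:
    # Stand-in for the real API call.
    return f"Opportunity {arguments['id']} moved to {arguments['stage']}"


HANDLERS = {"update_salesforce_opportunity": fake_update}

REQUEST = {
    "jsonrpc": "2.0",
    "id": 142,
    "method": "tools/call",
    "params": {
        "name": "update_salesforce_opportunity",
        "arguments": {"id": "0068c00000bbA3EAAU", "stage": "Closed Won", "amount": 50000},
    },
}
```

Calling `handle_tools_call(REQUEST, HANDLERS)` returns a response with the same `id` as the request, which is how the client matches replies to calls.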

MCP vs. Traditional API Integrations: Solving the M×N Problem

This is the section that should change how you think about your integration strategy.

Traditional integrations follow a point-to-point model. Your product has a REST API. A customer wants their AI agent to use it. Their engineering team writes a custom connector — handling your specific auth flow, pagination scheme, error codes, and data format. Now multiply that by every AI platform the customer uses. That's the M×N problem: M AI models times N tools equals M×N custom integrations.

AI developers face a paralyzing version of this challenge where every new AI model requires custom code to connect with every external tool. This combinatorial explosion is slow, expensive, and creates a mountain of technical debt that stifles innovation.

MCP collapses M×N into M+N. Each AI model implements the MCP client protocol once. Each tool implements the MCP server protocol once. Any client can talk to any server. No bespoke glue code.

| Scenario | Traditional API | With MCP |
|---|---|---|
| 5 AI models × 20 tools | 100 custom integrations | 25 implementations (5 clients + 20 servers) |
| Adding 1 new AI model | Build 20 new connectors | Build 1 MCP client |
| Adding 1 new tool | Build 5 new connectors | Build 1 MCP server |
| Vendor lock-in | High (format tied to each AI platform) | Low (open protocol, works everywhere) |
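
The arithmetic behind the table is simple enough to check in a few lines. The function names here are illustrative.

```python
def point_to_point(models: int, tools: int) -> int:
    """Every model needs its own connector to every tool."""
    return models * tools


def with_mcp(models: int, tools: int) -> int:
    """Each model implements one client; each tool implements one server."""
    return models + tools


def marginal_cost_of_new_model(models: int, tools: int) -> tuple:
    """Extra work for one more AI model: (point-to-point, MCP)."""
    return (
        point_to_point(models + 1, tools) - point_to_point(models, tools),
        with_mcp(models + 1, tools) - with_mcp(models, tools),
    )


if __name__ == "__main__":
    print(point_to_point(5, 20), with_mcp(5, 20))
    print(marginal_cost_of_new_model(5, 20))
```

The gap widens as either side grows: the point-to-point count scales with the product of the two numbers, while the MCP count scales with their sum.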

The productivity impact is real. Analysts estimated that connecting a legacy service via traditional REST APIs consumed three to five days of senior developer time, per integration, before factoring in ongoing maintenance. Industry reports indicate that adopting MCP can reduce initial integration development time by up to 30% and lower ongoing maintenance costs by up to 25%, simply by eliminating the need to write custom connector code for every new AI platform that hits the market.

Traditional APIs are designed for deterministic, hardcoded workflows. A developer writes code that says "fetch endpoint A, map field B to field C, and POST to endpoint D." AI agents don't work this way. They're non-deterministic. They need to explore an API surface, read descriptions of what endpoints do, and dynamically construct payloads based on natural language prompts. MCP's self-describing interface — where the LLM reads tool descriptions and figures out how to use them on the fly — is built specifically for this paradigm.

Why B2B SaaS Product Managers Need an MCP Strategy in 2026

Here's the business case, stripped of hype.

Your enterprise buyers are already asking for this. Gartner predicts 40% of enterprise applications will include task-specific AI agents by end of 2026, up from less than 5% in 2025. Those agents need to interact with the SaaS tools their organizations already pay for, including yours.

Forrester predicts that 30% of enterprise app vendors will launch their own MCP servers, noting that vendors adopting this open standard for AI agent collaboration will have a higher probability of early, enterprise-wide adoption of cross-platform agentic workflows.

If your product doesn't expose an MCP server, your customers' AI agents can't discover or use your product's data. Imagine a customer deploying a custom customer success AI agent to monitor account health. That agent needs to read billing data from Stripe, usage data from Mixpanel, and support tickets from your SaaS platform. If your platform doesn't have an MCP server, the customer's AI agent can't talk to it. Your product becomes a blind spot — an isolated data silo. That's a churn risk for you and a buying objection for prospects.

Gartner's research reinforces this from the infrastructure side: by 2026, 75% of API gateway vendors, and 50% of iPaaS vendors, will have MCP features. The middleware and platform layers are moving fast. SaaS products that don't keep pace will be the bottleneck.

Strategic takeaway: Don't build custom OpenAI GPTs or Claude integrations. Build one MCP server. That single investment makes your product compatible with the entire ecosystem of enterprise AI tools overnight.

The Hidden Costs of Building a Custom MCP Server

Here's where PMs get tripped up. MCP standardizes the protocol — how an AI model discovers and calls a tool. It does not standardize the underlying API complexity your server has to handle.

Building an MCP server that works in a demo takes a weekend. Building one that works in production takes months. As detailed in our build vs. buy analysis for custom MCP servers, here's what the protocol doesn't solve for you:

- OAuth token lifecycle management: AI agents run asynchronously. If an agent tries to execute a tool at 2:00 AM but the underlying OAuth access token expired at 1:59 AM, the tool call fails. Your custom MCP server must track token expiration (TTL), handle refresh token rotation, and manage exponential backoff for failed refreshes — for every connected tenant.

- Rate limit handling: Salesforce, HubSpot, and Jira all have different rate limiting strategies (per-user, per-org, per-endpoint, sliding window, fixed window). AI models are incredibly fast and can easily trigger 429 Too Many Requests errors. Your server needs circuit breakers and retry logic to prevent the LLM from getting stuck in an error loop.

- Pagination: A list_contacts tool that returns 25 results is useless if the customer has 50,000 contacts. LLMs have finite context windows. Your server must normalize cursor-based, offset, and keyset pagination (whatever each provider demands) and explicitly instruct the LLM on how to handle next_cursor tokens.

- Error normalization: Every API returns errors differently. Your MCP server needs to translate provider-specific error codes into something an LLM can actually reason about.

- Tenant isolation and security: Each customer's data must be scoped correctly. A misconfigured MCP server that leaks data between tenants is a career-ending security incident.
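
Three of the items above can be sketched in a few small functions: refreshing a token slightly before it expires, retrying on rate limits with exponential backoff, and collecting pages up to a soft cap so the result fits a context window. Everything here is a hypothetical illustration: `RateLimited` stands in for however your HTTP client surfaces a 429, and `fetch_page` is assumed to return a page of items plus the next cursor.

```python
import time


class RateLimited(Exception):
    """Raised by a hypothetical API client when the provider returns HTTP 429."""


def needs_refresh(expires_at: float, now: float, skew: float = 60.0) -> bool:
    """Refresh early so a token never expires in the middle of a tool call."""
    return now >= expires_at - skew


def call_with_backoff(call, max_attempts: int = 5, base_delay: float = 0.5, sleep=time.sleep):
    """Retry a rate-limited call, doubling the wait after each failure."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # give up and let the caller report a clear error
            sleep(base_delay * 2 ** attempt)


def fetch_capped(fetch_page, max_items: int = 100):
    """Collect whole pages until at least max_items are gathered.

    fetch_page(cursor) must return (items, next_cursor). The cap is soft:
    pages are never cut in half, so the returned cursor always resumes
    exactly where the returned items stop.
    """
    items, cursor = [], None
    while len(items) < max_items:
        page, cursor = fetch_page(cursor)
        items.extend(page)
        if cursor is None:
            break
    return items, cursor
```

Returning the cursor alongside the items lets the tool tell the model that more results exist and how to ask for them, instead of silently truncating.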

Gartner notes that early adopters of MCP found it lacking basic enterprise management and governance features, with authentication and authorization optional in the specification.

The bottom line: if the MCP server is your product (you're building an AI-native tool), build it. If MCP is a means to expose your existing SaaS product to AI agents, the engineering cost of doing it from scratch is almost certainly not worth it.

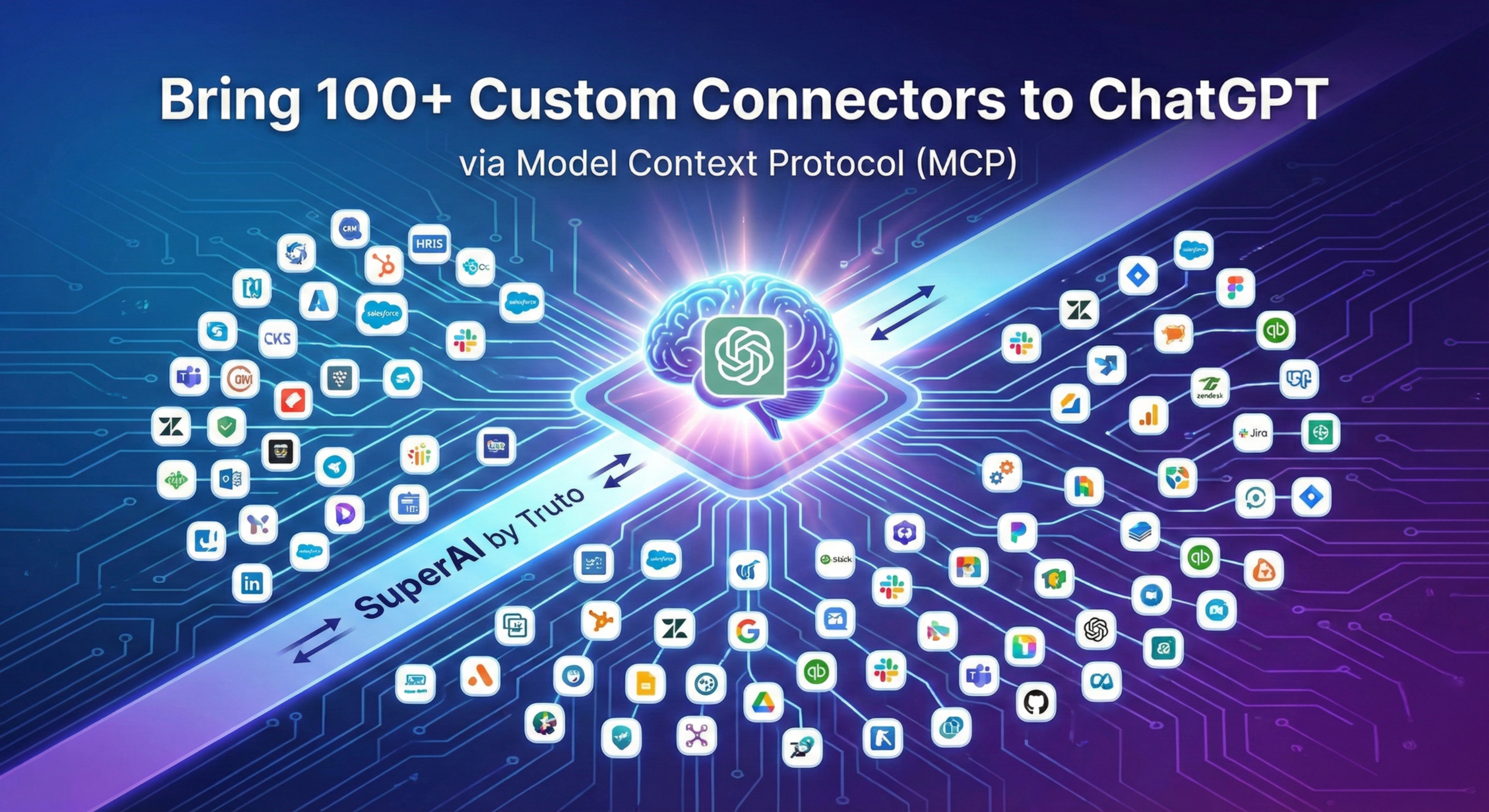

Deploying Managed MCP Servers with Truto

Instead of burning engineering cycles building custom infrastructure, modern SaaS teams use managed MCP infrastructure to expose third-party integrations to AI agents instantly.

Truto operates as a proxy layer that sits between the AI model and the third-party SaaS API. When a customer connects their Salesforce, HubSpot, or Jira account through Truto, the platform automatically generates a fully functional MCP server for that specific integrated account — with full JSON Schema definitions for parameters, pagination support, and automatic cursor handling.

    flowchart LR
        A["AI Agent<br>(Claude, ChatGPT, Cursor)"] -->|JSON-RPC 2.0| B["Truto MCP Server<br>(/mcp/:token)"]
        B -->|Dynamic tool<br>generation| C["Integration Config<br>+ Documentation"]
        B -->|API call with<br>auth + rate limiting| D["SaaS API<br>(Salesforce, Jira, etc.)"]
        C -->|Defines resources,<br>schemas, descriptions| B

A few specific design decisions worth understanding:

Dynamic Tool Generation (Zero Custom Code)

Truto doesn't use hardcoded integration scripts. Tool generation is dynamic and documentation-driven. When an AI client requests a list of tools, Truto inspects the underlying integration's resource definitions and documentation records. It automatically generates descriptive, snake_case tool names (e.g., get_single_hub_spot_contact_by_id), extracts the query and body schemas, and compiles them into valid JSON Schema for the LLM.

A resource method only becomes an MCP tool if it has a corresponding documentation record with a description and schema. This is intentional. It prevents half-baked or undocumented endpoints from being exposed to LLMs, which would lead to hallucinated parameters and failed tool calls. This acts as a strict quality gate, ensuring the LLM only sees curated, highly reliable endpoints.
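
The documentation gate can be illustrated with a short sketch. This is not Truto's implementation; the `methods` and `docs` structures, the `build_tools` function, and the camelCase input names are all assumptions made for the example. The point is the rule itself: no description or schema, no tool.

```python
import re


def snake_case(name: str) -> str:
    """Convert camelCase to snake_case, e.g. 'HubSpot' becomes 'hub_spot'."""
    return re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name).lower()


def build_tools(methods: list, docs: dict) -> list:
    """Expose a method as a tool only if it has a description and a schema."""
    tools = []
    for method in methods:
        doc = docs.get(method["id"])
        if not doc or not doc.get("description") or not doc.get("schema"):
            continue  # undocumented methods are never shown to the LLM
        tools.append(
            {
                "name": snake_case(method["name"]),
                "description": doc["description"],
                "inputSchema": doc["schema"],
            }
        )
    return tools


# Hypothetical inputs: two methods, only one of them documented.
METHODS = [
    {"id": "m1", "name": "getSingleHubSpotContactById"},
    {"id": "m2", "name": "deleteHubSpotContact"},
]
DOCS = {
    "m1": {
        "description": "Fetch one HubSpot contact by ID.",
        "schema": {"type": "object", "properties": {"id": {"type": "string"}}, "required": ["id"]},
    }
}
```

With these inputs, `build_tools(METHODS, DOCS)` exposes only the documented read method; the undocumented delete method stays invisible to the model.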

Method and Tag Filtering

Enterprise security teams rarely want to give an AI agent full read/write access to a CRM. Truto allows you to apply granular configuration filters when generating an MCP server.

- Method Filtering: Restrict the server to only expose read operations (GET, LIST) while blocking all write operations (CREATE, UPDATE, DELETE).

- Tag Filtering: Group tools logically. A support team might get an MCP server with only tickets and ticket_comments tools. A sales team gets contacts and deals. Sensitive financial data stays hidden from the agent.

This is a meaningful security control — you're not handing an AI agent the keys to every endpoint.
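
As a generic illustration of this kind of filtering (not Truto's API), the sketch below narrows a tool list by operation type and by tag. The tool records, the `method` and `tags` fields, and the `filter_tools` function are hypothetical.

```python
READ_METHODS = {"get", "list"}

# Hypothetical tool catalog with an operation type and tags per tool.
TOOLS = [
    {"name": "list_all_tickets", "method": "list", "tags": ["tickets"]},
    {"name": "create_a_ticket_comment", "method": "create", "tags": ["ticket_comments"]},
    {"name": "list_all_deals", "method": "list", "tags": ["deals"]},
    {"name": "list_all_invoices", "method": "list", "tags": ["finance"]},
]


def filter_tools(tools, allowed_methods=None, allowed_tags=None):
    """Keep tools that pass every filter that was supplied.

    A filter left as None is not applied; a tool passes the tag filter
    when it shares at least one tag with allowed_tags.
    """
    selected = []
    for tool in tools:
        if allowed_methods is not None and tool["method"] not in allowed_methods:
            continue
        if allowed_tags is not None and not set(tool["tags"]) & set(allowed_tags):
            continue
        selected.append(tool)
    return selected


# A read-only server for the support team.
support_tools = filter_tools(
    TOOLS, allowed_methods=READ_METHODS, allowed_tags={"tickets", "ticket_comments"}
)
```

Here `support_tools` contains only `list_all_tickets`: the comment-creation tool is dropped by the method filter, and the deals and invoices tools by the tag filter.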

Cryptographic Token-Based Auth and Automatic Expiration

Each MCP server URL contains an opaque token that encodes which connected account to use, which tools to expose, and when access expires. The raw token is hashed before storage — even a database breach wouldn't expose usable credentials. For higher-security environments, you can require a second authentication layer (API token in the Authorization header) on top of the URL token.

MCP servers can be created with a TTL. Give a contractor access for a week, and the server auto-deletes after expiry. No manual cleanup, no dangling access.
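
The general pattern of hashing a token before storage and expiring it after a TTL can be sketched as follows. This is a generic illustration under stated assumptions, not Truto's code: the in-memory `store` dict, the SHA-256 choice, and the function names are all hypothetical.

```python
import hashlib
import secrets
from typing import Optional


def _digest(raw_token: str) -> str:
    return hashlib.sha256(raw_token.encode("utf-8")).hexdigest()


def issue_token(store: dict, account_id: str, ttl_seconds: Optional[float], now: float) -> str:
    """Create a token, keep only its hash, and return the raw value once."""
    raw_token = secrets.token_urlsafe(32)
    store[_digest(raw_token)] = {
        "account_id": account_id,
        "expires_at": None if ttl_seconds is None else now + ttl_seconds,
    }
    return raw_token


def resolve_token(store: dict, raw_token: str, now: float) -> Optional[str]:
    """Return the account for a valid token, or None if unknown or expired."""
    key = _digest(raw_token)
    record = store.get(key)
    if record is None:
        return None
    if record["expires_at"] is not None and now >= record["expires_at"]:
        del store[key]  # expired access is removed, not just ignored
        return None
    return record["account_id"]
```

Because the store holds only digests, reading it does not reveal a usable token, and an expired entry disappears the first time someone tries to use it.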

Truto handles OAuth token refresh, rate limiting, pagination, and error normalization at the platform level. MCP itself doesn't solve any of these problems; they're the operational tax that makes self-hosted MCP servers expensive to maintain. For a walkthrough of how this works with Claude specifically, see our guide on managed MCP for Claude.

The honest trade-off: A managed approach means you're routing tool calls through a third-party platform. That adds a network hop and a dependency. For teams that need complete control over the request path — or that operate in air-gapped environments — building in-house might be the right call. For everyone else, the math favors buying: you skip months of integration plumbing and ship MCP access to your customers in days, not quarters.

The MCP Security Surface You Can't Ignore

No responsible guide should skip this. MCP opens a new attack surface, and PMs need to understand the risk profile before greenlighting a rollout.

In April 2025, security researchers released an analysis identifying multiple outstanding security issues with MCP, including prompt injection, tool permissions that allow combining tools to exfiltrate data, and lookalike tools that can silently replace trusted ones.

Three risks to brief your security team on:

- Prompt injection via tool output. A malicious data source could return content designed to manipulate the LLM into calling other tools or exfiltrating data. If your MCP server returns user-generated content (support tickets, CRM notes), the LLM sees it as context.

- Tool poisoning. A rogue MCP server could register tools with names mimicking trusted ones (get_salesforce_contacts vs. get_saIesforce_contacts, where a capital I replaces the lowercase l). The LLM picks the wrong one.

- Over-permissioned access. An MCP server that exposes both read and write tools to an AI agent that only needs read access is an unnecessary blast radius.

Mitigation strategies include scoping MCP servers to read-only operations by default, requiring explicit approval for write tools, and enforcing the principle of least privilege at the tool level. For a deeper discussion, see our MCP server security guide.
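
Two of these mitigations are easy to prototype. The sketch below flags tool names that collide with a trusted name once visually confusable characters are normalized, and denies write tools unless they were explicitly approved. The confusable-character table is a deliberately tiny, hypothetical subset, and the function names and tool fields are assumptions for the example.

```python
import unicodedata

# Tiny illustrative subset of visually confusable characters.
_CONFUSABLES = str.maketrans({"I": "l", "1": "l", "O": "o", "0": "o"})
READ_METHODS = {"get", "list"}


def skeleton(name: str) -> str:
    """Reduce a name to a form where common lookalike characters collapse."""
    return unicodedata.normalize("NFKC", name).translate(_CONFUSABLES).lower()


def find_lookalikes(trusted: set, candidate: str) -> list:
    """Return trusted names that the candidate imitates without matching exactly."""
    return sorted(
        name for name in trusted
        if name != candidate and skeleton(name) == skeleton(candidate)
    )


def is_call_allowed(tool: dict, approved_writes: set) -> bool:
    """Read tools pass by default; write tools need explicit approval."""
    return tool["method"] in READ_METHODS or tool["name"] in approved_writes


TRUSTED = {"get_salesforce_contacts", "list_all_deals"}
```

A host could run `find_lookalikes` whenever a new server registers tools and refuse, or at least warn, when the result is non-empty.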

Your Next Move: An MCP Checklist for SaaS PMs

MCP is not a future consideration. Every major AI provider already supports it, and the integration work done once transfers across all compliant providers. Here's a practical checklist to bring to your next planning cycle:

- Audit your API surface. Which resources do your enterprise customers most want AI agents to access? Contacts, deals, tickets, invoices — start with the 20% of endpoints that cover 80% of use cases.

- Assess build vs. buy. If your team has capacity for 3–6 months of MCP infrastructure work (OAuth management, rate limiting, pagination, security) — and MCP is core to your product — build it. If not, evaluate managed providers.

- Define your security posture. Decide which operations should be read-only for AI agents. Set tool-level permissions before you ship anything.

- Talk to your enterprise customers. Ask which AI platforms they're adopting. If the answer is Claude, ChatGPT, or Copilot — they're all MCP-compatible. The demand signal is already there.

- Ship incrementally. Start with read-only tools for your most-requested resources. Expand to write operations once you've validated the access patterns.

The SaaS products that become "agent-ready" in 2026 will win the enterprise deals that require AI interoperability. The ones that don't will spend 2027 explaining to their board why they're losing RFPs.

Frequently Asked Questions

- What does MCP stand for in AI?

- MCP stands for Model Context Protocol. It is an open standard introduced by Anthropic in November 2024 that standardizes how AI models like Claude and ChatGPT connect to external tools, data sources, and SaaS applications using JSON-RPC 2.0.

- Is MCP the same as an API?

- No. MCP is a protocol layer that sits on top of APIs. It standardizes how AI agents discover and call tools, while the underlying API still handles the actual data operations. Think of MCP as the common language AI models use to interact with many different APIs through a single interface.

- What is the difference between an MCP server and an MCP client?

- An MCP server exposes tools and data to AI models (e.g., a Salesforce MCP server that lets agents read contacts). An MCP client lives inside the AI host application (e.g., Claude Desktop or ChatGPT) and handles discovering servers, validating inputs, and routing tool calls.

- Do I need to build a custom MCP server for my SaaS product?

- If enterprise buyers expect AI agents to interact with your product's data, you need MCP support. Forrester predicts 30% of enterprise app vendors will launch their own MCP servers in 2026. You can build from scratch or use a managed MCP provider like Truto to expose your existing APIs as MCP tools without writing integration-specific code.

- Who maintains MCP now that Anthropic donated it?

- MCP is governed by the Agentic AI Foundation (AAIF) under the Linux Foundation, co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, and Cloudflare. The project maintainers retain full technical autonomy.