Managed MCP for Claude: Full SaaS API Access Without the Security Headaches

Native LLM connectors only scratch the surface. Learn how managed MCP servers give Claude full access to 100+ SaaS APIs — no custom infrastructure required.

Native LLM connectors are convenient until they aren't. Claude's built-in Slack connector lets you search messages and send replies. That's about it. You can't manage channels, list files, query user presence, or interact with Slack's 200+ API methods. The same story plays out with every native integration — you get 10% of the API surface, and for the other 90%, you're on your own.

A managed MCP server is a hosted infrastructure layer that implements the Model Context Protocol, automatically handling OAuth token lifecycles, API rate limits, and dynamic tool generation to expose the full surface area of third-party SaaS APIs to AI models — without requiring custom integration code. If you need your AI agent to query custom Salesforce objects, parse threaded Slack metadata, or execute complex Jira transitions, this is the architecture that actually works.

This post walks through why native connectors fall short, the real costs of going the self-hosted route, and a practical tutorial for connecting Claude to Slack in under three minutes using Truto's SuperAI.

The Wall: Why Native LLM Connectors Aren't Enough

Native connectors are product integrations, not a guarantee of vendor API parity.

Anthropic's connector docs describe the model clearly: prebuilt integrations are ready to use after authentication. That's useful, and for many teams it's enough. But you should treat a productized connector as a curated tool list, not as a blanket promise that every vendor endpoint, custom field, or niche operation makes it through unchanged.

Take Slack. The Slack Web API has over 200 methods spanning conversations, files, reminders, workflows, user groups, admin tools, and more. Claude's native Slack connector gives you search_messages, post_message, and list_channels. Try asking Claude to download a file from Slack, manage user group memberships, or list emoji reactions on a thread — the native connector simply can't do it.

This isn't a knock on Anthropic. Curating connectors takes real engineering effort. Every endpoint needs schema definitions, error handling, pagination logic, and testing. Scaling that across hundreds of apps and thousands of endpoints is a multi-year, multi-team project.

Then there's the vendor side making things worse. Slack moved conversations.history to a much tighter rate limit for new non-Marketplace apps on May 29, 2025 — cutting the default and max page size to 15 objects and limiting calls to 1 request per minute. Existing installations outside the Slack Marketplace fell under the same rule on September 2, 2025. That's the kind of detail that quietly wrecks a demo the moment an enterprise customer asks for a two-year retrospective across a busy channel.
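To put that limit in perspective, here's a rough back-of-the-envelope sketch. It assumes the figures quoted above (15 messages per page, 1 request per minute) and a hypothetical channel with 10,000 messages. `estimateBackfill` is an illustrative helper, not part of any SDK.

```typescript
// Back-of-the-envelope estimate for paging through a channel's history
// under a fixed page size and request rate.
interface BackfillEstimate {
  requests: number;
  minutes: number;
}

function estimateBackfill(
  totalMessages: number,
  pageSize: number,
  requestsPerMinute: number,
): BackfillEstimate {
  if (pageSize <= 0 || requestsPerMinute <= 0) {
    throw new RangeError("pageSize and requestsPerMinute must be positive");
  }
  const requests = Math.ceil(totalMessages / pageSize);
  return { requests, minutes: requests / requestsPerMinute };
}

// Hypothetical channel: 10,000 messages at 15 per page, 1 request per minute.
const estimate = estimateBackfill(10000, 15, 1);
console.log(estimate.requests); // 667
console.log((estimate.minutes / 60).toFixed(1)); // 11.1 (hours)
```

That's roughly eleven hours of wall-clock time for a single channel, before retries or thread replies enter the picture.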

Anthropic created the Model Context Protocol specifically because connecting AI models to data sources required building custom, brittle integrations for every single tool. MCP standardizes how AI models discover and call external tools, so you're not locked into whatever a vendor has pre-built.

But MCP is a protocol, not a product. Someone still has to build the server.

The Infrastructure Trap of Self-Hosted MCP Servers

Self-hosting one MCP server is easy. Running a fleet is where things get ugly.

MCP is no longer a niche experiment. Within a year of launch, the ecosystem passed 10,000 active servers and 97 million monthly SDK downloads, and MCP moved under the Linux Foundation's Agentic AI Foundation. That's real adoption — which is exactly why teams are discovering the production pain, not just the hackathon upside.

When you build a custom MCP server for a tool like Slack or Salesforce, you're taking ownership of:

- OAuth lifecycle management — Handling authorization code grants, securely storing access tokens, and reliably executing refresh token rotations before they expire

- Pagination quirks — Translating the LLM's desire to "read all records" into the specific pagination strategy of each vendor (cursor-based, offset-based, or page-based). Get this wrong and the LLM will happily infinite-loop

- Rate limit compliance — Implementing exponential backoff and retry logic so the agent doesn't stall on 429 Too Many Requests or get the API key revoked entirely

- Schema maintenance — Manually updating your MCP tool definitions every time the vendor adds a required field or deprecates an endpoint

- Monitoring and logging — Load balancing, failover, and independent observability across dozens of server processes
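To make the rate-limit item above concrete, here's a minimal sketch of the delay calculation a self-hosted server would need. The function name, the 1-second base, and the 60-second cap are assumptions chosen for illustration. A production version would usually add random jitter too.

```typescript
// Decide how long to wait before retrying a request that came back as 429.
// Uses the Retry-After header when it is a number of seconds, otherwise
// falls back to capped exponential backoff. (Retry-After can also be an
// HTTP date; this sketch does not handle that form.)
function retryDelayMs(
  attempt: number, // 0 for the first retry, 1 for the second, and so on
  retryAfterHeader: string | null,
  baseMs: number = 1000,
  capMs: number = 60000,
): number {
  if (retryAfterHeader !== null && retryAfterHeader.trim() !== "") {
    const seconds = Number(retryAfterHeader);
    if (Number.isFinite(seconds) && seconds >= 0) {
      return seconds * 1000;
    }
  }
  return Math.min(capMs, baseMs * Math.pow(2, attempt));
}

console.log(retryDelayMs(0, null)); // 1000
console.log(retryDelayMs(3, null)); // 8000
console.log(retryDelayMs(10, null)); // 60000 (capped)
console.log(retryDelayMs(2, "30")); // 30000 (server-provided)
```

And that's one bullet out of five, for one vendor.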

The operational burden looks suspiciously like microservices all over again.

Security is worse. A security assessment by Equixly found that 43% of tested MCP implementations contained command injection vulnerabilities, 30% suffered from Server-Side Request Forgery (SSRF), and 22% had path traversal flaws. When developers rush to expose internal APIs to LLMs, they frequently fail to sanitize the JSON arguments generated by the model. If your custom MCP server takes an LLM-generated string and concatenates it into a database query or shell command, you've just given an autonomous agent remote code execution capabilities.
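Here's a minimal sketch of the kind of argument checking that closes off the string-concatenation path. The channel ID pattern, the `limit` bounds, and the `export-channel` command are all hypothetical. The point is the shape: validate against an allow-list, then pass values as discrete arguments rather than building a shell string.

```typescript
// Allow-list pattern for a Slack-style channel ID (assumed format).
const CHANNEL_ID = /^[CGD][A-Z0-9]{8,}$/;

interface ExportArgs {
  channelId: string;
  limit: number;
}

// Validate model-generated arguments before they reach a query or command.
function parseExportArgs(raw: unknown): ExportArgs {
  if (typeof raw !== "object" || raw === null) {
    throw new TypeError("arguments must be an object");
  }
  const { channel_id, limit } = raw as Record<string, unknown>;
  if (typeof channel_id !== "string" || !CHANNEL_ID.test(channel_id)) {
    throw new TypeError("invalid channel_id");
  }
  if (typeof limit !== "number" || !Number.isInteger(limit) || limit < 1 || limit > 200) {
    throw new TypeError("invalid limit");
  }
  return { channelId: channel_id, limit };
}

// Build an argv array instead of a shell string, so no value is ever
// interpreted by a shell. `export-channel` is a hypothetical CLI.
function buildExportCommand(args: ExportArgs): [string, string[]] {
  return ["export-channel", ["--channel", args.channelId, "--limit", String(args.limit)]];
}

const safe = parseExportArgs({ channel_id: "C0123456789", limit: 50 });
console.log(buildExportCommand(safe)[1].join(" ")); // --channel C0123456789 --limit 50
```

An input like `{ channel_id: "C123; rm -rf /", limit: 50 }` fails validation and never reaches the command builder.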

These aren't theoretical risks. In mid-2025, Asana faced a serious MCP-related privacy breach — after launching an MCP-powered feature, a bug caused customer data to bleed into other customers' MCP instances, forcing them to pull the integration offline for two weeks. A critical vulnerability in the popular mcp-remote npm package (CVE-2025-6514, rated CVSS 9.6) allowed remote code execution when connecting to untrusted MCP servers. Even Anthropic's own help docs tell users to only connect to remote MCP servers from trusted organizations and review requested permissions closely.

For a single internal tool, building a custom MCP server is reasonable. For 10+ SaaS integrations across multiple customers, it's an engineering nightmare.

Why Managed MCP Servers Are the Practical Path Forward

The shift from self-hosted to managed MCP servers mirrors what happened with databases (self-hosted Postgres → RDS), CI/CD (Jenkins → GitHub Actions), and auth (homegrown → Auth0). Teams realize the undifferentiated heavy lifting isn't worth the maintenance burden.

| Concern | Self-Hosted | Managed (e.g., Truto) |
|---|---|---|
| OAuth + token refresh | You build it | Handled automatically |
| Pagination across vendors | Per-vendor implementation | Pre-built for 100+ APIs |
| Rate limiting | Manual backoff logic | Built into proxy layer |
| Tool schema generation | Hand-coded per endpoint | Auto-generated from API docs |
| Security (token storage, expiry) | Your responsibility | Cryptographic tokens + TTL |
| New endpoint support | Write code, deploy, test | Available as soon as docs exist |

Not all managed approaches are equal, though. Here's how the market is splitting:

- Native connectors — Fastest path for common apps. Anthropic ships first-party connectors for Slack, Google Drive, Gmail, GitHub, and Microsoft 365. Great for everyday workflows, but you get the tool surface the connector vendor chose to ship.

- MCP hosting and control planes — Platforms like Metorial offer serverless hosting for enterprise MCP servers. They abstract deployment and scaling, but you still write and maintain the custom integration logic for every endpoint. You're outsourcing infrastructure, not integration code.

- Common-model MCP platforms — Merge.dev offers MCP connectors that route all AI tool calls through rigid unified API schemas. This fundamentally limits the LLM. Unified schemas strip away vendor-specific features to fit a common denominator — your LLM loses the ability to interact with custom objects that only exist in your specific Salesforce instance.

- Managed raw-API MCP — Truto's approach: managed MCP servers that expose the raw vendor APIs directly to the LLM, injected with highly optimized semantic descriptions. We handle the OAuth flows, token refreshes, pagination, and rate limiting out of the box. You get a secure, hosted MCP URL that gives your AI agent access to the full, unadulterated power of the underlying SaaS platform.

The trade-off is real. With a self-hosted server, you have total control over every request and response. With a managed server, you're trusting a third party with API credentials and data flow. That's a legitimate concern for security-sensitive environments — and it's why features like scoped access controls, expiring tokens, and dual authentication matter.

Tutorial: Connecting Claude to Slack in 3 Minutes with SuperAI

Here's the actual workflow. No boilerplate, no infrastructure setup.

Step 1: Establish the OAuth Connection

In the Truto dashboard, navigate to Integrated Accounts and click Connect account. Set a Tenant ID (e.g., slack-claude-demo) and generate a connection link. This link initiates the OAuth flow — when you open it, select Slack from the integration catalog. Truto redirects you to Slack's authorization screen. Review the requested scopes, hit Allow, and Truto securely captures and encrypts the access and refresh tokens. The connection is now active, and Truto handles all future token rotations automatically.

Step 2: Generate a Remote MCP Server URL

Click into the connected Slack account and navigate to the MCP Servers tab. Click Create MCP Server and name it (e.g., slack-claude). Truto immediately provisions a dedicated, cryptographically secure Remote MCP URL.

This URL is self-contained. It encodes the authentication context, the connected account, and optionally an expiration time. No additional client-side configuration is needed. Copy it.

Step 3: Add the MCP Server to Claude

You have two options depending on your setup.

Option 1 — Claude Desktop/Web: Go to Settings > Connectors > Add custom connector, paste the Truto MCP URL, and click Add. Custom connectors via remote MCP are available on Free, Pro, Max, Team, and Enterprise plans (Free is limited to one custom connector). On Team and Enterprise, an owner adds the connector at the org level first, then individual users authenticate.

Option 2 — Anthropic API (programmatic): If you're building an agent via the Anthropic API, pass the MCP server directly using the mcp_servers array with the beta header mcp-client-2025-11-20:

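```typescript
import Anthropic from '@anthropic-ai/sdk'

// Reads ANTHROPIC_API_KEY from the environment by default.
const anthropic = new Anthropic()

// MCP connector requests go through the beta namespace;
// `betas` sets the beta header mentioned above.
const message = await anthropic.beta.messages.create({
  model: 'claude-sonnet-4-20250514',
  max_tokens: 1000,
  betas: ['mcp-client-2025-11-20'],
  messages: [
    { role: 'user', content: 'Summarize engineering feature work from Slack since 2024-01-01' }
  ],
  mcp_servers: [
    {
      type: 'url',
      name: 'slack-truto',
      url: process.env.MCP_URL!,
      authorization_token: process.env.MCP_TOKEN!
    }
  ],
  // Expose every tool from the MCP server declared above.
  tools: [
    { type: 'mcp_toolset', mcp_server_name: 'slack-truto' }
  ]
})
```

This currently supports tool calls over public HTTP MCP servers (not local stdio). Note that this API path is beta-only and excluded from Anthropic's Zero Data Retention policy.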

Step 4: Execute Complex Agentic Workflows

Once connected, Claude discovers all available Slack tools — searching messages, listing channels, listing files, fetching user profiles, and more. Now issue a prompt that would be impossible with a native connector:

"Document all the features built by the engineering team in the last two years based on Slack conversations in #engineering."

Claude identifies the appropriate search tools from the MCP server, asks for permission to execute them, and begins paginating through messages. Behind the scenes, Truto translates Claude's JSON-RPC tool calls into raw Slack API requests, handling cursor-based pagination and rate limits automatically. Claude parses the raw JSON responses, extracts technical feature mentions, and compiles a structured report.

For production use, be more specific with your prompts:

Search #engineering from January 1, 2024 through today. Extract product features shipped or prototyped by engineering. Group them into released, experimental, and infrastructure work. For each item include evidence, approximate timeframe, and confidence. Ignore jokes, duplicate mentions, and one-off brainstorms.

Managed MCP doesn't fix vague prompts. It just stops you from writing the Slack plumbing by hand. If your question is broad and the workspace is noisy, expect multiple tool calls and iterative narrowing — not one magic response. Slack's history endpoint is cursor-based and, for some app types, heavily rate-limited, so your prompts and tool scope should reflect that reality.

If you want the same pattern for ChatGPT, see Bring 100+ Custom Connectors to ChatGPT with SuperAI by Truto.

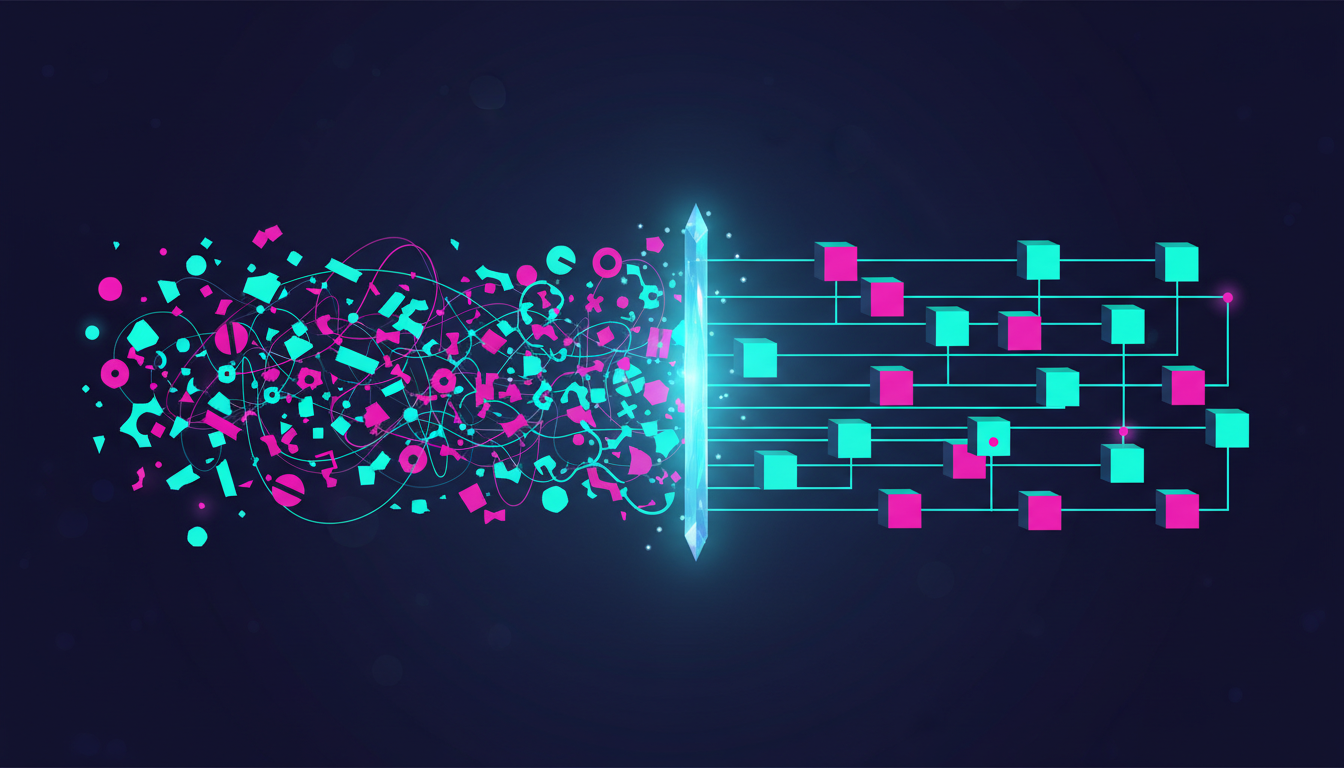

Raw APIs vs. Unified Schemas: Giving AI the Full Picture

Traditional integration platforms rely on unified APIs. They map the contacts endpoint from HubSpot, Salesforce, and Pipedrive into a single, standardized Contact object. This is incredibly useful when you're writing deterministic code that needs to sync data into your own database.

But LLMs don't need unified schemas. They're reasoning engines. They're surprisingly good at understanding vendor-specific data structures — Salesforce SOQL queries, Zendesk ticket custom fields, Jira JQL filters — provided they have accurate tool descriptions and schemas. What they hate is vague tools, missing parameter docs, and hidden pagination behavior.

When you force an LLM to use a unified API, you're actively degrading its capabilities. If a customer has a highly customized Jira instance with specific transition states and custom fields required for compliance, a unified ticketing schema drops those fields entirely. The LLM fails to update the ticket because it can't see the required custom parameters.

Truto's managed MCP servers bypass the unified layer entirely for AI workloads. We expose the Proxy API — the raw, normalized connection to the vendor's actual endpoints. We take the raw vendor schemas and automatically inject LLM-optimized semantic descriptions. The LLM sees the exact shape of the vendor's API, complete with every custom field and niche operation, formatted to minimize hallucinations.

Truto still offers Unified APIs for programmatic use cases where you want a normalized data model. But for agentic workflows, the raw proxy API with semantic annotations gives the LLM far more to work with. Read more about why schema normalization is the hardest problem in SaaS integrations.

How Truto's Dynamic Tool Generation Works Under the Hood

Most MCP server implementations hard-code tool definitions. Truto doesn't. Tools are generated dynamically at request time based on two data sources:

- Resource definitions — A structured configuration defining what API endpoints exist for each integration (e.g., Slack has channels, messages, files, users)

- Documentation records — Human-readable descriptions and JSON Schema definitions for each resource method
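Joining those two data sources can be sketched roughly as follows. This is an illustration, not Truto's actual implementation: the type names, field names, and the `method_integration_resource` naming scheme are assumptions, and the one rule it encodes is that a method without a documentation record produces no tool.

```typescript
interface ResourceMethod {
  resource: string; // e.g. "messages"
  method: string; // e.g. "list"
}

interface DocumentationRecord {
  resource: string;
  method: string;
  description: string;
  inputSchema: Record<string, unknown>;
}

interface McpTool {
  name: string;
  description: string;
  inputSchema: Record<string, unknown>;
}

// Build the tool list at request time from resource definitions and docs.
function generateTools(
  integration: string,
  methods: ResourceMethod[],
  docs: DocumentationRecord[],
): McpTool[] {
  const docsByKey = new Map<string, DocumentationRecord>();
  for (const doc of docs) {
    docsByKey.set(`${doc.resource}.${doc.method}`, doc);
  }
  const tools: McpTool[] = [];
  for (const m of methods) {
    const doc = docsByKey.get(`${m.resource}.${m.method}`);
    if (doc === undefined) continue; // undocumented methods are not exposed
    tools.push({
      name: `${m.method}_${integration}_${m.resource}`,
      description: doc.description,
      inputSchema: doc.inputSchema,
    });
  }
  return tools;
}

const tools = generateTools(
  "slack",
  [
    { resource: "messages", method: "list" },
    { resource: "files", method: "list" }, // no documentation record below
  ],
  [
    {
      resource: "messages",
      method: "list",
      description: "List messages in a channel.",
      inputSchema: { type: "object", properties: { channel_id: { type: "string" } } },
    },
  ],
);
console.log(tools.map((t) => t.name)); // [ 'list_slack_messages' ]
```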

Automatic Schema Enhancements

Raw API schemas often lack the context LLMs need. Truto intercepts them during generation and adds clear descriptions to parameters, responses, and resources so the AI understands what each field means and how to use it.

Handling pagination is notoriously difficult for LLMs. When Truto generates a tool for a list method, it automatically injects limit and next_cursor properties into the query schema. Crucially, it injects explicit prompt engineering directly into the schema description:

```json
{
  "properties": {
    "next_cursor": {
      "type": "string",
      "description": "The cursor to fetch the next set of records. Always send back exactly the cursor value you received (nextCursor) without decoding, modifying, or parsing it. This can be found in the response of the previous tool invocation as nextCursor."
    }
  }
}
```

This semantic injection tells the LLM exactly how to pass pagination tokens back, which discourages models from trying to "helpfully" decode or modify opaque cursor values.
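If you're driving the tool from your own agent loop rather than from Claude, the same rule applies to your code. Here's a minimal sketch, assuming a response shape with a `result` array and a `nextCursor` field. Only `nextCursor` and `next_cursor` come from the schema above; `callTool`, `listAll`, and the page cap are hypothetical.

```typescript
interface ListResponse<T> {
  result: T[];
  nextCursor?: string | null;
}

type ToolArgs = Record<string, unknown>;

// Build the arguments for the next page, or null when there is no next page.
// The cursor is copied verbatim: no decoding, trimming, or re-encoding.
function nextPageArgs(
  previous: ToolArgs,
  response: { nextCursor?: string | null },
): ToolArgs | null {
  if (!response.nextCursor) return null;
  return { ...previous, next_cursor: response.nextCursor };
}

// Page through a list tool with a hard cap, so a misbehaving cursor
// cannot turn into an endless loop.
async function listAll<T>(
  callTool: (args: ToolArgs) => Promise<ListResponse<T>>,
  firstArgs: ToolArgs,
  maxPages: number = 20,
): Promise<T[]> {
  const items: T[] = [];
  let args: ToolArgs | null = firstArgs;
  for (let page = 0; args !== null && page < maxPages; page++) {
    const response = await callTool(args);
    items.push(...response.result);
    args = nextPageArgs(args, response);
  }
  return items;
}
```

The page cap matters as much as the cursor handling. It's the cheapest guard against the infinite-loop failure mode.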

Flat Input Namespace Resolution

MCP delivers all arguments from the LLM as a single, flat JSON object. REST APIs, by contrast, require parameters to be strictly separated into query strings, path variables, and request bodies.

When Claude invokes a tool via tools/call, Truto's routing layer intercepts the flat argument object, loads the dynamically generated query and body schemas for that specific tool, and maps the flat arguments into their correct HTTP transport locations. The HTTP mechanics are completely abstracted away from the LLM.
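A simplified sketch of that splitting step is below. It's illustrative only: the `ToolRoute` shape, the `{name}` path-template syntax, and the choice to drop unknown keys are assumptions, not a description of Truto's router.

```typescript
interface JsonSchema {
  properties?: Record<string, unknown>;
}

interface ToolRoute {
  method: "GET" | "POST" | "PUT" | "PATCH" | "DELETE";
  pathTemplate: string; // e.g. "/messages/{channel_id}"
  querySchema: JsonSchema;
  bodySchema: JsonSchema;
}

interface HttpRequestParts {
  path: string;
  query: Record<string, unknown>;
  body: Record<string, unknown>;
}

// Map a flat MCP argument object onto path, query, and body locations.
function splitArguments(route: ToolRoute, args: Record<string, unknown>): HttpRequestParts {
  const query: Record<string, unknown> = {};
  const body: Record<string, unknown> = {};
  const queryProps = route.querySchema.properties || {};
  const bodyProps = route.bodySchema.properties || {};
  let path = route.pathTemplate;

  for (const key of Object.keys(args)) {
    const placeholder = `{${key}}`;
    if (route.pathTemplate.indexOf(placeholder) !== -1) {
      // Path variables are URL-encoded before substitution.
      path = path.replace(placeholder, encodeURIComponent(String(args[key])));
    } else if (key in queryProps) {
      query[key] = args[key];
    } else if (key in bodyProps) {
      body[key] = args[key];
    }
    // Keys that match no schema are dropped rather than forwarded.
  }
  return { path, query, body };
}

const parts = splitArguments(
  {
    method: "GET",
    pathTemplate: "/messages/{channel_id}",
    querySchema: { properties: { limit: {}, next_cursor: {} } },
    bodySchema: {},
  },
  { channel_id: "C123", limit: 50 },
);
console.log(JSON.stringify(parts));
// {"path":"/messages/C123","query":{"limit":50},"body":{}}
```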

```mermaid
sequenceDiagram
    participant LLM as Claude (MCP Client)
    participant Router as Truto MCP Router
    participant Proxy as Truto Proxy API
    participant Vendor as SaaS API (e.g., Slack)
    LLM->>Router: tools/call { name: "list_slack_messages", arguments: { channel_id: "C123", limit: 50 } }
    Router->>Router: Split flat arguments using JSON schemas
    Router->>Proxy: Execute GET /messages?limit=50<br>Path: /C123
    Proxy->>Vendor: Authenticated HTTP Request
    Vendor-->>Proxy: Raw API Response + Pagination Cursor
    Proxy-->>Router: Standardized JSON Result
    Router-->>LLM: MCP JSON-RPC Response
```

This architecture means adding support for a new endpoint doesn't require code changes — just a documentation record. And if you need to customize tool descriptions for your environment, per-environment documentation overrides let you do that without affecting other tenants.

Securing Your Enterprise MCP Deployments

Given the security landscape of MCP servers, enterprise teams need more than "put an API key on it." If your MCP security model is "it's just a URL," you don't have a security model. Truto's MCP servers are designed with multiple layers of defense.

Cryptographic Token Isolation

Each MCP server is scoped to a single integrated account. When you generate an MCP server, Truto creates a random hex string. This raw string is never stored in the primary database. Instead, it's hashed using an HMAC signing key before being stored in a globally distributed KV store. The URL you provide to Claude contains the raw token, which is hashed at the edge during the request to validate authorization. Even a total database compromise would not expose your active MCP server tokens.
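The hash-then-store pattern described above looks roughly like this. Treat it as a sketch under assumptions: the text only says the token is HMAC-hashed, so SHA-256, the 32-byte token length, and the in-memory `Map` standing in for the KV store are illustrative choices.

```typescript
import { createHmac, randomBytes } from "crypto";

// HMAC-SHA256 is an assumption; any keyed hash follows the same pattern.
function hashToken(rawToken: string, signingKey: string): string {
  return createHmac("sha256", signingKey).update(rawToken).digest("hex");
}

// Create a server token. Only the hash is written to the store; the raw
// token is returned once so it can be embedded in the MCP URL.
function issueServerToken(
  signingKey: string,
  kv: Map<string, string>,
  serverId: string,
): string {
  const rawToken = randomBytes(32).toString("hex");
  kv.set(hashToken(rawToken, signingKey), serverId);
  return rawToken;
}

// Resolve an incoming token to a server ID, or undefined if it is unknown.
function resolveServer(
  rawToken: string,
  signingKey: string,
  kv: Map<string, string>,
): string | undefined {
  return kv.get(hashToken(rawToken, signingKey));
}

const kv = new Map<string, string>(); // stand-in for the distributed KV store
const token = issueServerToken("demo-signing-key", kv, "server_123");
console.log(resolveServer(token, "demo-signing-key", kv)); // server_123
console.log(resolveServer("not-the-token", "demo-signing-key", kv)); // undefined
console.log(kv.has(token)); // false: the raw token is never stored
```

Because the store only ever holds hashes, someone who dumps it still can't reconstruct a working URL without the raw token.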

Method and Tag Filtering

You can scope an MCP server to specific operation types or functional areas:

```typescript
// Read-only MCP server — AI can list and get, but never create/update/delete
{
  name: "Slack Read-Only",
  config: { methods: ["read"] }
}

// Support-only tools for a Zendesk integration
{
  name: "Support Tools",
  config: { tags: ["support"] }
}

// Combined: read-only access to CRM tools only
{
  name: "CRM Viewer",
  config: { methods: ["read"], tags: ["crm"] }
}
```

Method categories (read, write, custom) can be combined with resource tags. The system validates at creation time that the filter combination produces at least one tool — you can't accidentally create an MCP server with zero capabilities.
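To show how such a filter might be evaluated, here's a small sketch. The mapping of `list`/`get` to read and `create`/`update`/`delete` to write follows the comment in the first example above. Everything else (type names, the tag-overlap rule, the error message) is assumed for illustration.

```typescript
type MethodCategory = "read" | "write" | "custom";

interface ToolDefinition {
  name: string;
  method: string; // e.g. "list", "get", "create"
  tags: string[];
}

interface ServerFilter {
  methods?: MethodCategory[];
  tags?: string[];
}

function categoryOf(method: string): MethodCategory {
  if (method === "list" || method === "get") return "read";
  if (method === "create" || method === "update" || method === "delete") return "write";
  return "custom";
}

function overlaps(a: string[], b: string[]): boolean {
  return a.some((value) => b.indexOf(value) !== -1);
}

// Apply method and tag filters, refusing to produce an empty tool list.
function selectTools(all: ToolDefinition[], filter: ServerFilter): ToolDefinition[] {
  const { methods, tags } = filter;
  const selected = all.filter((tool) => {
    const methodOk = methods === undefined || methods.indexOf(categoryOf(tool.method)) !== -1;
    const tagOk = tags === undefined || overlaps(tool.tags, tags);
    return methodOk && tagOk;
  });
  if (selected.length === 0) {
    throw new Error("Filter matches no tools; refusing to create an empty MCP server");
  }
  return selected;
}

const catalog: ToolDefinition[] = [
  { name: "list_contacts", method: "list", tags: ["crm"] },
  { name: "create_contact", method: "create", tags: ["crm"] },
  { name: "list_tickets", method: "list", tags: ["support"] },
];
const viewer = selectTools(catalog, { methods: ["read"], tags: ["crm"] });
console.log(viewer.map((t) => t.name)); // [ 'list_contacts' ]
```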

For your first rollout, keep the server read-only, require human approval for tool use, and log every tool call. Let your product team earn write access later.

Time-Limited Access with TTL Expiration

One of the biggest risks with custom MCP servers is stale access. A developer spins up a server for a quick test, forgets about it, and leaves an active bridge to production data sitting on their local machine. Hard-coded credentials and long-lived tokens stored in config files are how incident reviews get written.

Truto solves this through automated Time-To-Live (TTL) expirations:

```typescript
{
  name: "Contractor Access",
  config: { methods: ["read"] },
  expires_at: "2026-04-15T00:00:00Z" // Auto-expires in 30 days
}
```

Expiration is enforced at the infrastructure level. The token's entry in the distributed KV store is configured with a native expiration timestamp, ensuring lookups fail instantly once the time passes. Simultaneously, a scheduled cleanup process permanently sweeps and deletes the server configuration from the primary database. No dangling access, no manual cleanup required.
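If you're generating `expires_at` values programmatically, the date math is simple enough to sketch. Both helpers below are hypothetical conveniences. The only thing taken from the example above is that `expires_at` is an ISO 8601 UTC timestamp.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Produce an ISO 8601 UTC timestamp a given number of days after `now`.
function expiresAtFromNow(days: number, now: Date = new Date()): string {
  return new Date(now.getTime() + days * MS_PER_DAY).toISOString();
}

// Client-side check mirroring what the server enforces: a server with no
// expires_at never expires; otherwise it expires at or after the timestamp.
function isExpired(expiresAt: string | undefined, now: Date = new Date()): boolean {
  if (expiresAt === undefined) return false;
  const expiry = Date.parse(expiresAt);
  if (Number.isNaN(expiry)) {
    throw new RangeError("expires_at must be an ISO 8601 timestamp");
  }
  return now.getTime() >= expiry;
}

const created = new Date("2026-03-16T00:00:00Z");
console.log(expiresAtFromNow(30, created)); // 2026-04-15T00:00:00.000Z
console.log(isExpired("2026-04-15T00:00:00Z", new Date("2026-04-14T23:59:59Z"))); // false
console.log(isExpired("2026-04-15T00:00:00Z", new Date("2026-04-15T00:00:00Z"))); // true
```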

Optional Dual Authentication

By default, the unique MCP URL is sufficient to interact with the server — convenient for local testing with Claude Desktop. For production deployments where the MCP URL might be visible in internal configuration files or logs, Truto supports conditional API token authentication:

```typescript
{
  name: "High-Security CRM Access",
  config: {
    methods: ["read", "write"],
    require_api_token_auth: true // URL alone isn't enough
  }
}
```

With this enabled, the MCP client must also provide a valid Truto API token in the Authorization header. Possession of the URL alone doesn't grant access — a critical second layer for any shared or production environment.
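The decision logic behind that flag can be sketched in a few lines. This is an assumption-heavy illustration: the `Bearer` scheme, the `isValidApiToken` callback, and the function shape are all hypothetical. Only `require_api_token_auth` comes from the config above.

```typescript
interface ServerAuthConfig {
  require_api_token_auth?: boolean;
}

// Returns true only when every required credential checks out.
function isAuthorized(
  urlTokenValid: boolean,
  config: ServerAuthConfig,
  authorizationHeader: string | undefined,
  isValidApiToken: (token: string) => boolean,
): boolean {
  if (!urlTokenValid) return false; // the URL token is always required
  if (!config.require_api_token_auth) return true; // URL-only mode
  // Second factor: an API token in the Authorization header (Bearer assumed).
  const match = /^Bearer\s+(\S+)$/i.exec(authorizationHeader ?? "");
  return match !== null && isValidApiToken(match[1]);
}

const knownTokens = ["truto_api_token_example"]; // placeholder value
const check = (token: string) => knownTokens.indexOf(token) !== -1;

console.log(isAuthorized(true, {}, undefined, check)); // true
console.log(isAuthorized(true, { require_api_token_auth: true }, undefined, check)); // false
console.log(
  isAuthorized(true, { require_api_token_auth: true }, "Bearer truto_api_token_example", check),
); // true
```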

Stop Building Integration Infrastructure

The AI ecosystem is moving too fast to waste engineering cycles building OAuth handlers, reading vendor pagination docs, and patching command injection vulnerabilities in custom MCP servers.

If you only need common tasks in one or two apps, stick with the native connector — it's the fastest path. If you need full vendor coverage across Slack, CRM, HRIS, ticketing, accounting, and the long tail of customer-specific SaaS, managed MCP servers are the better bet. Especially when the alternative involves personally auditing every tool implementation for the OWASP MCP Top 10 vulnerability categories.

Start small. Connect Slack. Ship a read-only Claude custom connector. Watch the logs. Tighten the tool list. Then expand to the next app your customers actually use — not the next app that makes for a flashy demo.

Frequently Asked Questions

- What is a managed MCP server?

- A managed MCP server is a hosted service that implements the Model Context Protocol on your behalf, handling OAuth, pagination, rate limiting, tool schema generation, and security so you can connect AI agents to SaaS apps without building or maintaining custom server infrastructure.

- How do I connect Claude to Slack using MCP?

- Connect your Slack account via Truto's OAuth flow, create an MCP server in the Truto dashboard to get a remote MCP URL, then add that URL as a custom connector in Claude's settings (or via the Claude Desktop config file, or programmatically via the Anthropic API). Claude instantly discovers all available Slack tools.

- Are MCP servers secure for enterprise use?

- Self-hosted MCP servers carry real risks — Equixly found 43% of tested implementations had command injection vulnerabilities. Managed MCP servers like Truto's mitigate this with HMAC-hashed cryptographic tokens, method and tag filtering, automatic TTL expiration, and optional dual authentication.

- What is the difference between native Claude connectors and custom MCP connectors?

- Native connectors are pre-built by Anthropic and expose a limited subset of an app's API — typically a handful of common endpoints. Custom MCP connectors can expose the entire API surface, including niche endpoints, custom fields, and vendor-specific operations that native connectors don't cover.

- How does Truto generate MCP tools without writing code?

- Truto dynamically generates MCP tools at request time by combining integration resource definitions with curated documentation records containing descriptions and JSON schemas. A tool only appears if it has a reviewed documentation entry, acting as a quality gate. Adding a new endpoint requires only a documentation record — no code changes.