How to Architect a Multi-Tenant MCP Server for Enterprise B2B SaaS

Architect a multi-tenant MCP server for enterprise B2B SaaS with patterns for Oracle NetSuite, SAP, cryptographic URL scoping, and OAuth management.

Orchestrating an AI agent on your laptop is deceptively easy. You define a persona, hand it a few Python functions, paste a vendor API key into a .env file, and watch the agent reason through tasks. But deploying that same agent into a production B2B SaaS environment exposes a massive architectural gap.

If you've shipped a local Model Context Protocol (MCP) server with credentials baked into environment variables and now need to scale it to thousands of B2B customers, the gap between those two states is brutal. A multi-tenant MCP server is one where a single deployed service securely brokers AI agent access to thousands of customer accounts, each with their own OAuth tokens, scopes, and tool permissions, without ever leaking credentials between tenants or to the LLM itself.

The framework handles the agentic reasoning—whether you're using CrewAI, AutoGen, or LangGraph, as covered in our guide to multi-agent frameworks—but it does not solve the enterprise integration problem. When your AI agent needs to act on behalf of your users inside external systems—reading Jira tickets, updating Salesforce opportunities, or pulling BambooHR employee records—you suddenly have to manage multi-tenant OAuth 2.0 lifecycles, handle vendor-specific rate limits, and ensure strict isolation between what different agents are allowed to access.

This guide walks through the architectural patterns that actually hold up in production, drawing on what's been learned the hard way across the industry. We will cover cryptographically scoped URLs, abstracted OAuth token management, dynamic tool generation, and enforcing least privilege at the server level. We'll also show how these patterns apply to the hardest ERP integration targets - Oracle NetSuite and SAP - with concrete tool definitions, authentication migration guidance, and rate-limit orchestration strategies. The goal: a setup that survives both an enterprise security review and a Tuesday morning at 10x traffic.

The Multi-Tenant MCP Server Bottleneck

Most MCP demos use the stdio transport with credentials read at boot time. As the MCP specification notes: No explicit authentication takes place between the MCP client and the MCP server in that flow, and the authorization process for services behind the MCP server is managed by passing environment variables.

That works for a developer's laptop. It collapses the moment your CRM agent needs to act on behalf of 4,000 distinct Salesforce instances. The core problem is that the MCP server is no longer a tool—it is a multi-tenant gateway. Each request needs to be routed to the correct customer's credentials, scoped to that customer's permissions, and isolated from every other tenant's data.

The MCP specification deliberately leaves this to you: The exact mechanism for authentication and authorization for requests made by an MCP server is outside the scope of the MCP specification, but you need one.

This is the difference between an integration demo and an integration platform. If you want context on the broader category, our 2026 architecture guide for SaaS PMs is a good primer. The rest of this post is about what the demo doesn't show you.

The Security Reality of Enterprise AI Agents

The stakes for getting this architecture right are high. According to Gartner, by the end of 2026, 40% of enterprise applications will feature task-specific AI agents, up from less than 5% in 2025. Yet the infrastructure to secure these agents is lagging. A 2026 Gravitee survey found that only 24.4% of organizations have full visibility into which AI agents are communicating with each other or external systems.

Every one of those agents is going to need authenticated access to SaaS systems on behalf of end users, and most engineering teams are still figuring out how to do that without committing security malpractice.

The failure mode you most want to avoid is passing OAuth tokens or API credentials directly through the AI model's context window. Security experts at Kiteworks explicitly warn against this pattern. The model has no business seeing them, and just as with SaaS data PII redaction, tool-use logs, prompt caches, and agent traces become a credential leakage surface the moment you do. Passing credentials through the AI context exposes them to prompt injection attacks, where a malicious input could trick the model into leaking the token or performing unauthorized destructive actions.

The June 2025 MCP specification addresses this directly: When MCP servers need to call upstream APIs, they must act as OAuth clients to those services and obtain separate tokens. Never pass through the token received from the MCP client, as this creates confused deputy vulnerabilities where downstream services may incorrectly trust tokens not intended for them.

Which means your architecture has to satisfy three properties at once:

- Tenant isolation: A token issued to Customer A's MCP server can never be used to read Customer B's data.

- Credential opacity: The AI model and the MCP client never see raw API tokens, refresh tokens, or vendor secrets.

- Scope enforcement: Tools available through the server are constrained to what the customer (and their plan) authorized.

To build a secure system, the MCP server must act as an impenetrable boundary, ideally employing a zero data retention architecture. The AI model requests an action, and the server independently resolves the authentication context, enforces permissions, and executes the request.

Core Architecture: Cryptographically Scoped Server URLs

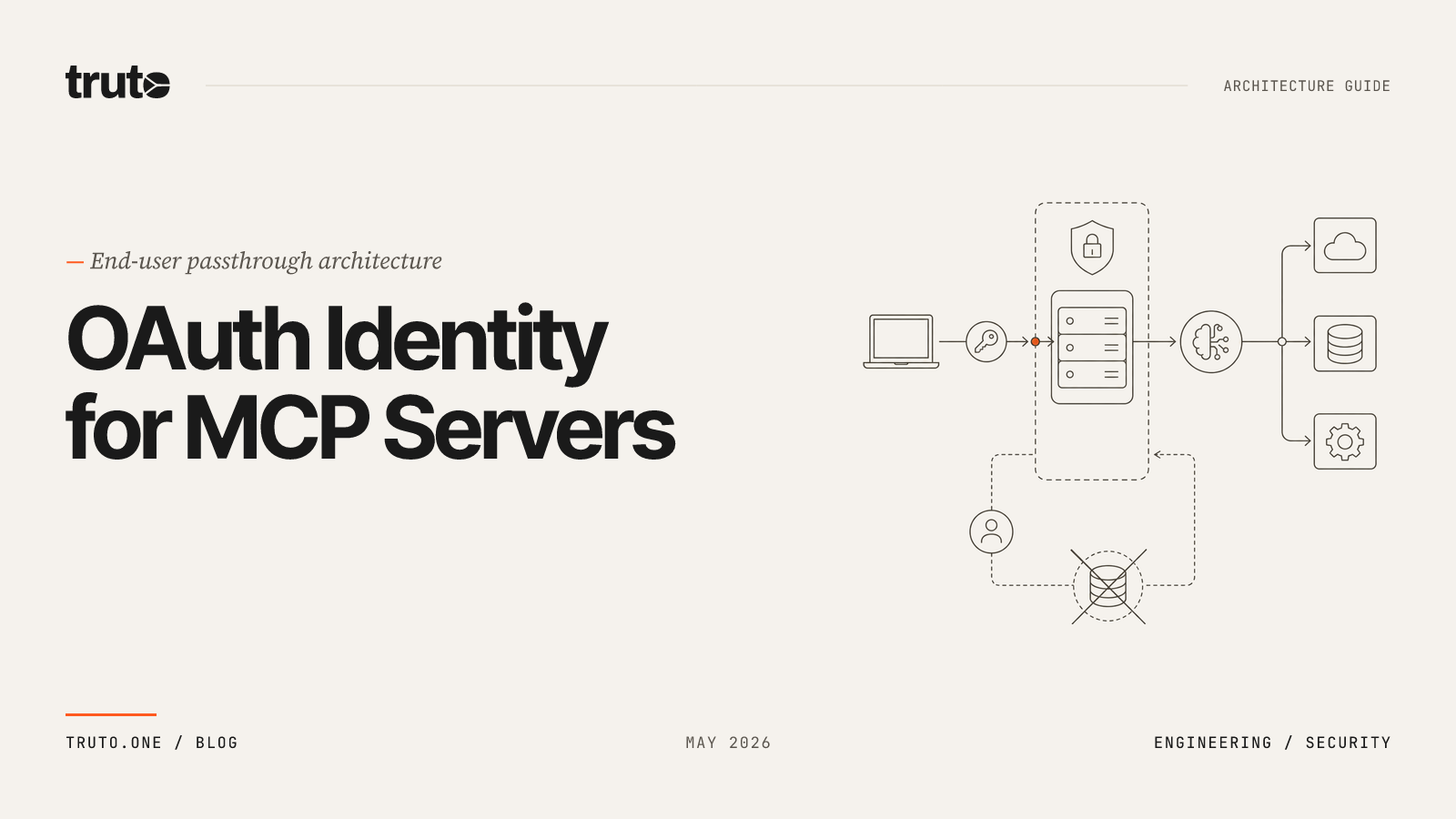

A cryptographically scoped MCP URL is an endpoint that uses a securely hashed token within the URL path to authenticate the client, identify the specific tenant, and define the exact set of allowed tools, completely removing the need for the AI model to handle underlying API credentials.

The cleanest tenant isolation primitive for remote MCP is to make the server URL itself the security boundary. In a multi-tenant environment, you cannot use a single global MCP server. If Tenant A and Tenant B both connect to https://api.example.com/mcp, the server has no reliable way to know which tenant's Salesforce instance to query unless the AI model explicitly passes a tenant ID—which is a severe security risk.

Instead, the architecture must generate unique, self-contained MCP servers for every connected account. When a user connects a third-party integration, the platform mints a unique URL per integrated account:

https://api.example.com/mcp/<random-hex-token>

Here is how the generation pipeline works:

- The system creates a database record linking the new server to the specific tenant and integration.

- A random hexadecimal string is generated server-side to act as the public token.

- Critically, the raw token is never stored. It is hashed with HMAC using a server-side signing key.

- The hashed token is stored in a fast, distributed Key-Value (KV) store, mapping it to the tenant's context and integrated account.

- The system returns the raw URL to the client:

https://api.example.com/mcp/<raw_hex_token>

When a request hits /mcp/:token, the gateway hashes the token, looks up the hashed value, and resolves it to the tenant + integrated account it represents.

```mermaid
sequenceDiagram
    participant Client as MCP Client<br>(Claude / ChatGPT)
    participant Edge as MCP Gateway
    participant KV as Token Store<br>(hashed lookup)
    participant Vault as Credential Store
    participant API as Third-Party API
    Client->>Edge: POST /mcp/{raw_token}<br>JSON-RPC tools/call
    Edge->>Edge: HMAC(raw_token, signing_key)
    Edge->>KV: GET hashed_token
    KV-->>Edge: {account_id, tenant_id, expires_at}
    Edge->>Vault: load credentials for account
    Vault-->>Edge: decrypted access_token
    Edge->>API: authenticated upstream call
    API-->>Edge: response
    Edge-->>Client: JSON-RPC result
```

Three properties fall out of this design:

- Stolen KV data is useless. If the lookup store leaks, attackers get HMAC digests they can't reverse. The raw token is only ever returned once—in the response that creates the server.

- No session sprawl. The URL is the session. There's no separate "login" step on the MCP client side.

- Cheap revocation. Deleting the KV entry kills the server instantly. No token blacklist, no propagation delay.

For higher-trust deployments, you can layer a second factor: require the MCP client to also send a Bearer token (your platform's API token) in the Authorization header on top of the URL token. That covers the case where the URL might leak through screenshots, logs, or config files.

Do not use predictable URLs (UUIDs derived from the account ID, sequential IDs, or anything timestamp-based). The token should be high-entropy random bytes. Treat the URL like a bearer credential because that's exactly what it is.
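The minting, lookup, and revocation steps in this section can be sketched in a few functions. This is a minimal illustration under stated assumptions, not a reference implementation: the `ServerRecord` shape, the function names, and the in-memory `Map` standing in for the distributed KV store are all hypothetical, and the signing key would come from a secrets manager rather than a function argument.

```typescript
import { createHmac, randomBytes } from "node:crypto";

// Hypothetical record shape; the fields mirror the sequence diagram above.
interface ServerRecord {
  accountId: string;
  tenantId: string;
  expiresAt?: number; // epoch milliseconds; undefined means no expiry
}

// In-memory stand-in for the distributed KV store (assumption for this sketch).
const tokenStore = new Map<string, ServerRecord>();

// Keyed hash of the raw token. Only this digest is ever persisted.
function hashToken(rawToken: string, signingKey: string): string {
  return createHmac("sha256", signingKey).update(rawToken).digest("hex");
}

// Mint a server URL from 32 random bytes (64 hex characters).
function mintServerUrl(record: ServerRecord, signingKey: string): string {
  const rawToken = randomBytes(32).toString("hex");
  tokenStore.set(hashToken(rawToken, signingKey), record);
  return `https://api.example.com/mcp/${rawToken}`; // returned once, never stored
}

// Resolve an incoming token to its tenant context, or null if unknown or expired.
function resolveToken(
  rawToken: string,
  signingKey: string,
  now: number = Date.now(),
): ServerRecord | null {
  const key = hashToken(rawToken, signingKey);
  const record = tokenStore.get(key);
  if (!record) return null;
  if (record.expiresAt !== undefined && record.expiresAt <= now) {
    tokenStore.delete(key); // lazy cleanup of expired servers
    return null;
  }
  return record;
}

// Revocation is a single delete on the hashed key.
function revokeToken(rawToken: string, signingKey: string): boolean {
  return tokenStore.delete(hashToken(rawToken, signingKey));
}
```

Because the store is keyed by the digest, a dump of `tokenStore` contains nothing that can be pasted into a URL, which is the "stolen KV data is useless" property in practice.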

Abstracting OAuth Token Management and Proactive Refresh

Handling OAuth 2.0 lifecycles is notoriously difficult. Once a request is routed to the right tenant, the next problem is keeping that tenant's upstream credentials alive. Most B2B SaaS APIs hand out access tokens with 30-60 minute lifetimes. If your AI agent tries to call a tool and the token is expired, the request fails. You cannot expect an LLM to handle a 401 Unauthorized error, parse the invalid_grant response, execute a token refresh flow, and retry the request.

The pattern that scales: treat OAuth lifecycle as platform infrastructure, completely abstracted from the MCP layer. The platform must ensure that whenever the MCP server receives a tool call, the underlying credentials are valid. Read our deep dive on OAuth at Scale: The Architecture of Reliable Token Refreshes for a complete breakdown, but practically, this means:

1. Encrypted Credential Storage at Rest

Access tokens, refresh tokens, client secrets, and any other sensitive context fields get encrypted before they hit your database. They're decrypted only inside the request path that actually needs to call the upstream API.

2. Concurrency Control with Distributed Locks

Because AI agents often execute multiple tools in parallel, you will encounter severe race conditions. If an agent calls five tools simultaneously and the token is expired, all five requests will attempt to use the refresh token at the exact same time. The first request succeeds, but the integration provider immediately invalidates the refresh token. The other four requests fail, and your integration is now completely disconnected.

You need a per-account distributed lock (a mutex) around the refresh operation. When the first request detects an expired token, it acquires the lock. The other concurrent requests hit the lock and await the result. Once the first request finishes refreshing the token and updates the database, the lock releases, and the waiting requests proceed using the newly minted access token.
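The lock-and-wait behaviour can be sketched as a single-flight wrapper. This is an in-process illustration only: the `inFlight` map is a stand-in for a real distributed lock, and `TokenSet`, `refreshOnce`, and the caller-supplied `doRefresh` function are hypothetical names rather than any particular library's API.

```typescript
// Hypothetical token shape for this sketch.
interface TokenSet {
  accessToken: string;
  expiresAt: number; // epoch milliseconds
}

// One in-flight refresh promise per account. Across multiple nodes this map
// would be replaced by a distributed lock; the control flow stays the same.
const inFlight = new Map<string, Promise<TokenSet>>();

async function refreshOnce(
  accountId: string,
  doRefresh: () => Promise<TokenSet>, // caller-supplied OAuth exchange (assumed)
): Promise<TokenSet> {
  const pending = inFlight.get(accountId);
  if (pending) return pending; // another request holds the lock: await its result

  // First caller: start the refresh and release the slot when it settles.
  const attempt = doRefresh().finally(() => inFlight.delete(accountId));
  inFlight.set(accountId, attempt);
  return attempt;
}
```

Five parallel tool calls for the same account therefore trigger one refresh-token exchange, and all five continue with the same new access token. If the exchange fails, every waiter sees the same rejection, which is the natural point to flip the account into a re-auth state.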

3. Proactive Scheduled Alarms

Relying solely on on-demand refreshes increases latency for the end user, as the AI agent has to wait for the HTTP round-trip of the OAuth exchange. Don't wait for a 401 from the upstream API. Schedule a background worker or durable alarm to proactively refresh tokens 60 to 180 seconds before they expire. Combine that with a just-in-time check (with a 30-second safety buffer) on every API call as a fallback. By the time the AI agent makes a request, the token is already fresh.
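The two timing rules reduce to simple arithmetic on the token's expiry. A minimal sketch, assuming expiry is tracked as epoch milliseconds; the constant and function names are illustrative, and the 120-second lead is just one value inside the 60 to 180 second window mentioned above.

```typescript
const PROACTIVE_LEAD_MS = 120_000; // refresh this long before expiry
const JIT_BUFFER_MS = 30_000; // safety buffer for the per-call check

// When the background alarm should fire for a token expiring at `expiresAt`.
// Never schedules in the past: an almost-expired token is refreshed right away.
function nextRefreshAt(expiresAt: number, now: number, leadMs: number = PROACTIVE_LEAD_MS): number {
  return Math.max(now, expiresAt - leadMs);
}

// Just-in-time fallback, evaluated on every upstream call.
function needsRefreshNow(expiresAt: number, now: number, bufferMs: number = JIT_BUFFER_MS): boolean {
  return expiresAt - now <= bufferMs;
}
```

For a token with a one-hour lifetime, the alarm fires 58 minutes after issue, so the just-in-time check should only trip when the alarm was missed or delayed.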

4. Failure-Mode Webhooks

When a refresh ultimately fails (revoked grant, expired refresh token, account disabled), flip the integrated account into a needs_reauth state and notify the customer's app via webhook. Don't silently 500 every agent request after that.

Dynamic Tool Generation vs. Hardcoded Endpoints

If your engineering team is writing custom handler functions for every MCP tool, you are building legacy technical debt. You write list_hubspot_contacts, then list_salesforce_contacts, then list_pipedrive_contacts, and a year later you have 4,000 lines of near-identical TypeScript that all break differently when a vendor rotates their pagination format.

Dynamic tool generation is the architectural pattern of deriving MCP tool definitions directly from an integration's API documentation and JSON schemas at runtime, eliminating the need to hand-code individual API connectors.

The scalable approach is to generate tools as data, from two sources you already maintain:

- Resource configuration: which endpoints exist, what HTTP methods they accept, which path placeholders they need.

- Documentation records: human-readable descriptions plus JSON Schema for query parameters and request bodies.

When an MCP client sends a tools/list request, the server dynamically constructs the available tools by intersecting the integration's defined endpoints with the available documentation records. If an endpoint lacks documentation, it is excluded from the MCP server. This acts as a strict quality gate, ensuring AI models only see endpoints with clear instructions.
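The intersection step might look like the following. It is a sketch under assumptions: the `Endpoint`, `DocRecord`, and `ToolDefinition` shapes are hypothetical simplifications of the two data sources, and the naming function is passed in rather than fixed.

```typescript
// Hypothetical shapes for the two data sources named above.
interface Endpoint {
  resource: string;
  method: string;
}
interface DocRecord {
  resource: string;
  method: string;
  description: string;
  querySchema?: { properties?: Record<string, unknown> };
  bodySchema?: { properties?: Record<string, unknown> };
}
interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: { type: "object"; properties: Record<string, unknown> };
}

function buildToolList(
  endpoints: Endpoint[],
  docs: DocRecord[],
  nameFor: (resource: string, method: string) => string,
): ToolDefinition[] {
  const docsByKey = new Map<string, DocRecord>();
  for (const doc of docs) docsByKey.set(`${doc.resource}:${doc.method}`, doc);

  const tools: ToolDefinition[] = [];
  for (const { resource, method } of endpoints) {
    const doc = docsByKey.get(`${resource}:${method}`);
    if (!doc) continue; // quality gate: undocumented endpoints are never exposed
    tools.push({
      name: nameFor(resource, method),
      description: doc.description,
      inputSchema: {
        type: "object",
        // MCP arguments are flat, so query and body properties are merged here.
        properties: { ...doc.querySchema?.properties, ...doc.bodySchema?.properties },
      },
    });
  }
  return tools;
}
```

Because the list is rebuilt on each tools/list request, adding a documentation record is enough to expose a new tool, with no handler code to deploy.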

Tool names are derived consistently:

```typescript
// Naive illustrative helpers; a production version would use a proper inflection library.
const snakeCase = (s: string): string =>
  s.trim().toLowerCase().replace(/[^a-z0-9]+/g, '_').replace(/^_+|_+$/g, '')
const singular = (s: string): string => s.replace(/ies$/, 'y').replace(/s$/, '')

// Derive a predictable tool name from the integration, resource, and method.
function toolName(integration: string, resource: string, method: string): string {
  switch (method) {
    case 'list': return snakeCase(`list all ${integration} ${resource}`)
    case 'get': return snakeCase(`get single ${integration} ${singular(resource)} by id`)
    case 'create': return snakeCase(`create a ${integration} ${singular(resource)}`)
    case 'update': return snakeCase(`update a ${integration} ${singular(resource)} by id`)
    case 'delete': return snakeCase(`delete a ${integration} ${singular(resource)} by id`)
    default: return snakeCase(`${integration} ${resource} ${method}`)
  }
}
```

Schemas are injected with context-aware instructions. For list operations, you inject limit and next_cursor properties into the query schema automatically, with descriptions that explicitly tell the LLM how to handle pagination cursors ("Pass back exactly the cursor value you received, do not decode or modify"). For individual record operations (get, update, delete), you inject an id property. This is the boring infrastructure work that decides whether agents actually paginate correctly or hallucinate cursor values.
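The injection rules described above can be sketched as a pure function over the query schema. The `ObjectSchema` type and function name are hypothetical, and marking `id` as required is an assumption of this sketch rather than something the rules above state.

```typescript
interface ObjectSchema {
  type: "object";
  properties: Record<string, unknown>;
  required?: string[];
}

function injectStandardProperties(method: string, schema: ObjectSchema): ObjectSchema {
  const properties: Record<string, unknown> = { ...schema.properties };
  const required = new Set<string>(schema.required ?? []);

  if (method === "list") {
    // Pagination controls, with a description that forbids cursor tampering.
    properties.limit = { type: "string", description: "The number of records to fetch" };
    properties.next_cursor = {
      type: "string",
      description:
        "The cursor to fetch the next set of records. Always send back exactly " +
        "the cursor value you received without decoding or modifying it.",
    };
  } else if (["get", "update", "delete"].includes(method)) {
    properties.id = { type: "string", description: "The id of the record to operate on" };
    required.add("id"); // assumption: the path parameter is mandatory
  }

  const result: ObjectSchema = { type: "object", properties };
  if (required.size > 0) result.required = Array.from(required);
  return result; // the input schema is left unmodified
}
```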

For more on this pattern, review our guide on Auto-Generated MCP Tools: Documentation-Driven Tool Creation for AI Agents (2026).

Enforcing Least Privilege: Method and Tag Filtering

AI models are unpredictable. If you expose a full CRUD (Create, Read, Update, Delete) API to an autonomous agent, it will eventually hallucinate a destructive action. You cannot rely on prompt engineering to prevent data loss. Security must be enforced at the infrastructure level.

A multi-tenant MCP server with full read/write access to every endpoint is a liability. The MCP authorization specification says that when authorization is required and not yet proven by the client, servers MUST respond with HTTP 401 Unauthorized, but that's only the outer perimeter. Inside the perimeter, multi-tenant MCP servers require granular, server-level filtering. Two orthogonal axes of filtering give you most of what you need:

Method-Level Filtering

The first axis restricts operations. Let customers create read-only servers, write-only servers, or specific-method servers. The implementation is a small predicate:

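```typescript
function isMethodAllowed(method: string, allowed?: string[]): boolean {
  // No restriction configured: every method is allowed.
  if (!allowed?.length) return true
  return allowed.some(rule => {
    switch (rule) {
      case 'read': return ['get', 'list'].includes(method)
      case 'write': return ['create', 'update', 'delete'].includes(method)
      // 'custom' covers anything outside the five CRUD verbs (search, download, ...).
      case 'custom': return !['get', 'list', 'create', 'update', 'delete'].includes(method)
      // Any other rule is treated as an exact method name.
      default: return method === rule
    }
  })
}
```

Tag-Based Resource Filtering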

Integrations contain dozens of resources. A CRM integration might have endpoints for contacts, deals, tickets, and internal user directories. You rarely want an AI agent to have access to all of them. By tagging resources in your integration configuration, you can scope MCP servers to specific functional areas:

```typescript
tool_tags: {
  contacts: ['crm', 'sales'],
  deals: ['crm', 'sales'],
  tickets: ['support'],
  ticket_comments: ['support'],
  users: ['directory']
}
```

A server created with { tags: ['support'] } only ever sees tools for tickets and ticket_comments. Combine with { methods: ['read'] } and you've got a strictly read-only support agent that can't touch your CRM data.

| Server profile | methods | tags | Example use case |
|---|---|---|---|
| Read-only support agent | ['read'] | ['support'] | Triage assistant |
| CRM data writer | ['write'] | ['crm'] | Lead enrichment agent |
| Full directory | ['read'] | ['directory'] | Org-chart Q&A bot |
| Custom searches only | ['custom'] | - | Search-augmented retrieval |

Validate filters at server-creation time. If the requested combination of methods and tags produces zero tools, reject the request with a clear error. This is also where short-lived servers shine. A contractor who needs MCP access for a week should get a server with expires_at set seven days out, after which the underlying token entries vanish on their own.
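Combining both filters with the creation-time check could look like this. A minimal sketch with hypothetical names (`Tool`, `ServerFilter`, `selectTools`); it assumes a resource matches when it carries at least one requested tag, and that untagged resources are excluded whenever a tag filter is present.

```typescript
interface Tool {
  name: string;
  resource: string;
  method: string;
}
interface ServerFilter {
  methods?: string[];
  tags?: string[];
}

const CRUD_METHODS = ["get", "list", "create", "update", "delete"];

function methodAllowed(method: string, allowed?: string[]): boolean {
  if (!allowed || allowed.length === 0) return true;
  return allowed.some((rule) => {
    if (rule === "read") return method === "get" || method === "list";
    if (rule === "write") return ["create", "update", "delete"].includes(method);
    if (rule === "custom") return !CRUD_METHODS.includes(method);
    return rule === method;
  });
}

function tagAllowed(resource: string, toolTags: Record<string, string[]>, tags?: string[]): boolean {
  if (!tags || tags.length === 0) return true;
  const resourceTags = toolTags[resource] ?? [];
  return resourceTags.some((tag) => tags.includes(tag));
}

// Apply both axes, and refuse to create a server that would expose nothing.
function selectTools(tools: Tool[], toolTags: Record<string, string[]>, filter: ServerFilter): Tool[] {
  const selected = tools.filter(
    (t) => methodAllowed(t.method, filter.methods) && tagAllowed(t.resource, toolTags, filter.tags),
  );
  if (selected.length === 0) {
    throw new Error("Filter combination matches no tools; refusing to create an empty MCP server");
  }
  return selected;
}
```

Failing at creation time gives the customer an actionable error immediately, instead of an agent that connects successfully and then finds an empty tool list.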

Handling Flat Input Namespaces in JSON-RPC

A subtle but frustrating technical reality of the Model Context Protocol is how it structures arguments. When an AI client invokes a tool via a tools/call message, all arguments are delivered as a single, flat JSON object.

REST APIs, however, do not use flat namespaces. They strictly separate query parameters from request bodies. If an AI agent wants to update a Salesforce contact and also paginate the response, it might send:

```json
{
  "contact_id": "12345",
  "first_name": "Alice",
  "limit": 50
}
```

The proxy API layer needs to know that first_name goes in the JSON body, limit goes in the URL query string, and contact_id belongs in the URL path. Your gateway has to disambiguate. The pragmatic approach is to use the tool's JSON schemas as the splitter:

```typescript
function splitArguments(
  args: Record<string, unknown>,
  querySchema?: { properties?: Record<string, unknown> },
  bodySchema?: { properties?: Record<string, unknown> }
) {
  const queryKeys = new Set(Object.keys(querySchema?.properties ?? {}))
  const bodyKeys = new Set(Object.keys(bodySchema?.properties ?? {}))
  const query: Record<string, unknown> = {}
  const body: Record<string, unknown> = {}

  // Route each argument by the schema that declares it; query wins on a name collision.
  // Arguments declared in neither schema are dropped.
  for (const [k, v] of Object.entries(args)) {
    if (queryKeys.has(k)) query[k] = v
    else if (bodyKeys.has(k)) body[k] = v
  }

  // For get/update/delete, lift `id` out of query into the URL path
  const { id, ...restQuery } = query
  return { query: restQuery, body, id }
}
```

A few real-world edge cases worth flagging:

- Name collisions. If a query schema and a body schema both define a `name` property, pick one as canonical (typically query) or throw a validation error depending on your strictness. Document the precedence so integration authors know.

- Cursor passthrough. LLMs love to "helpfully" decode opaque cursor strings. Your `next_cursor` description must explicitly forbid this. Most production-grade agents follow descriptions if they're firm enough.

- Custom methods. Things like `search`, `download`, or `import` don't fit CRUD shapes. Treat them as a separate dispatch path that takes the full args object and lets the underlying integration config decide what to do with it.

- Rate limit headers. Your gateway should pass upstream rate-limit signals to the caller, not absorb them. Surface standardized `ratelimit-limit`, `ratelimit-remaining`, and `ratelimit-reset` headers per the IETF spec and let the calling agent (or its host framework) decide how to back off. Trying to silently retry inside the MCP gateway just hides problems and burns through quotas faster.
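For the name-collision case, the strict option can be enforced when a tool is generated rather than at call time. A small sketch with hypothetical helper names:

```typescript
type PropertySchema = { properties?: Record<string, unknown> };

// Property names declared by both the query schema and the body schema.
function findCollisions(querySchema: PropertySchema, bodySchema: PropertySchema): string[] {
  const bodyKeys = new Set(Object.keys(bodySchema.properties ?? {}));
  return Object.keys(querySchema.properties ?? {}).filter((key) => bodyKeys.has(key));
}

// Strict mode: fail tool generation so the integration author resolves the clash.
function assertNoCollisions(toolName: string, querySchema: PropertySchema, bodySchema: PropertySchema): void {
  const collisions = findCollisions(querySchema, bodySchema);
  if (collisions.length > 0) {
    throw new Error(`${toolName}: ambiguous argument(s) in both query and body: ${collisions.join(", ")}`);
  }
}
```

A lenient deployment could log the result of `findCollisions` instead of throwing and fall back to query-first precedence.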

Connecting AI Agents to Oracle NetSuite and SAP

ERP systems are the last mile for enterprise AI agents. When your agent needs to check overdue invoices in NetSuite, create purchase orders in SAP, or pull employee records across both, you hit every architectural challenge described above - amplified by fragmented API surfaces, legacy authentication schemes, and concurrency-based rate limits that don't follow the standard HTTP 429 pattern.

For a detailed evaluation of NetSuite-specific options, see our guide to MCP servers for Oracle NetSuite. This section focuses on the multi-tenant architecture patterns that matter when you're connecting AI agents to these ERP systems at scale.

MCP Server Options for Enterprise ERPs

The right MCP server architecture depends on whether you're building internal tools for your own team or shipping a multi-tenant B2B SaaS product. Here's how the available options compare.

| Capability | NetSuite First-Party | SAP First-Party | CData Connect AI | Unified API Platform |
|---|---|---|---|---|
| Multi-tenant B2B SaaS | No - single account | No - single instance | Managed platform available | Yes - per-account MCP URLs |
| Auth model | OAuth 2.0 per account | SAP BTP OAuth 2.0 | JDBC connection string | Platform-managed OAuth lifecycle |
| NetSuite access | Native SuiteQL + REST | N/A | Via JDBC driver (SQL layer) | SuiteQL + REST + RESTlet |
| SAP access | N/A | Native HANA, BTP, S/4HANA | Via JDBC/OData drivers | OData + REST connectors |
| Tool generation | Fixed SuiteApp tools | Joule Studio custom agents | Auto-discovered from schema | Dynamic from docs + JSON schemas |
| Rate-limit handling | Respects account governance | BTP quotas | Connection-level | Platform-orchestrated queuing |
| Best for | Internal single-tenant use | SAP-native development | SQL/BI-oriented queries | Multi-tenant SaaS products |

NetSuite AI Connector Service: Oracle's first-party MCP server lets AI clients like Claude and ChatGPT connect to NetSuite data, with access governed by role permissions. The MCP Standard Tools SuiteApp handles record operations (create, read, update via REST), saved searches, and SuiteQL queries. It's well-suited for internal teams querying their own ledger. The limitation: each connection is scoped to a single NetSuite account and user role. If you're a B2B SaaS company serving hundreds of customers' NetSuite instances, each with distinct credentials, the first-party connector doesn't solve the multi-tenant isolation problem.

SAP MCP Ecosystem: SAP announced extensive MCP support at TechEd 2025, giving AI agents access to SAP data from all business applications - including third-party agents, not just SAP's own. MCP support for SAP HANA Cloud is now available, providing direct access to rich multi-model engines. New local MCP servers for SAP Build give developers the ability to use agentic tools with preferred code assistants like Cursor, Windsurf, and Claude Code. At Sapphire 2026, SAP opened the AI Agent Hub as a vendor-agnostic command center for governing AI agents, LLMs, and MCP servers. These are powerful for SAP-native development but require SAP BTP infrastructure and are focused on the SAP ecosystem.

CData: CData's open-source NetSuite MCP server is read-only, connecting to NetSuite data from Claude Desktop through JDBC drivers. Their managed Connect AI platform connects agents to 350+ enterprise data sources through one fully hosted remote MCP server, with semantic intelligence that understands metadata and relationships across systems. CData operates at the SQL/data connectivity layer, which works well for analytics and reporting but adds an abstraction away from the ERP's native business object APIs.

Unified API Platforms (like Truto): multi-tenant by design, with per-account cryptographic MCP URLs and dynamic tool generation from a single deployment. The platform handles the OAuth lifecycle, tenant isolation, and tool curation. The trade-off is an additional layer between your application and the ERP - but that layer absorbs the auth migration, rate-limit orchestration, and schema normalization you'd otherwise build yourself.

Sample MCP Tool Definitions for ERP Data

When an MCP server dynamically generates tools from an ERP integration, the resulting definitions look like this. These are produced at runtime from resource configuration and documentation records - not hand-coded per ERP.

NetSuite invoice tool (list):

```json
{
  "name": "list_all_netsuite_invoices",
  "description": "List all invoices from Oracle NetSuite. Returns invoice number, entity, status, total amount, due date, and currency. Supports filtering by status and date range.",
  "inputSchema": {
    "type": "object",
    "properties": {
      "status": {
        "type": "string",
        "description": "Filter by invoice status (e.g., open, paidInFull)"
      },
      "limit": {
        "type": "string",
        "description": "The number of records to fetch"
      },
      "next_cursor": {
        "type": "string",
        "description": "The cursor to fetch the next set of records. Always send back exactly the cursor value you received without decoding or modifying it."
      }
    }
  }
}
```

SAP purchase order tool (create):

```json
{
  "name": "create_a_sap_purchase_order",
  "description": "Create a new purchase order in SAP S/4HANA. Requires supplier number, purchasing organization, and at least one line item.",
  "inputSchema": {
    "type": "object",
    "properties": {
      "supplier": {
        "type": "string",
        "description": "SAP supplier (vendor) number"
      },
      "purchasing_org": {
        "type": "string",
        "description": "Purchasing organization code"
      },
      "items": {
        "type": "array",
        "description": "Line items for the purchase order",
        "items": {
          "type": "object",
          "properties": {
            "material": { "type": "string" },
            "quantity": { "type": "number" },
            "unit": { "type": "string" }
          }
        }
      }
    },
    "required": ["supplier", "purchasing_org", "items"]
  }
}
```

The AI model never sees the underlying OAuth tokens, account IDs, or API endpoint URLs. It calls list_all_netsuite_invoices and the platform resolves authentication, constructs the SuiteQL query or REST call, and returns the result. The same pattern applies to SAP OData calls routed through BTP.

ERP Authentication: NetSuite's TBA-to-OAuth 2.0 Migration and SAP BTP

The OAuth section earlier in this post applies to every SaaS API. ERP systems add their own auth complications that directly affect how you architect a multi-tenant MCP server.

NetSuite: TBA deprecation and the OAuth 2.0 transition

NetSuite has historically relied on Token-Based Authentication (TBA) - an OAuth 1.0-style scheme requiring HMAC-SHA256 signatures computed per request from five credentials (consumer key, consumer secret, token ID, token secret, and account ID), a nonce, and a timestamp. A single encoding error causes an opaque authentication failure.

As of 2027.1, no new integrations using TBA can be created for SOAP web services, REST web services, and RESTlets. Existing TBA integrations continue to work past that date, but all new integrations must use OAuth 2.0. Also in 2027.1, all new integrations using the OAuth 2.0 authorization code grant flow will require PKCE parameters.

For multi-tenant MCP servers, this creates a transition period: some customer NetSuite instances are still on TBA, others have migrated to OAuth 2.0. Your platform needs to handle both auth schemes transparently. If you build this from scratch, you're maintaining two completely different signing and token refresh implementations. A managed platform that abstracts the auth mechanism lets you serve both without conditional logic in your MCP layer. For the full migration timeline, see our NetSuite SOAP migration guide.

SAP: BTP OAuth 2.0 Client Credentials

SAP BTP uses OAuth 2.0 authentication with the Client Credentials flow (client ID and secret) for server-to-server integrations. Your MCP server obtains a bearer token from the SAP authorization server, then uses that token for API calls. The proactive token refresh pattern described earlier applies directly: schedule background refreshes before the access token expires, use distributed locks to prevent concurrent refresh races, and fall back to just-in-time refresh if the proactive job misses.
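As a rough illustration of the client credentials exchange, the sketch below builds the token request and converts the response into an expiry timestamp that the refresh scheduler can use. It follows the generic OAuth 2.0 shape from RFC 6749 rather than anything SAP-specific: the type and function names are hypothetical, the token URL is a placeholder, and whether a given authorization server expects the client secret in a Basic header or in the form body should be confirmed against its documentation.

```typescript
import { Buffer } from "node:buffer";

// Hypothetical shapes for this sketch.
interface ClientCredentials {
  tokenUrl: string;
  clientId: string;
  clientSecret: string;
}
interface TokenRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Build (but do not send) the token request, so it can be inspected and tested.
function buildClientCredentialsRequest(creds: ClientCredentials): TokenRequest {
  const basic = Buffer.from(`${creds.clientId}:${creds.clientSecret}`).toString("base64");
  return {
    url: creds.tokenUrl,
    method: "POST",
    headers: {
      Authorization: `Basic ${basic}`,
      "Content-Type": "application/x-www-form-urlencoded",
    },
    body: new URLSearchParams({ grant_type: "client_credentials" }).toString(),
  };
}

// Convert a standard token response into an absolute expiry (epoch milliseconds).
function toTokenSet(
  response: { access_token: string; expires_in: number },
  now: number,
): { accessToken: string; expiresAt: number } {
  return { accessToken: response.access_token, expiresAt: now + response.expires_in * 1000 };
}
```

Client credentials grants typically return no refresh token, so "refreshing" here means repeating the same request before `expiresAt`. The per-account lock still matters, since it keeps parallel tool calls from each requesting their own token.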

NetSuite's SOAP retirement runs on a parallel timeline. Starting with the 2026.1 NetSuite release, all newly built integrations should use REST web services with OAuth 2.0. With the 2028.2 NetSuite release, all SOAP endpoints will be disabled, and SOAP-based integrations will stop working. Between the TBA cutoff and the SOAP shutdown, any platform serving NetSuite MCP tools must handle a multi-year auth migration transparently.

Rate-Limit Orchestration for ERP Concurrency Models

NetSuite's rate-limiting model is fundamentally different from typical SaaS APIs. Instead of daily request quotas or per-minute caps, NetSuite uses a concurrency slot model where all API types (SOAP, REST, RESTlets) share a single pool of concurrent request slots at the account level.

The default concurrency limit is 15 requests per account, increasing by 10 for each SuiteCloud Plus license. Higher service tiers get more capacity (Tier 5 allows 55 concurrent requests). By default, all integrations fight for the same "Unallocated" pool of threads, which creates a noisy neighbor problem where a low-priority sync can consume all available threads, blocking high-priority requests.

This means your multi-tenant MCP server needs a per-account concurrency queue, not a per-account rate limiter. When an AI agent fires five tool calls in parallel against a customer's NetSuite instance on the default tier, those five requests consume a third of the available pool. Other integrations sharing that account will start getting rejected.

The architecture that works:

- Per-account semaphore: Track active requests per integrated account. Before dispatching an upstream call, acquire a slot. Release it when the response arrives.

- Conservative defaults: Use 5 of a customer's 15 default slots, leaving room for their other integrations, scheduled scripts, and UI-driven API calls.

- Backpressure to the agent: When the queue is full, return a structured error telling the AI agent to wait and retry. Agents handle explicit backpressure better than silent timeouts.

- Exponential backoff on 429s: Immediately retrying after a 429 causes both the retry and the next queued request to fail. Exponential backoff is mandatory.

SAP applies similar concurrency governance through BTP quotas. The same queuing pattern applies: track in-flight requests per tenant, enforce a configurable ceiling, and surface backpressure honestly.
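The per-account semaphore and the backpressure response can be sketched together. This is an in-process illustration: in a multi-node gateway the counters would live in shared state, and the class name, the `concurrency_limit` error code, and the `retryAfterMs` hint are hypothetical rather than part of MCP or either ERP's API.

```typescript
class AccountSemaphore {
  private readonly active = new Map<string, number>();
  private readonly maxPerAccount: number;

  constructor(maxPerAccount: number = 5) {
    this.maxPerAccount = maxPerAccount; // conservative share of the account's pool
  }

  // Non-blocking: either take a slot now or report that the account is saturated.
  tryAcquire(accountId: string): boolean {
    const current = this.active.get(accountId) ?? 0;
    if (current >= this.maxPerAccount) return false;
    this.active.set(accountId, current + 1);
    return true;
  }

  release(accountId: string): void {
    const current = this.active.get(accountId) ?? 0;
    if (current <= 1) this.active.delete(accountId);
    else this.active.set(accountId, current - 1);
  }
}

type DispatchResult<T> =
  | { ok: true; value: T }
  | { ok: false; error: { code: "concurrency_limit"; retryAfterMs: number; message: string } };

async function dispatchWithSlot<T>(
  semaphore: AccountSemaphore,
  accountId: string,
  call: () => Promise<T>,
): Promise<DispatchResult<T>> {
  if (!semaphore.tryAcquire(accountId)) {
    // Explicit backpressure: a structured error the agent can act on.
    return {
      ok: false,
      error: {
        code: "concurrency_limit",
        retryAfterMs: 1000,
        message: "Upstream concurrency limit reached for this account. Wait, then retry.",
      },
    };
  }
  try {
    return { ok: true, value: await call() };
  } finally {
    semaphore.release(accountId); // always free the slot, even if the call throws
  }
}
```

Rejecting instead of queuing keeps the sketch short. A production gateway might hold a bounded queue first and return the structured error only once that queue is also full.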

Zero Data Retention for Financial Data

ERP data is financial data - invoices, journal entries, payroll records, vendor payments. This is the most sensitive category of business information your AI agents will touch. When agents read this data through MCP tools, a zero data retention architecture becomes non-negotiable for enterprise customers.

The MCP server should act as a pass-through proxy: the agent's request flows to the ERP API, the response flows back, and nothing is written to disk, cached, or logged in plaintext. Request metadata (which tool was called, response status code, latency) is fine for observability. The actual financial records should transit through the MCP layer without being persisted.

This is particularly important for SOC 2 and GDPR compliance. If your MCP server caches a customer's NetSuite invoice data and you suffer a breach, you're liable for financial data you never needed to store. The zero-retention pattern eliminates this risk entirely.
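One way to keep the pass-through honest is to make the logging function structurally unable to see the payload. A minimal sketch with hypothetical names (`CallMetadata`, `proxyToolCall`) that covers only the success path:

```typescript
// Only these fields ever reach the log sink; the response body is not among them.
interface CallMetadata {
  tool: string;
  accountId: string;
  status: number;
  latencyMs: number;
}
interface UpstreamResponse<T> {
  status: number;
  body: T;
}

async function proxyToolCall<T>(
  tool: string,
  accountId: string,
  upstream: () => Promise<UpstreamResponse<T>>,
  logMetadata: (entry: CallMetadata) => void,
): Promise<T> {
  const startedAt = Date.now();
  const response = await upstream();
  logMetadata({ tool, accountId, status: response.status, latencyMs: Date.now() - startedAt });
  return response.body; // handed straight back to the caller, never persisted here
}
```

Because `logMetadata` accepts a `CallMetadata` value and nothing else, a later change that tries to log the invoice body has to widen the type first, which makes the regression visible in code review.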

Infrastructure for the Agentic Era

A multi-tenant MCP server is, fundamentally, an infrastructure problem dressed up as an AI problem. The actual LLM integration requires very little code. The hard parts—URL-based tenant isolation, encrypted credential vaulting, proactive token refresh with concurrency control, declarative tool generation, least-privilege filtering, schema-driven argument splitting—are all classic distributed systems work. The MCP protocol just sits on top.

If you're building this in-house, give yourself a realistic estimate. A team of two senior engineers can usually get a working MVP in 6-8 weeks for a single integration. Each additional integration adds 1-3 weeks of OAuth quirks, pagination edge cases, and webhook verification work. Multiply by the number of CRMs, HRIS systems, ticketing tools, and accounting platforms your customers care about, and the headcount math gets uncomfortable.

The alternative is a managed unified API platform that hands you all of this as a primitive. Leveraging a platform that handles the OAuth lifecycle, provides per-account MCP URLs, and dynamically generates tools from normalized data models allows your engineers to focus on what actually matters: the agentic reasoning and the user experience.

Whichever path you pick, the architectural principles don't change. Isolate tenants cryptographically. Keep credentials out of the model context. Generate tools as data. Default to least privilege. Surface errors honestly. Get those right and your MCP layer holds up under enterprise scrutiny. Get them wrong and you're one prompt-injection demo away from a very bad week.

FAQ

- How do I connect AI agents to Oracle NetSuite via MCP?

- You have three main options: Oracle's first-party AI Connector Service (single-tenant, role-based), CData's MCP offerings (SQL/JDBC-based), or a unified API platform like Truto that provides multi-tenant, per-account MCP URLs with dynamic tool generation. SAP's native MCP servers cover SAP systems, not NetSuite. The right choice depends on whether you need single-tenant internal access or multi-tenant B2B SaaS scale.

- What authentication does NetSuite require for MCP server integrations?

- NetSuite is transitioning from Token-Based Authentication (TBA, an OAuth 1.0-style scheme using HMAC-SHA256 signatures) to OAuth 2.0. As of NetSuite 2027.1, no new TBA integrations can be created. New builds must use OAuth 2.0, and PKCE will be mandatory for the authorization code flow. Existing TBA integrations continue working past the cutoff.

- Can a single MCP server deployment serve multiple ERP tenants securely?

- Yes, using cryptographically scoped URLs. Each connected ERP account gets a unique MCP endpoint containing a random hex token that is HMAC-hashed before storage. The URL itself acts as the authentication and tenant-isolation boundary. The AI model never sees underlying API credentials, and revoking access is as simple as deleting the token entry.

- How do you handle NetSuite's API concurrency limits with AI agents?

- NetSuite enforces concurrent thread limits (default 15) shared across all API types, not daily quotas. Your MCP server needs a per-account concurrency semaphore that tracks in-flight requests, acquires a slot before dispatching upstream calls, and applies exponential backoff on 429 errors. Default conservatively to avoid starving the customer's other integrations.