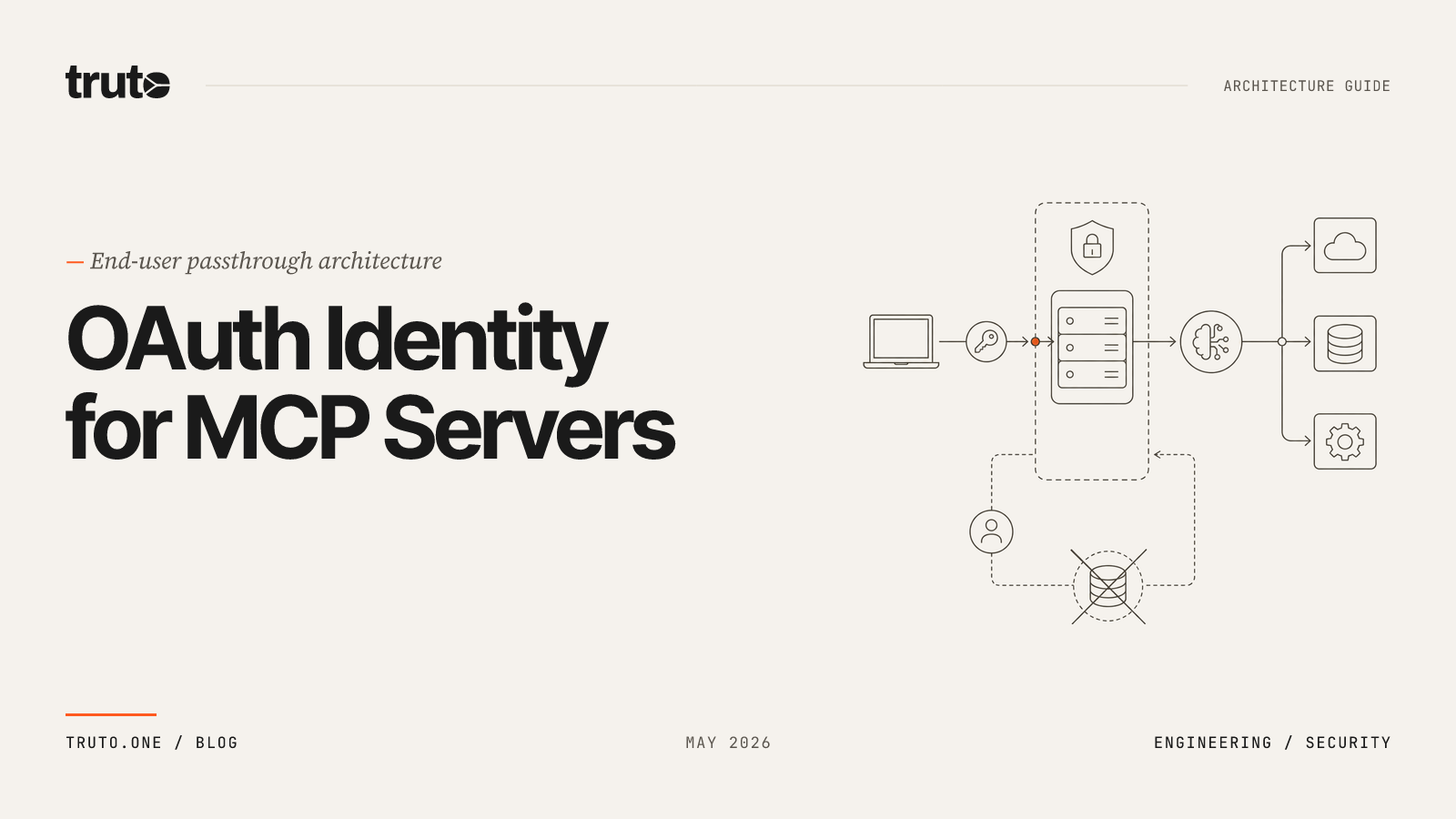

How to Handle Authentication and Tool Sharing in Multi-Agent MCP Systems (CrewAI, AutoGen, LangGraph)

Learn how to architect secure OAuth 2.0 authentication, tool routing, and rate-limit handling for multi-agent frameworks like CrewAI and AutoGen using MCP servers.

Orchestrating autonomous agents using frameworks like CrewAI, AutoGen, or LangGraph feels incredibly powerful during local development. You define a persona, assign a few Python functions, and watch the agent reason through complex tasks. But deploying that multi-agent system into a production B2B SaaS environment exposes a massive architectural gap.

The framework handles the agentic reasoning, but it does not solve the enterprise integration problem. When your agents need to act on behalf of your users inside external systems—reading Jira tickets, updating Salesforce opportunities, or pulling BambooHR employee records—you suddenly have to manage multi-tenant OAuth 2.0 lifecycles, handle vendor-specific rate limits, and ensure strict isolation between what different agents are allowed to access.

Hand-rolling that infrastructure is where multi-agent projects quietly die. Building point-to-point custom API connectors for every agent capability is an engineering dead end, and the industry has rapidly aligned on the Model Context Protocol (MCP) as the standard middleware layer for this exact problem.

This guide walks through the architectural patterns that actually work for multi-agent authentication and tool sharing over MCP—what CrewAI's MCPServerAdapter and AutoGen's McpWorkbench give you out of the box, where they leave the heavy lifting to you, and how to design a production setup that won't melt during an enterprise security review.

The Multi-Agent Integration Bottleneck

Most multi-agent demos use standard input/output (stdio) MCP servers with environment variables for credentials. The developer hardcodes an API key into a .env file, and the local agent reads it. That works on a laptop. It does not work when your CRM agent needs to act on behalf of 4,000 customers, each with their own Salesforce instance, refresh tokens, and scopes.

Before MCP, connecting AI models to external data sources required a custom integration for every combination. As we've seen with native LLM connectors falling short, if you supported five LLMs and needed to connect them to fifty enterprise SaaS applications, you were staring down the barrel of 250 custom API wrappers.

In a multi-agent framework, the pain compounds across three axes:

- Per-tenant OAuth: Every customer connects their own Salesforce, HubSpot, Jira, and BambooHR. You need a token vault, refresh logic, and a way to map an agent run to the correct user's credentials.

- Tool routing: A SalesAgent, SupportAgent, and HRAgent should not all see the same 400-tool flat list. The model wastes tokens reasoning over irrelevant capabilities and frequently picks the wrong one.

- Rate limits and failure modes: When an agent loops, it can burn a customer's API quota in seconds. HTTP 429s need to flow back to the framework cleanly so the planner can back off instead of retrying blindly.

MCP is the protocol-level answer to the first two. The third is where most platforms get it wrong.

How MCP Solves the M x N Connector Problem

The Model Context Protocol (MCP) is an open standard from Anthropic (now governed under the Linux Foundation's AAIF) that lets any AI client talk to any tool server using JSON-RPC 2.0. With MCP, the M x N integration nightmare becomes an M + N problem. With 10 models and 100 tool surfaces, for example, you need 110 standardized implementations (one client per model, one server per tool surface) instead of 1,000 custom integrations.
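To make the JSON-RPC 2.0 part concrete, here is a minimal sketch of the two requests an MCP client sends most often, `tools/list` and `tools/call`. The helper function names and the example arguments are hypothetical. Only the envelope fields and the method names come from the protocol.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # request ids only need to be unique within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def list_tools_request() -> dict:
    """Ask the server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> dict:
    """Invoke one tool by name with a JSON object of arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    # Hypothetical arguments for the update_hubspot_contact tool named in this guide.
    request = call_tool_request("update_hubspot_contact", {"id": "123"})
    print(json.dumps(request, indent=2))
```

Every client speaks this same envelope to every server, which is what turns the per-pair wrappers into one client per model and one server per tool surface.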

For a deeper primer on the architecture, see our 2026 architecture guide for SaaS PMs.

What matters for multi-agent frameworks is that every major orchestrator now natively ships an MCP client:

- CrewAI Tools supports the Model Context Protocol, giving access to tools from hundreds of MCP servers built by the community, exposed through the MCPServerAdapter.

- AutoGen provides McpWorkbench, which implements an MCP client that you can use to create an agent that uses tools provided by MCP servers.

- LangGraph nodes can be wrapped around MCP clients to invoke tools as part of a graph step.

    graph TD
        subgraph Multi-Agent Framework
            A[CrewAI Agent]:::client
            B[AutoGen Agent]:::client
            C[LangGraph Executor]:::client
        end

        subgraph MCP Middleware Layer
            D[MCP Client Interface]:::middleware
        end

        subgraph Remote MCP Servers
            E[Salesforce MCP Server]:::server
            F[Zendesk MCP Server]:::server
            G[BambooHR MCP Server]:::server
        end

        A -->|JSON-RPC| D
        B -->|JSON-RPC| D
        C -->|JSON-RPC| D
        D -->|HTTP/SSE| E
        D -->|HTTP/SSE| F
        D -->|HTTP/SSE| G

        classDef client fill:#f9f9f9,stroke:#333,stroke-width:2px;
        classDef middleware fill:#e1f5fe,stroke:#0288d1,stroke-width:2px;
        classDef server fill:#e8f5e9,stroke:#388e3c,stroke-width:2px;

The protocol standardizes the communication, but it leaves the heavy lifting of authentication, token management, and security entirely up to the developer.

Handling Authentication: From Local Stdio to Production OAuth 2.0

Local MCP setups inject API keys via environment variables. Look at any AutoGen GitHub MCP example: the agent passes a GITHUB_PERSONAL_ACCESS_TOKEN through env to a Docker-launched MCP server. This approach is useless for B2B SaaS.

In a production multi-tenant system, agents operate on behalf of specific end-users. You must use remote MCP servers communicating over HTTP or Server-Sent Events (SSE). This requires a highly secure authentication architecture that handles the OAuth 2.0 authorization code flow for hundreds of different third-party providers.

The OAuth Token Lifecycle Problem

When an AI agent connects to a remote MCP server to execute a tool (e.g., update_hubspot_contact), the server must attach a valid OAuth access token to the outbound HTTP request. Access tokens typically expire in 30 to 60 minutes.

The naive implementation—check expiry before each call, refresh inline if stale—falls apart fast. If multiple agents attempt to call the API simultaneously right as the token expires, you will encounter race conditions. Two requests racing through token.expired() will both attempt to refresh, and most identity providers invalidate the old refresh token the moment a new one is issued. Now both calls fail with an invalid_grant error, the entire token chain is revoked due to reuse detection, the account flips to needs_reauth, and your customer's CSM is on the phone.

Managed platforms solve this by treating token refreshes as a distributed systems problem. The correct architecture has three properties:

- Proactive refresh: The platform schedules work to refresh credentials 60 to 180 seconds before they expire, complete with jitter to avoid thundering herds.

- Mutex-protected refresh: Mutex locks per integrated account ensure that concurrent agent requests queue cleanly behind a single in-flight refresh operation instead of duplicating it.

- Graceful reauth signaling: When a refresh token genuinely dies, the system fires a webhook so your app can prompt the user, rather than silently failing mid-agent-run.
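The first two properties can be sketched in a few lines of asyncio. This is an in-process illustration under stated assumptions, not a description of any platform's implementation: `TokenVault`, `Token`, and the `refresh_fn` callback are hypothetical names, the refresh-ahead window is checked on access rather than by a background scheduler, and a multi-node deployment would need a distributed lock instead of `asyncio.Lock`.

```python
import asyncio
import random
import time
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict


@dataclass
class Token:
    access_token: str
    refresh_token: str
    expires_at: float  # unix timestamp in seconds


# Hypothetical callback: exchanges a refresh token for a new Token.
RefreshFn = Callable[[str], Awaitable[Token]]


class TokenVault:
    def __init__(self, refresh_fn: RefreshFn, min_lead: float = 60.0, max_lead: float = 180.0):
        self._refresh_fn = refresh_fn
        self._min_lead = min_lead
        self._max_lead = max_lead
        self._tokens: Dict[str, Token] = {}
        self._refresh_at: Dict[str, float] = {}
        self._locks: Dict[str, asyncio.Lock] = {}

    def put(self, account_id: str, token: Token) -> None:
        # Jittered lead time: refresh 60 to 180 seconds before expiry so that
        # many accounts do not all refresh at the same instant.
        lead = random.uniform(self._min_lead, self._max_lead)
        self._tokens[account_id] = token
        self._refresh_at[account_id] = token.expires_at - lead

    def _is_stale(self, account_id: str) -> bool:
        return time.time() >= self._refresh_at[account_id]

    async def get_access_token(self, account_id: str) -> str:
        if not self._is_stale(account_id):
            return self._tokens[account_id].access_token
        lock = self._locks.setdefault(account_id, asyncio.Lock())
        async with lock:
            # Re-check after acquiring the lock: another caller may already
            # have refreshed while this one was waiting.
            if self._is_stale(account_id):
                old = self._tokens[account_id]
                self.put(account_id, await self._refresh_fn(old.refresh_token))
            return self._tokens[account_id].access_token
```

The re-check inside the lock is the important part: concurrent callers queue behind one in-flight refresh, so the old refresh token is presented to the identity provider exactly once.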

For a deeper architectural treatment, see OAuth at Scale: The Architecture of Reliable Token Refreshes.

Remote MCP with HTTP Transport

For multi-tenant agents, you want remote MCP servers, not stdio. AutoGen supports this directly via SseServerParams:

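    from autogen_agentchat.agents import AssistantAgent
    from autogen_ext.tools.mcp import McpWorkbench, SseServerParams

    # Remote MCP server reached over SSE; the placeholders are per-tenant values.
    server_params = SseServerParams(
        url="https://api.truto.one/mcp/<hashed_token>",
        headers={"Authorization": "Bearer <platform_api_token>"},
    )

    async def run_crm_agent(model_client, task: str):
        # model_client is any AutoGen chat completion client you have already configured.
        # The workbench must stay open while the agent runs, so the agent lives inside it.
        async with McpWorkbench(server_params) as mcp:
            agent = AssistantAgent(
                "crm_agent",
                model_client=model_client,
                workbench=mcp,
                reflect_on_tool_use=True,
            )
            return await agent.run(task=task)

CrewAI follows the exact same shape: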

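    from crewai import Agent
    from crewai_tools import MCPServerAdapter

    server_params = {
        "url": "https://api.truto.one/mcp/<hashed_token>",
        "headers": {"Authorization": "Bearer <platform_api_token>"},
    }

    # The adapter opens the MCP connection and exposes its tools as CrewAI tools.
    with MCPServerAdapter(server_params) as tools:
        agent = Agent(
            role="CRM Analyst",
            tools=tools,
            # ... remaining Agent arguments, elided in the original
        )

Securing the MCP Endpoint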

Exposing an MCP server over HTTP introduces a security risk: anyone with the URL could theoretically execute tools against your customer's SaaS account. To lock this down, your architecture should implement a layered authentication model.

In enterprise setups, the MCP URL itself acts as a per-tenant capability token, cryptographically hashed to encode which connected account the server is bound to. Combined with a second-factor flag (like require_api_token_auth), possession of the URL alone is not enough. The agent framework must also pass a valid platform API token in the Authorization header. This ensures that only your authenticated backend services can invoke the MCP server, which is critical when MCP URLs end up in logs, dotfiles, or LangSmith traces.
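A minimal sketch of that two-layer check, written from the server's point of view. It assumes the server stores only SHA-256 hashes of URL tokens and platform API tokens. The `McpGate` and `McpBinding` names and the storage layout are hypothetical. `require_api_token_auth` is the flag described above.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


def sha256_hex(raw: str) -> str:
    """Hash a secret so the raw value never has to be stored."""
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class McpBinding:
    account_id: str               # connected account this URL is bound to
    require_api_token_auth: bool  # second-factor flag


class McpGate:
    def __init__(self, api_tokens: Iterable[str]):
        self._api_token_hashes = [sha256_hex(t) for t in api_tokens]
        self._bindings: Dict[str, McpBinding] = {}

    def register_url_token(self, url_token: str, binding: McpBinding) -> None:
        self._bindings[sha256_hex(url_token)] = binding

    def authorize(self, url_token: str, authorization: Optional[str]) -> Optional[str]:
        """Return the bound account id, or None if the request is rejected."""
        binding = self._bindings.get(sha256_hex(url_token))
        if binding is None:
            return None  # unknown URL token
        if not binding.require_api_token_auth:
            return binding.account_id  # URL-only mode
        if not authorization or not authorization.startswith("Bearer "):
            return None  # second factor required but missing
        presented = sha256_hex(authorization[len("Bearer "):])
        # compare_digest avoids leaking match position through timing
        ok = any(hmac.compare_digest(presented, h) for h in self._api_token_hashes)
        return binding.account_id if ok else None
```

With the flag enabled, a URL that leaks into a log or trace is not enough on its own: the request is rejected unless it also carries a valid bearer token.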

Trust boundary reality check. Always make sure that you trust an MCP server before using it. A stdio server executes code on your machine. SSE is not a silver bullet either: a malicious MCP server still has many ways to inject content into your application. Pin remote MCP URLs to known origins and authenticate the channel.

Tool Sharing and Routing in Multi-Agent Frameworks

A common mistake when building multi-agent systems is dumping every available API endpoint into the context window of every agent. If your SalesAgent sees BambooHR's leave-balance tool, two things happen: token cost balloons, and the LLM occasionally hallucinates an argument to call it anyway.

You must explicitly route tools to the specialized agents that need them. There are three architectural patterns for tool scoping:

1. One MCP Server Per Agent Role

Generate a separate MCP URL per role, each scoped to a different connected account or filtered toolset. The SalesAgent gets a Salesforce-only URL; the HRAgent gets a BambooHR-only URL. Frameworks like AutoGen support multiple workbenches on a single agent, so you can also compose them: pass a list of workbenches to one agent to give it both web browsing and filesystem tools.

2. Tag-Based Filtering on a Single Server

When one agent legitimately needs cross-system tools (e.g., an OnboardingAgent that touches BambooHR, Okta, and Slack), filter the toolset by functional tag. On a tag-aware MCP server, the URL configuration includes tags=["directory", "messaging"] and the server dynamically generates highly scoped tools matching those tags. This keeps the tool list tight without spinning up multiple connections.

3. Method-Level Filtering for Write-Safety

For read-only analytics agents, scope the server to methods=["read"]. The agent can list and get but cannot create, update, or delete. This is one of the cleanest ways to enforce the principle of least privilege for AI agents—far simpler than attempting to validate writes after the fact.
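The tag and method filters from patterns 2 and 3 amount to a simple predicate over the tool catalog. The sketch below is framework-agnostic. `ToolDef`, `scope_tools`, and the sample catalog are hypothetical, and it assumes that "read" expands to the list and get methods, as described above.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional

# Assumed expansion of method groups into concrete methods.
METHOD_GROUPS = {
    "read": frozenset({"list", "get"}),
    "write": frozenset({"create", "update", "delete"}),
}


@dataclass(frozen=True)
class ToolDef:
    name: str
    method: str            # "list", "get", "create", "update" or "delete"
    tags: FrozenSet[str]   # functional tags such as "directory" or "messaging"


def scope_tools(
    tools: Iterable[ToolDef],
    tags: Optional[Iterable[str]] = None,
    methods: Optional[Iterable[str]] = None,
) -> List[ToolDef]:
    """Keep tools that match any requested tag and any allowed method."""
    wanted_tags = set(tags) if tags is not None else None
    allowed_methods = None
    if methods is not None:
        allowed_methods = set()
        for m in methods:
            # A group name expands to its methods; anything else is taken literally.
            allowed_methods |= METHOD_GROUPS.get(m, {m})
    return [
        t for t in tools
        if (wanted_tags is None or t.tags & wanted_tags)
        and (allowed_methods is None or t.method in allowed_methods)
    ]


CATALOG = [
    ToolDef("list_employees", "list", frozenset({"directory"})),
    ToolDef("update_employee", "update", frozenset({"directory"})),
    ToolDef("send_message", "create", frozenset({"messaging"})),
    ToolDef("list_opportunities", "list", frozenset({"crm"})),
]

# An onboarding agent sees directory and messaging tools only.
onboarding_tools = scope_tools(CATALOG, tags=["directory", "messaging"])
# A read-only analytics agent sees list/get tools only.
analytics_tools = scope_tools(CATALOG, methods=["read"])
```

Filtering on the server side, before the tool list reaches the model, means a write tool that is out of scope never appears in the context window at all.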

Dynamic Tool Generation and Documentation Gates

Maintaining tool definitions manually is a massive technical debt trap. Third-party APIs change constantly. If you hardcode the JSON Schema for a Jira issue creation tool, it will eventually break when Jira adds a required custom field.

A practical lesson from running this in production: tool descriptions are not optional decoration. The LLM picks tools by reading their descriptions. If your MCP server auto-emits 200 tools with empty schemas, the planner will hallucinate arguments and waste retries.

Auto-Generated MCP Tools: Documentation-Driven Tool Creation for AI Agents (2026) outlines why tool generation must be dynamic. Advanced platforms derive MCP tools directly from the integration's resource definitions and documentation records on every tools/list request. If an endpoint lacks documentation, the tool is skipped. This acts as a strict quality gate, ensuring your agents are only exposed to well-documented tools with accurate query and body schemas. When the framework requests the tool list, schemas are dynamically enhanced—injecting pagination parameters like limit and next_cursor complete with LLM instructions to pass the cursor back exactly as received.
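As a rough illustration of the documentation gate and the pagination enhancement, the sketch below builds a `tools/list` payload from resource records. The record shape (`name`, `method`, `description`, `query_schema`) is a hypothetical stand-in for a platform's internal definitions. The output fields `name`, `description`, and `inputSchema` are the ones MCP tool definitions use.

```python
import copy
from typing import Dict, List

CURSOR_HINT = (
    "Cursor for the next page. Pass the next_cursor value from the previous "
    "response back exactly as received."
)


def build_tools(resources: List[Dict]) -> List[Dict]:
    """Turn documented resource records into MCP tool definitions."""
    tools = []
    for res in resources:
        description = (res.get("description") or "").strip()
        if not description:
            continue  # quality gate: undocumented endpoints are never exposed
        # Copy so the stored resource definition is not mutated.
        schema = copy.deepcopy(res.get("query_schema") or {})
        schema.setdefault("type", "object")
        props = schema.setdefault("properties", {})
        if res.get("method") == "list":
            # Inject pagination parameters with instructions aimed at the LLM.
            props.setdefault("limit", {
                "type": "integer",
                "description": "Maximum number of records to return.",
            })
            props.setdefault("next_cursor", {"type": "string", "description": CURSOR_HINT})
        tools.append({"name": res["name"], "description": description, "inputSchema": schema})
    return tools
```

Because the list is rebuilt on every request, a documentation or schema change upstream shows up in the next `tools/list` response without a code deploy.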

Managing Rate Limits and Context Windows

AI agents are inherently aggressive, and multi-agent systems are rate-limit machines. An AutoGen loop tasked with analyzing 5,000 customer records will try to execute 5,000 tool calls as fast as the LLM can generate them. Enterprise APIs will immediately reject this traffic with HTTP 429 Too Many Requests errors.

How your integration middleware handles these 429 errors dictates whether your multi-agent system succeeds or fails.

The Danger of Middleware Retries

A common instinct is to have the integration middleware automatically absorb rate limit errors, apply exponential backoff, and retry the request silently. For standard application code, this is a good pattern. For AI agents, it is fatal.

If the middleware blocks the HTTP connection for 45 seconds while waiting for a rate limit window to reset, the LLM client will likely time out. Even worse, it hides backpressure from the agent's planner, which is the only component that knows whether to abandon a step, take a different path, or escalate. The LLM does not know why the tool is taking so long, so it loses agency.

Passing Rate Limits to the Agent

The correct architectural approach is radical transparency. The integration layer should not retry, throttle, or apply backoff on rate limit errors. When an upstream API returns an HTTP 429, it should immediately pass that error back to the caller.

To make this actionable for the agent framework, the chaotic, vendor-specific rate limit headers (like X-RateLimit-Remaining-Day or Sforce-Limit-Info) must be normalized into standardized IETF headers. The IETF draft draft-ietf-httpapi-ratelimit-headers defines the standard:

- RateLimit-Limit: The total request quota in the current window.

- RateLimit-Remaining: The number of requests left.

- RateLimit-Reset: The seconds until the quota replenishes.
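A sketch of what that normalization step might look like. It makes several assumptions that vary by vendor and should be checked per API: that `Sforce-Limit-Info` carries an `api-usage=used/limit` pair, that generic `X-RateLimit-*` headers hold plain numbers with the reset expressed in seconds, and that a numeric `Retry-After` can stand in for the reset when nothing better is available. The function name is hypothetical.

```python
import re
from typing import Dict, Mapping

_SFORCE_USAGE = re.compile(r"api-usage=(\d+)/(\d+)")


def normalize_rate_limit_headers(headers: Mapping[str, str]) -> Dict[str, str]:
    """Map vendor-specific rate limit headers onto the three standard names."""
    h = {k.lower(): v for k, v in headers.items()}  # header names are case-insensitive
    out: Dict[str, str] = {}

    # Salesforce style: "api-usage=18/5000" means 18 used out of 5000.
    match = _SFORCE_USAGE.search(h.get("sforce-limit-info", ""))
    if match:
        used, limit = int(match.group(1)), int(match.group(2))
        out["RateLimit-Limit"] = str(limit)
        out["RateLimit-Remaining"] = str(max(limit - used, 0))

    # Generic X-RateLimit-* style, only filling fields not already set.
    for suffix in ("limit", "remaining", "reset"):
        value = h.get("x-ratelimit-" + suffix)
        if value is not None:
            out.setdefault("RateLimit-" + suffix.capitalize(), value)

    # Fall back to Retry-After when it is a number of seconds (it can also be a date).
    retry_after = h.get("retry-after", "")
    if "RateLimit-Reset" not in out and retry_after.isdigit():
        out["RateLimit-Reset"] = retry_after
    return out
```

The caller then only ever reads three header names, regardless of which upstream API produced the 429.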

By passing the 429 error and these standardized headers back to the agent framework, the caller (your CrewAI tool wrapper, your LangGraph node, your AutoGen tool adapter) can handle backoff gracefully. Here is a reusable retry helper for tool calls:

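    import asyncio
    import random

    async def call_with_backoff(fn, *args, max_attempts=4, **kwargs):
        """Call an async tool function, backing off on HTTP 429 responses."""
        for attempt in range(max_attempts):
            resp = await fn(*args, **kwargs)
            if resp.status_code != 429:
                return resp
            if attempt == max_attempts - 1:
                break  # out of attempts, so do not sleep again
            # RateLimit-Reset is the number of seconds until the quota replenishes
            reset = int(resp.headers.get("ratelimit-reset", "2"))
            jitter = random.uniform(0, 1)
            # Cap the wait at 30 seconds so one call cannot stall the agent
            await asyncio.sleep(min(reset, 30) + jitter)
        raise RuntimeError("Rate limit budget exhausted")

This pattern lets the agent framework's planner observe the failure, which is useful for circuit-breaking a runaway loop, while still recovering automatically when the window resets.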

Rule of thumb. Treat the unified API as a thin, honest pass-through. Retry policy belongs in the agent runtime, not the integration layer. Frameworks already support tool-level retries, error reflection (reflect_on_tool_use=True in AutoGen), and bounded iteration caps (max_iter in CrewAI).
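One way to put that rule into practice in the agent runtime is a small circuit breaker that stops a looping agent from hammering an API that keeps returning 429s. This is a sketch under assumed defaults: the `RateLimitBreaker` class, its thresholds, and the injectable clock are all hypothetical and not part of any framework.

```python
import time
from typing import Callable, Optional


class RateLimitBreaker:
    """Blocks tool calls for a cooldown period after repeated HTTP 429s."""

    def __init__(
        self,
        max_consecutive_429s: int = 3,
        cooldown_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._max = max_consecutive_429s
        self._cooldown = cooldown_seconds
        self._clock = clock
        self._consecutive = 0
        self._opened_at: Optional[float] = None

    def allow(self) -> bool:
        """Return True if the next tool call may proceed."""
        if self._opened_at is None:
            return True
        if self._clock() - self._opened_at >= self._cooldown:
            # Cooldown elapsed: close the breaker and start counting afresh.
            self._opened_at = None
            self._consecutive = 0
            return True
        return False

    def record(self, status_code: int) -> None:
        """Feed the status code of each completed tool call into the breaker."""
        if status_code == 429:
            self._consecutive += 1
            if self._consecutive >= self._max:
                self._opened_at = self._clock()
        else:
            self._consecutive = 0
```

A tool wrapper would call `allow()` before each request and `record()` after it. When `allow()` returns False, the wrapper can hand the planner an explicit "rate limited, try another step" result instead of issuing the call.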

Why Managed MCP Infrastructure Wins for B2B SaaS

If you are evaluating how to connect your multi-agent architecture to your customers' enterprise systems, you have to decide where to spend your engineering cycles. As explored in our buyer's guide to MCP server platforms, the build-vs-buy math for multi-agent infrastructure is not subtle.

To ship a production CrewAI or AutoGen system against enterprise SaaS, you need:

- A token vault with encryption at rest and concurrency-safe refresh per account.

- Per-customer OAuth app management.

- A tool generation layer that produces JSON Schemas the LLM can actually use, with descriptions curated per resource and method.

- Tag and method filtering so each agent role gets a scoped toolset.

- Standardized error and rate-limit handling across 100+ APIs that each behave differently.

Building custom MCP servers requires implementing JSON-RPC 2.0 protocol handlers, normalizing API schemas into LLM-friendly formats, building a stateful OAuth token refresh system, and writing custom logic to map flat LLM arguments into complex nested request bodies.

Managed unified API platforms (like those compared in our guide to the best MCP server platforms) abstract the infrastructure entirely. With a platform like Truto, adding a new integration to your multi-agent system is a data operation, not a code operation. Every connected account can be turned into an MCP server URL via a single API call. You configure the integration, define the tag-based routing for your specific agents, and the platform dynamically generates the MCP server. It handles the proactive token refreshes ahead of expiry, normalizes the pagination, standardizes the rate limit headers, and enforces multi-tenant isolation.

Your engineering team can focus entirely on prompt engineering, agent orchestration, and business logic—leaving the brutal realities of third-party API maintenance to the infrastructure layer.

Where to Go From Here

If you're standing up a multi-agent system this quarter, here are three concrete next steps:

- Define agent-to-toolset boundaries before you write any code. Sketch which agents read, which write, and which cross domains. This becomes your tag and method filter map.

- Pick the auth boundary early. Decide whether MCP URLs travel inside your trust boundary (URL-only auth) or whether you need the second-factor API token check. Retrofitting this is painful.

- Wire rate-limit-aware retries at the framework layer. Standard IETF headers make this a 30-line utility, not a custom integration project per provider.

FAQ

- How do you authenticate AI agents with remote MCP servers?

- Remote MCP servers in B2B SaaS require OAuth 2.0 authentication. Managed platforms handle the authorization code flow and proactively refresh tokens 60-180 seconds before they expire, using per-account mutex locks to ensure concurrent agents always have valid credentials without causing race conditions.

- How does CrewAI connect to an MCP server?

- CrewAI uses the `MCPServerAdapter` from `crewai-tools[mcp]`. You pass server parameters (stdio, SSE, or streamable HTTP), and the adapter exposes the server's tools as native CrewAI tools. For remote multi-tenant setups, point it at an HTTPS MCP URL and pass an API token in the Authorization header.

- How do you restrict which tools an agent can use in CrewAI or AutoGen?

- You should use tag-based or method-based tool filtering to dynamically generate scoped MCP servers. By assigning a server with only 'support' tags or 'read' methods to a specific agent, you enforce least privilege, prevent context window bloat, and reduce hallucinated API calls.

- Should MCP middleware automatically retry rate-limited requests?

- No. Middleware should pass HTTP 429 errors and standardized IETF rate limit headers (RateLimit-Limit, RateLimit-Remaining, RateLimit-Reset) directly back to the agent framework. This allows the framework's planner to handle exponential backoff natively without causing LLM timeouts or hiding backpressure.

- How are MCP tools generated for enterprise APIs?

- Instead of hardcoding tool definitions, advanced platforms dynamically generate MCP tools by reading integration documentation and resource schemas. This acts as a quality gate, ensuring the LLM always receives up-to-date query and body parameters with accurate descriptions.