Why SaaS Integrations Break After Launch: Root Causes and Architectural Fixes

Discover the hidden costs of API maintenance, why SaaS integrations break in production, and how declarative architectures prevent silent data failures.

The PR is merged. The sales team is notified. Your integration worked flawlessly in staging, passed QA, and the release notes just went out. You finally shipped the highly requested API connector.

And then, three weeks later, the 2 AM PagerDuty alerts start.

A customer's HubSpot sync stopped pulling contacts, and nobody noticed until the sales team opened a frantic ticket the next afternoon. Broken integrations are one of the fastest paths to customer churn in B2B SaaS. When a third-party API sync fails, your customer does not open a ticket with the upstream vendor. They open a ticket with you. If the connection keeps dropping, they cancel their subscription.

SaaS integrations break after launch because they are living dependencies on systems you do not control. The root causes of these failures rarely stem from bad code written by your team. They stem from a fundamentally flawed architectural approach that treats external APIs as static systems rather than volatile, continuously mutating dependencies.

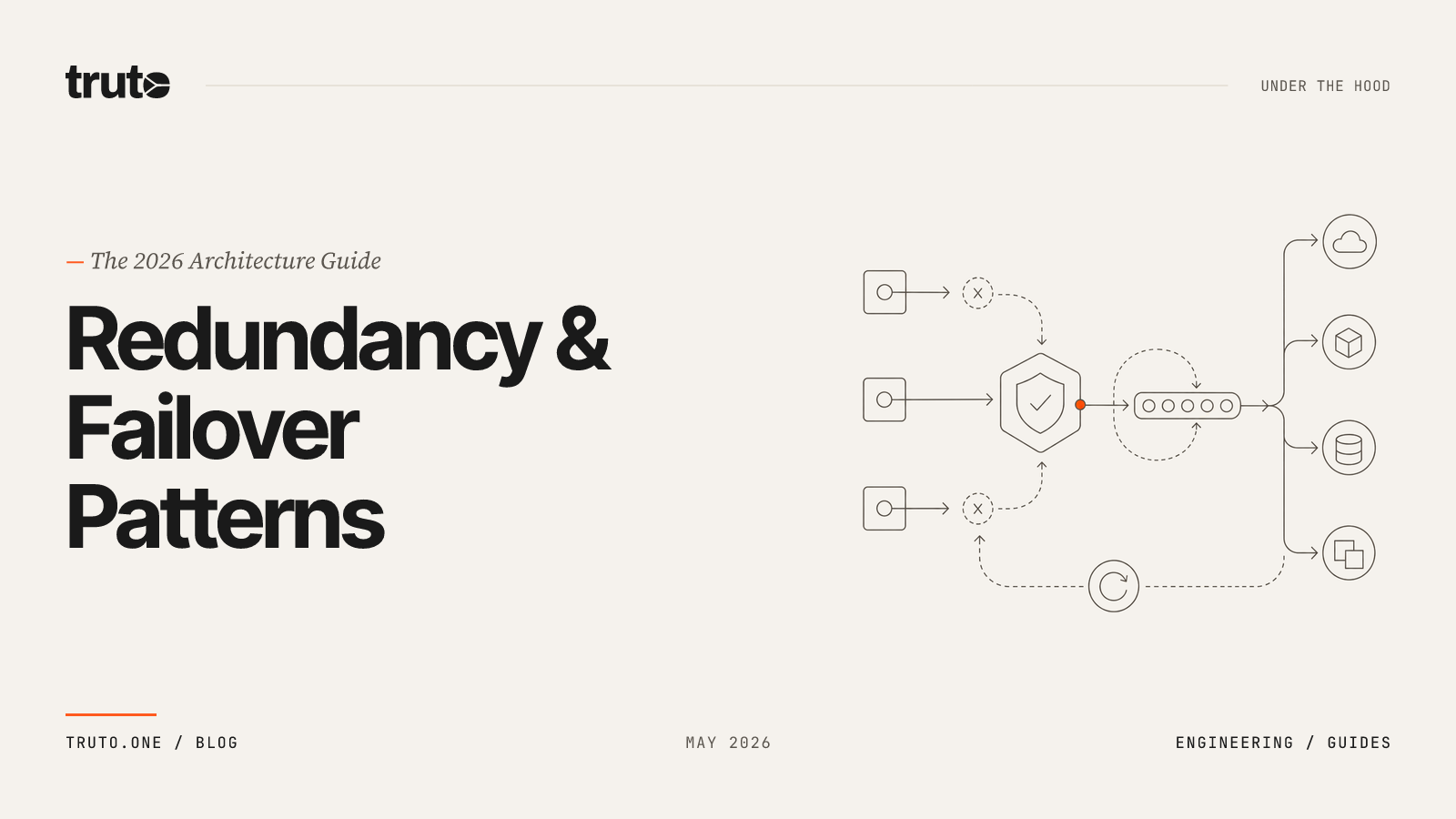

This guide breaks down exactly why SaaS integrations fail in production, the hidden engineering costs of maintaining them, and the specific architectural patterns—declarative configuration, automated credential management, and multi-tiered override hierarchies—that prevent these silent failures.

The Hidden Cost of Launching SaaS Integrations

Building the initial connection to a third-party API is the cheapest part of its lifecycle. Launching an integration is simply day one of a multi-year maintenance commitment. The build phase gets all the attention—sprint planning, engineering estimates, QA cycles. But the moment that integration hits production, it enters a maintenance phase that most teams are structurally unprepared for.

APIs require continuous updates to remain secure and compatible with new systems. Bug fixes, security patches, dependency updates, and endpoint modifications are ongoing tasks that never fully stop. Engineering teams must plan for 15-20% of the original development cost annually just to keep a single integration functioning. For SaaS integrations specifically, that number skews higher because you do not control the other side of the wire.

Integration maintenance—including API connections, data syncing, and troubleshooting—accounts for 25-40% of total technology spend according to a 2024 Digital Applied analysis. A Gartner analysis estimated that mid-market SaaS companies spend 18 to 24 percent of their engineering capacity on API maintenance alone. That is one out of every five engineers dedicated strictly to keeping existing connections alive instead of building your core product. That is not feature work; that is keeping the lights on by rotating credentials, chasing down field renames, and rewriting pagination logic after a vendor's minor version bump.

The financial consequences of ignoring this reality are severe. A single SaaS integration failure at an e-commerce business in 2024 led to $35,000 in unprocessed orders before it was detected. When data stops moving, business operations halt. If your team is struggling to keep up with these maintenance demands, supporting SaaS integrations post-launch without a dedicated team requires shifting away from hardcoded logic and toward declarative systems.

Root Cause 1: OAuth Token Expiration and Silent Revocation

OAuth 2.0 is the industry standard for API authentication, but vendors implement it in very different ways. Access tokens expire by design, typically after 30 to 60 minutes. Developers handle this by storing a refresh token and using it to request a new access token when the old one expires.

What kills integrations is when tokens expire silently—with no alert, no retry, and no user-facing error—while your background sync keeps running as if everything is fine.

In interactive systems, token expiration typically prompts users to log in. In automation systems, there is no active user session available to recover from authentication failures. This is the core architectural mismatch. B2B integrations are background processes, not interactive sessions.

Most engineering teams build reactive authentication flows. The application makes an API call, receives a 401 Unauthorized error, pauses the request, uses the refresh token to get a new access token, and retries the original request.

This reactive pattern breaks at scale due to race conditions. If a customer triggers five concurrent sync jobs while the access token is expired, all five jobs receive a 401 Unauthorized at the same time, and all five attempt to use the refresh token. Because many providers enforce single-use refresh tokens (refresh token rotation), the first job succeeds and receives a new token pair. The other four present a refresh token that is no longer valid, their requests fail permanently, and the customer experiences a silent sync failure.
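One common mitigation is a "single-flight" refresh: a per-account lock ensures only one job spends the refresh token, while concurrent jobs wait and reuse the result. The sketch below assumes a hypothetical `TokenStore` class and a `refresh_fn` callback standing in for your credential storage and the provider's token endpoint. It uses an in-process lock, so a multi-process or multi-host deployment would need a distributed lock instead.

```python
import threading
from typing import Callable, Dict


class TokenStore:
    """Hypothetical in-memory credential store with single-flight refresh per account."""

    def __init__(self, refresh_fn: Callable[[str], dict]):
        # refresh_fn(refresh_token) -> {"access_token", "refresh_token", "expires_at"}
        self._refresh_fn = refresh_fn
        self._tokens: Dict[str, dict] = {}
        self._locks: Dict[str, threading.Lock] = {}
        self._locks_guard = threading.Lock()

    def put(self, account_id: str, token: dict) -> None:
        self._tokens[account_id] = token

    def _lock_for(self, account_id: str) -> threading.Lock:
        # Create exactly one lock per account, even under concurrent access
        with self._locks_guard:
            return self._locks.setdefault(account_id, threading.Lock())

    def refresh(self, account_id: str, stale_access_token: str) -> dict:
        """Refresh once; callers that saw the same stale token reuse the new one."""
        with self._lock_for(account_id):
            current = self._tokens[account_id]
            # Another job already rotated the token while we waited for the lock
            if current["access_token"] != stale_access_token:
                return current
            # Only the lock holder spends the (possibly single-use) refresh token
            new_token = self._refresh_fn(current["refresh_token"])
            self._tokens[account_id] = new_token
            return new_token
```

With five concurrent jobs that all hit a 401 with the same stale token, only the first one calls the token endpoint; the other four return the freshly rotated pair instead of burning an already-used refresh token.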

Even worse, refresh tokens are frequently revoked without warning. Every OAuth provider handles token lifecycles differently:

- Google silently invalidates the oldest refresh token once an account exceeds Google's per-client limit on outstanding refresh tokens.

- Microsoft Entra ID refresh tokens can expire due to inactivity. If you integrate with Microsoft using OAuth 2.0, you will eventually hit a refresh failure. It often appears as an invalid_grant error, and it can break scheduled jobs until you manually recover.

- A workspace admin might rotate security policies, or the vendor simply purges inactive sessions.
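Because revocation cannot be prevented, a background job needs to turn a failed refresh into an explicit state instead of a silent failure. The sketch below assumes the provider returns errors in the RFC 6749 section 5.2 format (`{"error": "invalid_grant"}` and so on). The `RefreshOutcome` names and `classify_refresh_response` are hypothetical, and providers that return non-standard codes are routed to a human rather than retried.

```python
from enum import Enum


class RefreshOutcome(Enum):
    OK = "ok"
    NEEDS_REAUTH = "needs_reauth"   # user must reconnect; retrying will not help
    RETRY_LATER = "retry_later"     # transient; safe to retry with backoff
    CONFIG_ERROR = "config_error"   # client setup or unknown error; page an engineer


OAUTH_CLIENT_ERRORS = {"invalid_request", "invalid_client", "unauthorized_client",
                       "unsupported_grant_type", "invalid_scope"}


def classify_refresh_response(status: int, body: dict) -> RefreshOutcome:
    """Map a token-endpoint response to an action, using RFC 6749 section 5.2 error codes."""
    if 200 <= status < 300:
        return RefreshOutcome.OK
    # Server-side failures and throttling are usually transient
    if status >= 500 or status == 429:
        return RefreshOutcome.RETRY_LATER
    error = body.get("error", "")
    if error == "invalid_grant":
        # Refresh token expired, revoked, or already rotated away: surface it, don't loop
        return RefreshOutcome.NEEDS_REAUTH
    if error in OAUTH_CLIENT_ERRORS:
        return RefreshOutcome.CONFIG_ERROR
    # Unknown 4xx: escalate rather than retrying silently
    return RefreshOutcome.CONFIG_ERROR
```

A NEEDS_REAUTH outcome is the point where the customer should see a "reconnect your account" prompt, instead of discovering the outage from missing data days later.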

The Prevention Strategy: Proactive Refresh Scheduling

To prevent authentication failures, you must abandon reactive token refreshing. The system must maintain a durable state machine for every connected account. Instead of waiting for a 401 Unauthorized error to trigger a refresh attempt, the platform must schedule work ahead of token expiry.

```python
from datetime import datetime, timedelta, timezone

# Proactive refresh: don't wait for a 401 to trigger the refresh
def should_refresh(token_record, buffer=timedelta(minutes=5)):
    # expires_at must be a timezone-aware UTC datetime
    expires_at = token_record["expires_at"]
    # Refresh once we are inside the buffer window before actual expiry
    return datetime.now(timezone.utc) >= (expires_at - buffer)
```

If an access token expires in 60 minutes, an isolated background job proactively requests a new token at the 55-minute mark. As a result, the token your application sends is still valid at request time unless the provider has revoked it in the meantime. For a deep dive into architecting this state machine, read our guide on handling OAuth token refresh failures in production for third-party integrations.
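One way to implement that isolated background job is a min-heap keyed on each account's refresh time, which a worker polls and drains. This is a single-process sketch: `build_schedule`, `pop_due`, the `{account_id: expires_at}` input shape, and the 5-minute `REFRESH_BUFFER` are assumptions. A production system would persist the schedule (for example in a durable queue) so it survives restarts.

```python
import heapq
from datetime import datetime, timedelta, timezone
from typing import List, Tuple

REFRESH_BUFFER = timedelta(minutes=5)  # assumed lead time before expiry


def build_schedule(tokens: dict) -> List[Tuple[datetime, str]]:
    """Build a min-heap of (refresh_at, account_id) from {account_id: expires_at}."""
    heap = [(expires_at - REFRESH_BUFFER, account_id) for account_id, expires_at in tokens.items()]
    heapq.heapify(heap)
    return heap


def pop_due(heap: List[Tuple[datetime, str]], now: datetime) -> List[str]:
    """Pop every account whose refresh time has arrived, earliest first."""
    due = []
    while heap and heap[0][0] <= now:
        _, account_id = heapq.heappop(heap)
        due.append(account_id)
    return due
```

After refreshing a due account, the worker would push it back onto the heap with the new `expires_at - REFRESH_BUFFER`, so every connected account always has exactly one pending refresh.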

Root Cause 2: API Rate Limits and Throttling (HTTP 429)

Every third-party API protects its infrastructure using rate limits. When you exceed these limits, the API returns an HTTP 429 Too Many Requests error. Rate limits are one of the most common causes of silent data loss in SaaS integrations, particularly for platforms moving upmarket: your sync job hits a 429, retries once, fails again, and moves on, leaving records unsynced with no visible error in your product. If you want to guarantee 99.99% uptime for third-party integrations in enterprise SaaS, handling these limits gracefully is mandatory.

The problem is worse than most teams realize because rate limits are shared resources. When a customer connects your app, a marketing automation tool, and a billing system to their CRM, all three integrations share that exact same quota pool.

For example, for legacy public apps and apps using OAuth authentication distributed via the HubSpot marketplace, each HubSpot account that installs your app is limited to 110 requests every 10 seconds. Furthermore, if an account has multiple users or integrations making search requests simultaneously, the total number of requests cannot exceed five per second. Your integration will be heavily throttled simply because another app is consuming the limits.
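A client-side limiter can at least keep your own traffic under a known ceiling. It cannot see quota consumed by other apps on the same account, so 429s remain possible and must still be handled. The sketch below is a sliding-window log; the class name is hypothetical, the defaults mirror the 110-requests-per-10-seconds figure quoted above, and `now` is passed in explicitly (for example from `time.monotonic()`) to keep it testable.

```python
from collections import deque


class SlidingWindowLimiter:
    """Client-side guard that keeps one caller under `limit` requests per `window` seconds."""

    def __init__(self, limit: int = 110, window: float = 10.0):
        self.limit = limit
        self.window = window
        self._sent = deque()  # timestamps of requests inside the current window

    def try_acquire(self, now: float) -> bool:
        # Drop timestamps that have slid out of the window
        while self._sent and now - self._sent[0] >= self.window:
            self._sent.popleft()
        if len(self._sent) < self.limit:
            self._sent.append(now)
            return True
        return False
```

When `try_acquire` returns False, the caller can delay or queue the request locally instead of spending shared quota on a request that is likely to be throttled.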

```mermaid
flowchart LR
    A[Your App] -->|API calls| D[Shared<br>Rate Limit Pool]
    B[CRM Plugin] -->|API calls| D
    C[Marketing Tool] -->|API calls| D
    D -->|429 Too Many<br>Requests| A
    D -->|200 OK| B
    D -->|200 OK| C
```

The Prevention Strategy: Standardized Headers and Caller Backoff

Many developers attempt to build generic retry loops to handle 429 errors. This usually makes the problem worse. Blindly retrying a throttled request without knowing the actual limits consumes further quota and often triggers secondary penalty lockouts.

Implementing exponential backoff is difficult when every API returns rate limit data differently. Stripe uses RateLimit-Remaining, GitHub uses X-RateLimit-Remaining, and others bury the limit inside the JSON response body.

A resilient architecture normalizes upstream rate limit information into a single set of headers modeled on the IETF RateLimit header fields draft (ratelimit-limit, ratelimit-remaining, ratelimit-reset).
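A minimal sketch of that normalization step follows. The GitHub mapping uses GitHub's documented X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset headers, where reset is a UTC epoch timestamp. Several details are assumptions to verify against each vendor's documentation: the HubSpot mapping (X-HubSpot-RateLimit-Max as the limit), the function name, and the conversion of reset into delta-seconds as in the draft's examples.

```python
import time
from typing import Dict, Mapping, Optional


def normalize_rate_limit_headers(headers: Mapping[str, str],
                                 now: Optional[float] = None) -> Dict[str, str]:
    """Translate vendor rate-limit headers into ratelimit-limit/-remaining/-reset."""
    h = {k.lower(): v for k, v in headers.items()}  # header names are case-insensitive
    now = time.time() if now is None else now
    out: Dict[str, str] = {}
    # GitHub style: X-RateLimit-*, where reset is an absolute UTC epoch timestamp
    if "x-ratelimit-remaining" in h:
        out["ratelimit-remaining"] = h["x-ratelimit-remaining"]
        if "x-ratelimit-limit" in h:
            out["ratelimit-limit"] = h["x-ratelimit-limit"]
        if "x-ratelimit-reset" in h:
            # Convert the absolute epoch time into seconds from now
            out["ratelimit-reset"] = str(max(0, int(h["x-ratelimit-reset"]) - int(now)))
    # HubSpot style (assumed mapping): remaining count plus the window maximum
    if "x-hubspot-ratelimit-remaining" in h:
        out["ratelimit-remaining"] = h["x-hubspot-ratelimit-remaining"]
        if "x-hubspot-ratelimit-max" in h:
            out["ratelimit-limit"] = h["x-hubspot-ratelimit-max"]
    # Headers already in the normalized form pass through unchanged
    for name in ("ratelimit-limit", "ratelimit-remaining", "ratelimit-reset"):
        if name in h:
            out[name] = h[name]
    return out
```

Each new vendor adds one mapping branch (or, in a declarative system, one config entry), while every caller keeps reading the same three header names.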

```mermaid
sequenceDiagram
    participant App as Your Application
    participant Proxy as Integration Proxy
    participant API as Upstream API (e.g., HubSpot)
    App->>Proxy: GET /crm/contacts
    Proxy->>API: GET /crm/v3/objects/contacts
    API-->>Proxy: 429 Too Many Requests<br>X-HubSpot-RateLimit-Remaining: 0
    Proxy-->>App: 429 Too Many Requests<br>ratelimit-remaining: 0<br>ratelimit-reset: 10
    Note over App: App reads standard headers<br>App initiates exponential backoff
    App->>Proxy: Retry after 10s
```

Architectural Note on Rate Limits

A reliable integration layer should normalize rate limit headers, but it should not automatically retry or absorb HTTP 429 errors. When an upstream API throttles a request, that error must be passed directly to the caller. The caller (your application) holds the business context required to decide whether to apply exponential backoff, drop the request, or queue it for tomorrow.

By normalizing the headers, your application can write a single, unified backoff function that works across 100+ integrations. Learn more about best practices for handling API rate limits and retries across multiple third-party APIs.
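Such a unified backoff function might look like the sketch below; the function name and the `base`/`cap` defaults are assumptions. If the normalized ratelimit-reset header is present, it waits until the window reopens. Otherwise it falls back to exponential backoff with "full jitter" (a random delay between zero and the exponential bound), a common choice for keeping many workers from retrying in lockstep.

```python
import random
from typing import Mapping, Optional


def backoff_delay(attempt: int, headers: Mapping[str, str], base: float = 1.0,
                  cap: float = 60.0, rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry number `attempt` (0-based) after a 429."""
    reset = headers.get("ratelimit-reset")
    if reset is not None:
        # The upstream said when the window reopens: wait that long, up to the cap
        return min(cap, float(reset))
    # No hint available: exponential backoff with full jitter, bounded by the cap
    rng = rng or random.Random()
    return rng.uniform(0, min(cap, base * (2 ** attempt)))
```

Because the function reads only the normalized header names, the same code serves every integration. Whether to sleep, requeue, or drop the job stays a decision for the caller.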

Root Cause 3: API Deprecations and Schema Mutations

APIs are not static contracts. Vendors constantly release new versions, deprecate old endpoints, and mutate response schemas, often with little or no advance notice. Surviving API deprecations across 50+ SaaS integrations is critical: an empirical study of RESTful APIs using the RADA framework found that, on average, 46% of the operations within a target RESTful API are deprecation-related, meaning nearly half of all API operations are touched by deprecation activity. Another empirical study published by IEEE found that close to 60% of APIs show a rising trend in the number of deprecated endpoints. Around 60% of organizations experience operational disruptions due to outdated software dependencies.

The changes that break integrations are not always full endpoint removals. More often, they are subtle mutations:

- A field renamed from employee_id to employeeId (a silent casing change).

- A response that used to return a flat object now nests it inside a data wrapper.

- A pagination cursor that was a string is now a base64-encoded JSON blob.

- A date field that returned ISO 8601 now returns a Unix timestamp.
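Since mutations like these rarely raise errors, one safeguard is to check each upstream record against the shape you expect and alert on drift before bad data spreads. The sketch below is minimal: `detect_drift` and the expected-type map are hypothetical, and a real system might validate against JSON Schema instead. The example exercises two of the mutations listed above.

```python
from typing import Any, Dict, List


def detect_drift(record: Dict[str, Any], expected: Dict[str, type]) -> List[str]:
    """Compare one upstream record with the field types we expect; return readable drift notes."""
    problems = []
    for field, expected_type in expected.items():
        if field not in record:
            # A rename (employee_id -> employeeId) shows up as a missing field
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            # A format change (ISO 8601 string -> Unix int) shows up as a type change
            actual = type(record[field]).__name__
            problems.append(f"type changed: {field} is {actual}, expected {expected_type.__name__}")
    return problems
```

A non-empty result can raise an alert or pause the sync for that account, which converts a silent failure into a visible one.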

Why Hardcoded Integrations Are Fragile

The traditional approach to building integrations—writing a dedicated handler function for each API—means that every schema mutation requires a code change, a code review, a deployment, and a prayer that the fix does not break another integration.

```javascript
// Fragile: hardcoded field mapping
function mapHubSpotContact(response) {
  return {
    email: response.properties.email,
    firstName: response.properties.firstname, // HubSpot uses lowercase
    phone: response.properties.phone,
  };
}

// If HubSpot renames or re-cases a property, this silently returns undefined.
// If the payload gains a data wrapper, response.properties is undefined and this throws a TypeError.
```

If your integration is built using provider-specific code (if (provider === 'salesforce') { return data.Records }), any upstream mutation can break your application, and your engineering team must ship a patch just to fix a renamed field. This continuous cycle of code-level breakages drains engineering morale. Reducing customer churn caused by broken integrations requires removing this hardcoded logic entirely.

Root Cause 4: Custom Field Collisions in Enterprise SaaS

What works for SMB customers will fail spectacularly in the enterprise segment. When a startup connects their CRM to your product, they are likely using the default configuration. A rigid 1-to-1 data model that maps first_name, last_name, and email works perfectly.

Enterprise customers do not use default configurations. A Fortune 500 company's Salesforce instance is a highly customized database containing hundreds of custom objects, validation rules, and proprietary fields. It has likely been customized for eight years by three different consulting firms.

Suddenly, your integration hits a wall:

- Your contact.phone mapping collides with their custom Phone_Primary__c field.

- Their custom object Deal_Registration__c does not map to any resource in your unified model.

- They need Industry__c (a picklist with custom values) included in every contact sync.

If your integration relies on a strict, inflexible unified data model, it will drop this custom data silently. When an enterprise buyer realizes your integration cannot sync their proprietary fields, they will not sign the contract. Custom field collisions are not just an engineering headache—they are a direct blocker to revenue.
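At a minimum, a unified model can avoid the silent drop by carrying unmapped fields through instead of discarding them. The sketch below assumes a hypothetical `map_contact` function, a `{source_field: unified_field}` mapping format, and a `custom_fields` bag name. It preserves the data but does not resolve collisions such as Phone_Primary__c versus contact.phone; per-customer mapping overrides handle those.

```python
from typing import Any, Dict


def map_contact(raw: Dict[str, Any], mapping: Dict[str, str]) -> Dict[str, Any]:
    """Map known fields via {source: target}; keep every unmapped upstream field."""
    mapped = {target: raw[source] for source, target in mapping.items() if source in raw}
    # Anything the unified model doesn't know about survives in a passthrough bag
    mapped["custom_fields"] = {k: v for k, v in raw.items() if k not in mapping}
    return mapped
```

An enterprise customer's Industry__c value then reaches your application even before anyone has written a dedicated mapping for it.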

How to Prevent Failures with Declarative Architecture

The root causes of integration failure—token expiration, rate limits, schema mutations, and custom fields—all share a common thread. They break integrations because developers treat API connections as code rather than data. Every if (provider === 'hubspot') branch is a liability. Every hardcoded field mapping is a future production incident.

The only sustainable way to scale third-party integrations is to adopt a declarative architecture with zero integration-specific code.

```mermaid
flowchart TD
    subgraph Traditional["Code-Per-Integration"]
        A[Salesforce Handler] --> E[Deploy]
        B[HubSpot Handler] --> E
        C[Pipedrive Handler] --> E
        E --> F[Runtime]
    end
    subgraph Declarative["Declarative Config"]
        G[JSON Config:<br>Auth + Endpoints] --> J[Generic<br>Execution Engine]
        H[JSONata Mappings:<br>Field Transforms] --> J
        I[Override Hierarchy:<br>Per-Customer] --> J
    end
```

Configuration as Data

Instead of writing endpoint handler functions (hubspot_handler.ts), integration behavior should be defined entirely as JSON configuration blobs.

This configuration describes how to communicate with the API: its base URL, authentication scheme, pagination strategy, and available endpoints. The runtime engine is a generic pipeline that reads this configuration and executes it.

When an API deprecates an endpoint, you update a JSON string. No code is changed, compiled, or deployed. You can learn exactly how to ship new API connectors as data-only operations to eliminate maintenance overhead.
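To make the idea concrete, here is a sketch of one step of such a generic engine: it turns a stored JSON config plus runtime values into an HTTP request description. The config schema, the `build_request` function, and the api.example.com URLs are all hypothetical illustrations, not any particular product's format.

```python
import json
from typing import Optional
from urllib.parse import urlencode

# Hypothetical connector config; in practice this would be loaded from a database
CONFIG = json.loads("""
{
  "base_url": "https://api.example.com",
  "auth": {"type": "bearer", "header": "Authorization"},
  "pagination": {"type": "cursor", "param": "after"},
  "resources": {"contacts": {"method": "GET", "path": "/v3/contacts"}}
}
""")


def build_request(config: dict, resource: str, token: str,
                  cursor: Optional[str] = None) -> dict:
    """Generic engine step: config + runtime values -> HTTP request description."""
    endpoint = config["resources"][resource]
    query = {}
    # Pagination behavior comes from config, not from provider-specific code
    if cursor is not None and config["pagination"]["type"] == "cursor":
        query[config["pagination"]["param"]] = cursor
    url = config["base_url"] + endpoint["path"]
    if query:
        url += "?" + urlencode(query)
    headers = {}
    if config["auth"]["type"] == "bearer":
        headers[config["auth"]["header"]] = f"Bearer {token}"
    return {"method": endpoint["method"], "url": url, "headers": headers}
```

If the vendor retires /v3/contacts, editing the path string in the stored config is the entire fix; build_request itself never changes.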

JSONata and the Override Hierarchy

To handle schema mutations and custom enterprise fields without per-customer code, the transformation layer must be decoupled from the application logic.

Using a functional query language like JSONata allows you to store data mapping logic as strings in your database. A single JSONata expression can handle complex transformations, date formatting, and conditional routing without a single if/else block in your codebase.
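JSONata is its own expression language, so to stay self-contained, the sketch below substitutes a much simpler stand-in: a mapping of unified field names to dotted source paths, stored as a JSON string. It is not JSONata syntax, and `get_path` and `apply_mapping` are hypothetical. It does show the key property, though: the provider-specific knowledge lives in data, so a schema change is fixed by storing a new string.

```python
import json
from typing import Any, Dict


def get_path(obj: Any, path: str) -> Any:
    """Resolve a dotted path like 'properties.email'; return None if any segment is missing."""
    for key in path.split("."):
        if not isinstance(obj, dict) or key not in obj:
            return None
        obj = obj[key]
    return obj


def apply_mapping(response: Dict[str, Any], mapping_json: str) -> Dict[str, Any]:
    """Generic transform: per-provider knowledge lives in mapping_json, not in code."""
    mapping = json.loads(mapping_json)
    return {target: get_path(response, source) for target, source in mapping.items()}
```

When a vendor starts nesting contacts inside a data wrapper, the fix is replacing "properties.email" with "data.properties.email" in the stored mapping. A real JSONata expression can additionally express conditionals and date formatting, which this stand-in does not attempt.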

More importantly, this declarative approach enables a multi-tiered override hierarchy. This lets you customize mappings per customer without touching shared code:

| Level | Scope | Example |
|---|---|---|
| Platform Level | All customers | The default contact mapping that works for 90% of customers. |
| Environment Level | One customer's workspace | An override that adds Industry__c to the contact response. |
| Account Level | One connected Salesforce org | An override that maps Phone_Primary__c directly to contact.phone. |

Each level deep-merges on top of the previous one at runtime. If an enterprise customer needs to map a custom Salesforce object, a product manager can apply an account-level JSONata override. The customer gets their custom data synced immediately, and the engineering team never has to touch the codebase.
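A deep merge like this is short to write. The sketch below assumes a hypothetical `deep_merge` and `resolve_mapping`. For brevity its mapping values are plain field names rather than JSONata expressions, and the example reproduces the three table rows above. Nested dictionaries merge key by key, and any other value at a lower level replaces the one above it.

```python
import copy
from typing import Any, Dict


def deep_merge(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new dict: nested dicts merge recursively, other values are replaced."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


def resolve_mapping(platform: dict, environment: dict, account: dict) -> dict:
    """Apply the hierarchy in order: platform defaults, then environment, then account."""
    return deep_merge(deep_merge(platform, environment), account)
```

Because the inputs are copied rather than mutated, one customer's override can never leak into the platform default that every other customer shares.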

The Power of Zero Code

When every integration flows through the same generic execution pipeline, bugs get fixed once. An improvement to pagination logic instantly applies to all 100+ integrations. The maintenance burden grows linearly with the number of unique API patterns, not the number of integrations.

Stop Fixing Integrations. Start Architecting Them.

Broken integrations are a symptom of hardcoded architecture. As long as your team is writing provider-specific code, managing OAuth state in ad-hoc database tables, and building rigid data models, you will continue to lose engineering cycles to maintenance and troubleshoot silent data failures.

If you are an engineering leader or PM responsible for third-party integrations, the reality is that your integrations will break because the APIs on the other side of the wire will change. The build cost is only 20% of the total lifecycle cost. Hardcoded integration handlers are technical debt from day one.

By moving to a declarative, config-driven execution pipeline, you can absorb upstream API changes, handle enterprise custom fields natively, pass clean, standardized rate limit data to your application, and automate credential lifecycles. Your engineers should be building the features that differentiate your product, not reading Salesforce API changelogs at 2 AM.

FAQ

- Why do OAuth refresh tokens fail silently in production?

- Refresh tokens often fail due to single-use token rotation policies and race conditions. If multiple concurrent requests hit a 401 Unauthorized error and try to refresh simultaneously, all but the first request fail permanently. Furthermore, background automation systems lack active user sessions to recover from these authentication drops.

- How do shared API rate limits break SaaS integrations?

- Many APIs enforce account-level rate limits (e.g., HubSpot's 110 requests per 10 seconds) that are shared across all installed apps on a customer's account. When multiple integrations compete for this shared quota pool, your sync jobs get throttled or fail with HTTP 429 errors, causing silent data loss.

- How should my application handle API rate limits across different vendors?

- Your integration layer should normalize upstream rate limit data into standard IETF headers (ratelimit-limit, ratelimit-remaining, ratelimit-reset) and pass the HTTP 429 error directly to your application so it can execute unified exponential backoff based on its own business priorities.

- What is the best way to handle custom fields in enterprise SaaS integrations?

- Use a declarative architecture with a multi-level override system (Platform, Environment, Account). Instead of hardcoding data models, store mapping logic as JSONata expressions that can deep-merge and be modified per customer without requiring code deployments.

- What is a declarative integration architecture?

- A declarative integration architecture stores all integration-specific behavior as JSON configuration and transformation expressions rather than hardcoded handler functions. Adding or updating an integration becomes a data operation instead of a code deployment, heavily reducing maintenance burden.