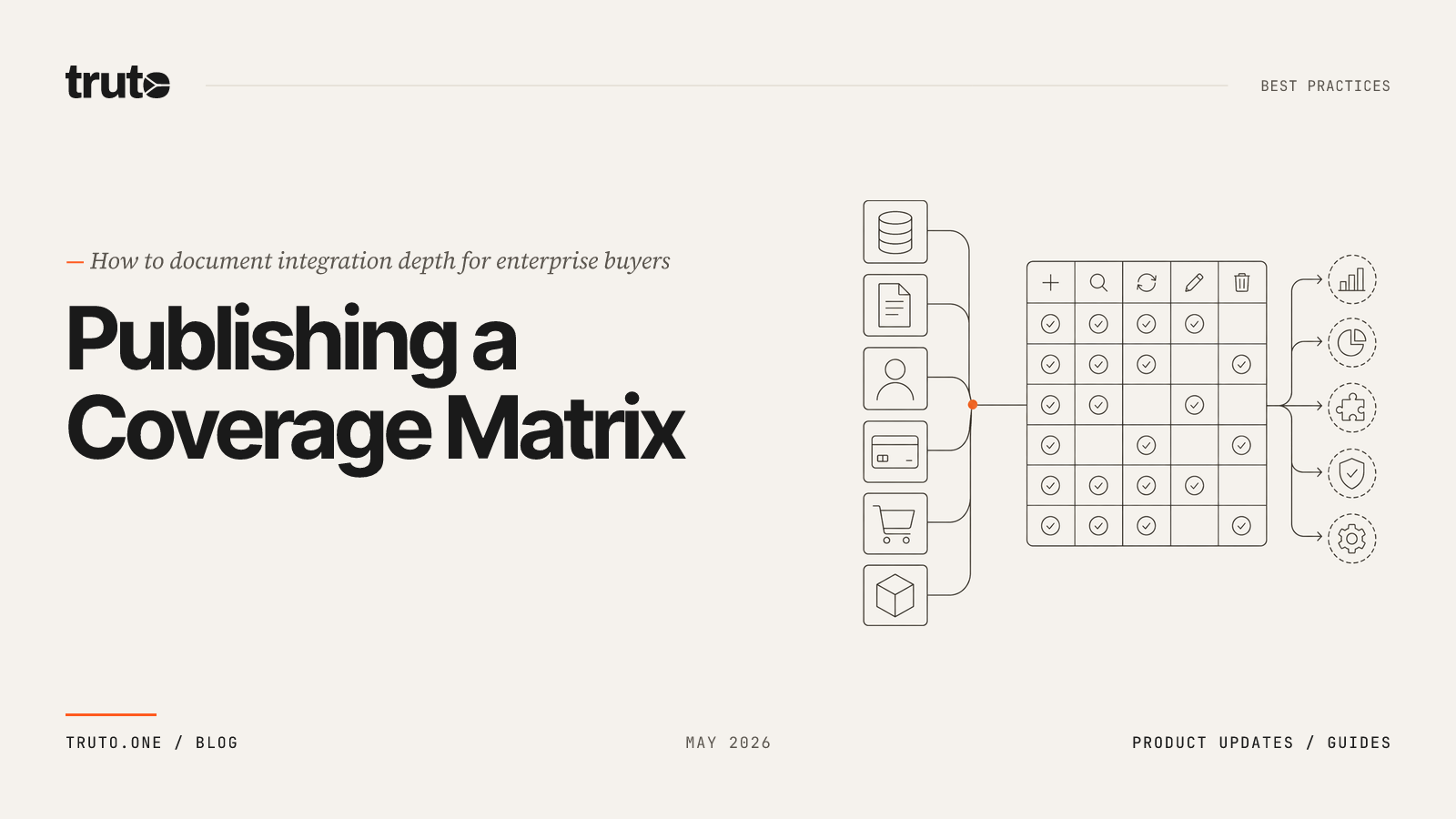

What Integrations Do Enterprise Buyers Expect in 2026? (Data & Architecture)

Enterprise buyers demand deep, real-time integrations with CRMs, HRIS, ERPs, and AI agent support. Here's the 2026 data on what to build and how to architect it at scale.

Enterprise buyers in 2026 expect your product to integrate with their CRM, HRIS, accounting platform, ticketing system, and — increasingly — their AI agents, out of the box. If you cannot demonstrate these integrations during a technical evaluation, you are already behind the competitor who can.

This is not a soft preference. The average company now spends $49M annually on SaaS, with the average portfolio growing to 275 applications. Large enterprises with 10,000+ employees spend an average of $284M and run 660 apps. Your product is competing for a slot in a massive, fragmented ecosystem — and the buyer's InfoSec team, procurement committee, and end users all have veto power over whether your tool gets adopted or shelved.

If you are a product manager staring at a spreadsheet of lost enterprise deals, the pattern is obvious. As we've seen in our analysis of how integrations close enterprise deals, prospects love your core product, complete a successful technical evaluation, and then ask how your software plugs into their highly customized Workday setup or legacy Salesforce instance. If your answer is that native connectivity is on the roadmap, you have already lost the deal.

This guide breaks down the exact integrations enterprise buyers demand in 2026, why AI is rewriting the requirements, and what architectural approach actually scales without burying your engineering team in maintenance debt.

The 2026 Enterprise SaaS Landscape: 660 Apps and Counting

To understand what enterprise buyers expect, you must first understand the sheer scale of the environment your product is entering. The era of the consolidated, all-in-one software suite is over.

In 2024, average SaaS spending grew 9.3% year over year. The average company now spends $49 million per year on SaaS, which translates to roughly $4,830 per employee. Meanwhile, the average number of SaaS applications per company rose only 2.2%, reaching around 275 apps.

But that is the average across all companies. Spend and portfolio size vary widely by company size. Smaller companies (1–500 employees) spend an average of $11.5M on SaaS and use 152 apps, while large enterprises (10,000+ employees) spend an average of $284M and use 660 apps.

Let that sink in: 660 applications. Your B2B SaaS product is just one of those 660 tools.

This extreme fragmentation is why the number of integrations a B2B SaaS product needs in 2026 is a moving target. The average public SaaS company already ships around 350 integrations, and market leaders ship 2,000+. If your integration count is in the single digits, you are signaling to enterprise prospects that you are not ready for their environment.

Key factors driving the increase in SaaS spend include vendor price hikes, more complex licensing models, and increasing AI investments. If your product requires users to manually export CSVs, copy-paste data between browser tabs, or rely on fragile third-party Zapier workflows, enterprise IT teams will block the procurement. They are actively trying to reduce shadow IT and manual data entry, not add to it.

What Integrations Do Enterprise Buyers Expect in 2026?

Integrations are now the #3 most important factor for global software buyers when evaluating a new purchase, behind only security and ease of use, according to Gartner's Global Software Buying Trends research. 39% of buyers specifically identified integration with currently owned software as the most important factor when choosing a provider.

Why Category Coverage Defines Market Leaders

The companies that dominate customer-facing B2B integrations - the ones consistently winning enterprise deals - treat integration category coverage as a core product capability, not a backlog item. Customer Relationship Management accounts for 29.12% of B2B SaaS market share. HRIS and ERP follow closely as the next most connected categories. If your product cannot plug into the buyer's system of record in each of these categories, you are invisible during the evaluation.

Market leaders in B2B integration platforms ship broad coverage across multiple categories simultaneously. They do not build one CRM connector and call it done. They cover CRM, HRIS, accounting, ticketing, identity, file storage, and communication - because enterprise buyers use all of them and expect a single vendor to connect across the board. During the evaluation stage, 44% of buyers say their primary concern is a software provider's ability to provide integration support. That makes integration support the single most important factor at that stage, ahead of sales team engagement and product demos.

But "integrations" is a broad term. Here are the specific categories and platforms that are mandatory to unblock enterprise deals today:

| Category | Must-Have Platforms | Why Buyers Care |
|---|---|---|
| CRM | Salesforce, HubSpot, Microsoft Dynamics 365, Pipedrive | Sales data is the system of record. No CRM sync = no pipeline visibility. |
| HRIS | Workday, BambooHR, Rippling, Gusto, Personio | Employee lifecycle data feeds compliance, access provisioning, and workforce planning. HRIS sync is frequently requested to support SOC 2 access controls. |
| Accounting / ERP | NetSuite, QuickBooks Online, Xero, Sage Intacct | Financial data integration is non-negotiable for audit trails, journal entries, and revenue ops. |
| Ticketing / ITSM | Jira, ServiceNow, Zendesk, Freshdesk | Support and engineering workflows rely on bidirectional status sync. Security tools must route alerts directly into these systems. |
| Identity / Directory | Okta, Azure AD (Entra ID), Google Workspace | SSO and SCIM provisioning are required for enterprise security reviews. |
| File Storage | SharePoint, Google Drive, Box, Dropbox | Document access is a baseline expectation for content-heavy workflows. |
| Communication | Slack, Microsoft Teams | Real-time notifications and conversational UIs depend on messaging platform hooks. |

The pattern is clear: enterprise buyers do not evaluate your product in isolation. They evaluate it as a node in their existing graph of data. If you are building a sales tool, check our breakdown of the most requested integrations for B2B sales tools for specific coverage priorities.

What has changed from previous years is the depth of integration expected. Checking the box with a basic logo on your marketing page is no longer sufficient. Enterprise buyers have been burned by shallow integrations. A one-way, nightly sync of basic contact records is not an integration — it is a scheduled export.

Buyers now conduct deep technical evaluations of your integration capabilities. They demand:

- Bidirectional sync — write data back to the source system, not just read it

- Custom field support — enterprise Salesforce orgs have hundreds of custom fields; your integration must handle them dynamically

- Real-time or near real-time data — batch syncs that run once a day are increasingly rejected during technical evaluations

- Granular permission models — per-tenant OAuth scoping, not a shared API key

They will ask how you handle API rate limits, how you map their bespoke custom fields to your data model, and what happens when an OAuth refresh token expires over a holiday weekend.
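One way to prepare for the rate-limit question is to show how a request is retried when a provider answers with HTTP 429. The following is a minimal sketch, not a production client: `call_with_retry`, the `send` callable, and the `(status, headers, body)` tuple shape are all hypothetical names chosen for illustration, and the header lookup assumes the key is spelled exactly `Retry-After`.

```python
import time
from typing import Callable, Mapping, Tuple

# Assumed response shape for this sketch: (status_code, headers, body).
Response = Tuple[int, Mapping[str, str], str]


def call_with_retry(
    send: Callable[[], Response],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Response:
    """Retry on HTTP 429, preferring the provider's Retry-After hint."""
    for attempt in range(max_attempts):
        status, headers, body = send()
        if status != 429:
            return status, headers, body
        if attempt == max_attempts - 1:
            break  # out of attempts, give up below
        retry_after = headers.get("Retry-After")
        # Retry-After can also be an HTTP date; this sketch only handles
        # the integer-seconds form and otherwise falls back to backoff.
        if retry_after is not None and retry_after.isdigit():
            delay = float(retry_after)
        else:
            delay = base_delay * (2 ** attempt)  # exponential backoff
        sleep(delay)
    raise RuntimeError(f"still rate limited after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the function easy to test without real waiting. A real integration layer would likely add jitter and per-tenant queues on top of this.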

Which Integration Categories to Build First

If you cannot build everything at once (and you cannot), here is the priority order based on deal velocity and breadth of enterprise use cases:

Tier 1 - Build these before your first enterprise pilot:

- CRM (Salesforce, HubSpot, Dynamics 365) - CRM is the system of record for revenue. Every sales-adjacent tool, marketing platform, and customer success product needs CRM connectivity. It is the single most requested integration category across all B2B SaaS verticals.

- Identity / Directory (Okta, Azure AD/Entra ID, Google Workspace) - SSO and SCIM provisioning are non-negotiable in enterprise security reviews. Without these, your product will not pass the InfoSec gate.

Tier 2 - Build these to close mid-market and enterprise deals:

- HRIS (Workday, BambooHR, Rippling) - Any product that touches employee data - access management, headcount planning, benefits, compliance - needs HRIS sync. Buyers frequently ask for it to support SOC 2 access controls and workforce planning.

- Ticketing / ITSM (Jira, ServiceNow, Zendesk) - Bidirectional ticket sync is expected for any tool in the support, security, or engineering operations space.

Tier 3 - Build these to expand deal size and move upmarket:

- Accounting / ERP (NetSuite, QuickBooks, Xero, Sage Intacct) - Financial data integration is mandatory for audit trails, journal entries, and revenue recognition workflows. Enterprise Resource Planning platforms are forecast to register a 17.75% CAGR through 2031, propelled by AI-enabled manufacturing digitization.

- File Storage (SharePoint, Google Drive, Box) - Required for document-heavy workflows and content management integrations.

- Communication (Slack, Microsoft Teams) - Notification and conversational UI integrations are expected by end users in every category.

The key insight: Tier 1 integrations unblock deals. Tier 2 integrations close deals. Tier 3 integrations expand deal size and reduce churn.

The AI Mandate: Why Real-Time Data Access Is Non-Negotiable

AI is no longer a future consideration. It has permanently altered the integration landscape.

Spending on AI-native apps soared 75.2% year-over-year, according to Zylo, as companies increasingly rely on tools like ChatGPT and Microsoft Copilot. Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025.

In 2025, companies began to experiment with AI agents: advanced AI systems designed to autonomously reason, plan, and execute complex tasks based on high-level goals. 44% of companies were either deploying or assessing agents last year. Enterprises have seen those experiments become full-fledged deployments in early 2026.

This changes the integration equation fundamentally. AI agents require context to function. An AI customer support agent cannot resolve a billing dispute if it cannot instantly read the customer's latest invoice from Stripe or their contract terms from Salesforce. An AI copilot that summarizes a customer account is useless if it is working off data that was cached 24 hours ago and does not reflect the call that happened this morning.

This creates a massive architectural constraint: AI agents require real-time API access, not stale, cached data.

When an AI agent needs to look up a contact's latest deal stage in Salesforce, query their open tickets in Zendesk, and check their subscription status in Stripe — all within a single LLM function call — you need a real-time pass-through layer, not a stale cache.

sequenceDiagram

participant Agent as AI Agent

participant App as Your SaaS Product

participant Layer as Integration Layer

participant CRM as Salesforce

participant Ticket as Zendesk

participant Acct as Stripe

Agent->>App: "Summarize account for Acme Corp"

App->>Layer: Fetch contact, deals, tickets, billing

par Real-time fan-out

Layer->>CRM: GET /contacts?company=acme

Layer->>Ticket: GET /tickets?org=acme

Layer->>Acct: GET /subscriptions?customer=acme

end

CRM-->>Layer: Contact + deal data

Ticket-->>Layer: Open tickets

Acct-->>Layer: Subscription status

Layer-->>App: Unified response

App-->>Agent: Structured account summary

If your integration architecture relies on batch jobs that sync data every four hours, your AI features will hallucinate or act on outdated state. If an AI agent reads a cached ticket status from three hours ago and replies to an angry enterprise customer based on that stale data, you have created a liability, not a feature.
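The real-time fan-out in the sequence diagram can be sketched with Python's `asyncio`. This is an illustrative sketch under stated assumptions: the three `fetch_*` coroutines are hypothetical stubs standing in for live provider calls, and `account_summary` is a made-up name, not part of any real SDK.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, Optional

Fetcher = Callable[[str], Awaitable[Dict[str, Any]]]


# Hypothetical stubs; a real layer would issue live HTTP requests here.
async def fetch_crm(company: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"contact": "Jane Doe", "deal_stage": "negotiation"}


async def fetch_tickets(company: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"open_tickets": 2}


async def fetch_billing(company: str) -> Dict[str, Any]:
    await asyncio.sleep(0.01)
    return {"subscription": "active"}


async def account_summary(
    company: str,
    sources: Optional[Dict[str, Fetcher]] = None,
    timeout: float = 2.0,
) -> Dict[str, Any]:
    """Query every source concurrently and merge the results in memory."""
    if sources is None:
        sources = {"crm": fetch_crm, "ticketing": fetch_tickets, "billing": fetch_billing}
    results = await asyncio.gather(
        *(asyncio.wait_for(fetch(company), timeout) for fetch in sources.values()),
        return_exceptions=True,  # one failing provider should not sink the rest
    )
    summary: Dict[str, Any] = {}
    for name, result in zip(sources, results):
        if isinstance(result, Exception):
            summary[name] = {"error": type(result).__name__}
        else:
            summary[name] = result
    return summary
```

Calling `asyncio.run(account_summary("acme"))` returns one dictionary keyed by source. Reporting a per-source error instead of raising lets the agent see which system was unavailable rather than guessing from partial data.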

During enterprise technical evaluations in 2026, the AI readiness of your integration architecture is an explicit evaluation criterion. InfoSec teams are asking: "Where does the data live? Is it cached on a third party's servers? What is the data retention policy?" If your integration layer stores customer data in its own cache, you just added a third-party data processor to the compliance review — which can add weeks to the deal cycle.

Why Legacy Integration Approaches Fail at Enterprise Scale

As engineering teams scramble to build these required connections, they often evaluate third-party integration infrastructure. But most legacy platforms fail enterprise technical evaluations due to fundamental architectural flaws.

Cache-First Unified APIs: The Compliance Trap

Many legacy unified API providers operate on a cache-first model. They poll third-party APIs constantly, ingest your customers' raw data (including PII, financial records, and employee details), normalize it, and store it in their own databases. You then query their database instead of the actual third-party API.

Enterprise InfoSec Risk: Cache-first architectures require you to replicate your enterprise customers' sensitive data into a third-party vendor's database. When an enterprise CISO asks "where does our Salesforce data live?" and you explain it is cached indefinitely in a startup's multi-tenant database, your security review will fail immediately.

This breaks down for enterprise buyers who need real-time data for AI agents, zero third-party data retention to pass SOC 2 and GDPR reviews, and custom field support that does not require waiting for the vendor to update their normalized schema. The compliance friction alone is a dealbreaker — every enterprise prospect's security team has to review that vendor as an additional data sub-processor.

Code-First Platforms: The Maintenance Nightmare

Code-first integration platforms let developers write custom TypeScript or Python scripts for each integration. This gives maximum control and works great for a handful of connectors.

The problem is linear scaling cost. Third-party APIs deprecate endpoints, change pagination schemas, and alter their rate limits constantly. If you have 10 integrations, you are maintaining 10 sets of custom scripts. At 50, you have a full-time team doing nothing but fixing pagination edge cases, handling API deprecations, and debugging OAuth token refresh failures. As we detailed in our comparison of architectural approaches for enterprise SaaS, this approach creates compounding maintenance debt that diverts engineering resources away from your core product. You have simply moved your technical debt from your own repository to a vendor's platform.

Embedded iPaaS: The Workflow Swamp

Embedded iPaaS platforms rely on visual drag-and-drop workflow builders. These are excellent tools for internal IT teams automating back-office processes. They are terrible for multi-tenant B2B SaaS products.

When you have 500 enterprise customers, you cannot manage 500 bespoke visual workflows. You need a centralized, code-driven configuration that applies globally but allows for dynamic, per-tenant overrides for custom fields. Visual builders lack the version control, automated testing, and scalable deployment mechanisms required by senior engineering teams.

Build-It-Yourself: The Math Is Brutal

Plenty of teams still build integrations from scratch. The first two or three feel manageable. By integration number ten, you have a spaghetti codebase of provider-specific HTTP clients, each with its own authentication flow, pagination logic, rate limiting strategy, and error handling. By number twenty, your best engineers are spending half their time on integration maintenance instead of building features that differentiate your product.

An engineering team that builds one integration per quarter at a fully loaded cost of $150K per engineer-quarter will spend $1.5M to reach 10 integrations — and that does not include ongoing maintenance, which typically runs 20–30% of initial build cost annually.
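The arithmetic above can be checked with a few lines of Python. This is a rough sketch under simplifying assumptions: `integration_cost` is a hypothetical helper, maintenance is charged on the full build cost for every year, and the three-year horizon is an illustrative choice rather than a figure from the data.

```python
from typing import Dict


def integration_cost(
    n_integrations: int,
    build_cost_each: float,
    maintenance_rate: float,
    years: int,
) -> Dict[str, float]:
    """Build cost plus flat annual maintenance, with no discounting."""
    build = n_integrations * build_cost_each
    maintenance = build * maintenance_rate * years
    return {"build": build, "maintenance": maintenance, "total": build + maintenance}


# 10 integrations at $150K each, maintained for 3 years (assumed horizon).
low = integration_cost(10, 150_000, 0.20, 3)
high = integration_cost(10, 150_000, 0.30, 3)
```

Under these assumptions the $1.5M build grows to roughly $2.4M to $2.85M in total spend over three years, before counting the opportunity cost of the engineers involved.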

The enterprise integration tax: If your engineering team is spending more than 15% of total capacity on integration maintenance, you are paying an unsustainable tax on your product roadmap. This is the most common reason teams start evaluating third-party integration infrastructure.

Architecting for Enterprise Scale with Zero Integration-Specific Code

The pattern that actually works at enterprise scale is a declarative, real-time proxy architecture — one that handles the mechanical complexity of third-party APIs through configuration rather than custom code per integration.

The Real-Time Proxy Execution Pipeline

Instead of caching data, a modern unified API acts as a high-performance proxy. When your application requests a list of CRM contacts, the infrastructure translates your unified request into the provider-specific format, executes the request against the third-party API in real time, normalizes the response, and returns it to your application.

flowchart LR

A[Your App] -->|Unified API Call| B[Integration Layer]

B -->|Real-time proxy| C[Salesforce]

B -->|Real-time proxy| D[HubSpot]

B -->|Real-time proxy| E[Workday]

B -->|Real-time proxy| F[Zendesk]

B -->|Real-time proxy| G[NetSuite]

style B fill:#f0f4ff,stroke:#4a6cf7

This architecture guarantees zero data retention. The integration layer processes the payload in memory and passes it back to your application. Because no customer data is persisted to disk, enterprise InfoSec teams and SOC 2 auditors approve the architecture rapidly. Your enterprise prospect's security team reviews one sub-processor that holds zero customer data, instead of a sub-processor sitting on a cached copy of their entire CRM.
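The translate, execute, normalize steps of such a proxy can be sketched in a few lines. Everything here is an assumption made for illustration: `provider_a` and `provider_b` are invented providers, their paths and field names do not correspond to any real API, and `transport` stands in for whatever HTTP client the layer would use.

```python
from typing import Any, Callable, Dict, List

# Hypothetical provider configs; paths, parameter names, and fields are
# illustrative only and do not describe the real Salesforce or HubSpot APIs.
PROVIDER_CONFIG: Dict[str, Dict[str, Any]] = {
    "provider_a": {
        "path": "/v1/contacts",
        "limit_param": "pageSize",
        "fields": {"id": "Id", "email": "Email"},
    },
    "provider_b": {
        "path": "/contacts/list",
        "limit_param": "count",
        "fields": {"id": "contact_id", "email": "email_address"},
    },
}

# A transport takes (path, params) and returns the provider's raw records.
Transport = Callable[[str, Dict[str, Any]], List[Dict[str, Any]]]


def list_contacts(provider: str, limit: int, transport: Transport) -> List[Dict[str, Any]]:
    config = PROVIDER_CONFIG[provider]
    # 1. Translate the unified request into the provider's own parameters.
    params = {config["limit_param"]: limit}
    # 2. Execute against the provider live; nothing is read from a cache.
    raw_records = transport(config["path"], params)
    # 3. Normalize in memory and return; nothing is written to storage.
    return [
        {unified: record.get(native) for unified, native in config["fields"].items()}
        for record in raw_records
    ]
```

Because the provider differences live in `PROVIDER_CONFIG` rather than in branching code, supporting another provider in this sketch means adding a dictionary entry.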

Handling Custom Enterprise Fields Dynamically

No two enterprise instances of Salesforce or Jira are identical. Every enterprise customer has a unique schema with custom objects, bespoke field names, and specific validation rules.

Legacy platforms break when they encounter custom fields because their unified models are rigid. A modern architecture solves this using a declarative config override hierarchy. Instead of writing custom code for each tenant, your application defines a declarative mapping configuration. When a specific enterprise tenant authenticates their Salesforce instance, the infrastructure maps their custom industry_vertical__c field to your unified industry attribute dynamically.

// Example: Declarative mapping via JSONata

{

"unified_model": "crm_contact",

"provider": "salesforce",

"mapping": {

"id": "Id",

"first_name": "FirstName",

"last_name": "LastName",

"email": "Email",

"custom_attributes": {

"industry": "industry_vertical__c",

"lead_score": "enterprise_score__c"

}

}

}

This transformation happens in the execution pipeline. Your application code remains completely agnostic to the fact that Customer A uses Salesforce and Customer B uses HubSpot. You write business logic against one unified model, and the infrastructure handles the provider-specific quirks. Different enterprise customers use the same SaaS platform differently, so the integration layer must support per-tenant schema customization without code deploys.
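To make the override hierarchy concrete, here is a minimal sketch of how a base mapping and a per-tenant override might be merged and applied. It uses plain field lookups rather than a JSONata engine, and `merge_mapping`, `apply_mapping`, and the `Sector__c` field are hypothetical names introduced only for this example.

```python
from typing import Any, Dict

# Base mapping shared by every tenant, mirroring the example config above.
BASE_MAPPING: Dict[str, Any] = {
    "id": "Id",
    "first_name": "FirstName",
    "last_name": "LastName",
    "email": "Email",
    "custom_attributes": {
        "industry": "industry_vertical__c",
        "lead_score": "enterprise_score__c",
    },
}


def merge_mapping(base: Dict[str, Any], override: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new mapping where tenant overrides win, merged recursively."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_mapping(merged[key], value)
        else:
            merged[key] = value
    return merged


def apply_mapping(mapping: Dict[str, Any], record: Dict[str, Any]) -> Dict[str, Any]:
    """Project a raw provider record onto the unified model."""
    unified: Dict[str, Any] = {}
    for key, source in mapping.items():
        if isinstance(source, dict):
            unified[key] = apply_mapping(source, record)
        else:
            unified[key] = record.get(source)  # missing fields become None
    return unified
```

A tenant whose org stores industry in a differently named field would supply only `{"custom_attributes": {"industry": "Sector__c"}}`; the rest of the base mapping is inherited unchanged, and no code deploy is involved.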

Normalizing the Pain of Third-Party APIs

Enterprise integrations fail in production not because the initial API call is hard, but because the edge cases are brutal. A robust proxy layer must abstract away the operational realities of interacting with hundreds of different vendors:

- Pagination Normalization: HubSpot uses cursor-based pagination. Salesforce uses offset-based pagination. Workday uses page numbers. Your engineering team should not have to write three different while loops. The infrastructure must expose a single, unified pagination cursor, automatically translating it into the provider's specific requirement under the hood.

- OAuth Token Management: Refresh tokens expire, get revoked by enterprise IT admins, or fail due to transient network errors. The platform must handle exponential backoff, automatic token refreshes shortly before expiry, and distributed locking to ensure concurrent requests do not trigger race conditions that invalidate the token. Token expiry is the #1 cause of integration outages in production.

- Rate Limit Protection: Enterprise APIs have strict rate limits. The infrastructure must read the Retry-After headers, queue requests, and apply circuit breakers automatically, preventing your application from being blacklisted by the provider.
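The unified pagination cursor from the first bullet can be sketched as an opaque token that wraps whatever state each provider needs. This is an illustration under stated assumptions: `offset_provider` and `token_provider` are in-memory fakes rather than real vendor APIs, and base64-encoded JSON is just one possible cursor encoding.

```python
import base64
import json
from typing import Any, Dict, List, Optional, Tuple

DATA = [f"rec-{i}" for i in range(5)]  # fake dataset shared by both providers


def encode_cursor(state: Dict[str, Any]) -> str:
    return base64.urlsafe_b64encode(json.dumps(state).encode()).decode()


def decode_cursor(cursor: str) -> Dict[str, Any]:
    return json.loads(base64.urlsafe_b64decode(cursor.encode()))


def offset_provider(offset: int, limit: int) -> List[str]:
    """Fake provider that paginates by numeric offset."""
    return DATA[offset:offset + limit]


def token_provider(after: Optional[str], limit: int) -> Tuple[List[str], Optional[str]]:
    """Fake provider that paginates by 'records after this id'."""
    start = 0 if after is None else DATA.index(after) + 1
    page = DATA[start:start + limit]
    next_after = page[-1] if start + limit < len(DATA) else None
    return page, next_after


def list_page(provider: str, cursor: Optional[str] = None, limit: int = 2):
    """Single entry point: callers only ever see an opaque cursor string."""
    state = decode_cursor(cursor) if cursor else {}
    if provider == "offset":
        offset = state.get("offset", 0)
        items = offset_provider(offset, limit)
        next_state = {"offset": offset + limit} if len(items) == limit else None
    elif provider == "token":
        items, after = token_provider(state.get("after"), limit)
        next_state = {"after": after} if after else None
    else:
        raise ValueError(f"unknown provider: {provider}")
    return items, (encode_cursor(next_state) if next_state else None)


def list_all(provider: str) -> List[str]:
    """One loop works for every provider."""
    items: List[str] = []
    cursor: Optional[str] = None
    while True:
        page, cursor = list_page(provider, cursor)
        items.extend(page)
        if cursor is None:
            return items
```

The application-side loop in `list_all` never changes; only the translation inside `list_page` knows which pagination style a provider uses.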

This is the approach Truto takes. Every integration — whether it is Salesforce, BambooHR, or a GraphQL-based provider — flows through the same generic declarative execution pipeline. There is no integration-specific code to maintain. Adding a new provider is a configuration change, not an engineering sprint.

For AI agent builders: A real-time proxy architecture is the natural fit for LLM tool calling and function execution. The AI agent calls a unified endpoint, receives live data from the source system, and never hits stale cache. Truto supports this natively through both REST APIs and Model Context Protocol (MCP) integration.

How to Build Your 2026 Enterprise Integration Roadmap

If you are a product manager or engineering leader looking at this problem, here is the pragmatic playbook:

1. Audit your lost deals. Pull the last 12 months of closed-lost enterprise opportunities and tag them by the integrations that were missing. This gives you an evidence-based priority list, not a guess.

2. Start with CRM and HRIS. These two categories cover the widest range of enterprise use cases. If you are a sales tool, CRM is obvious. If you touch employee data at all, HRIS is mandatory. Our guide on building integrations your sales team actually asks for breaks down the prioritization framework.

3. Plan for the long tail. Enterprise buyers do not all use Salesforce. Some use Dynamics 365. Some use Zoho. Some use a custom-built CRM running on top of a Postgres database. Your integration strategy for moving upmarket needs to account for the long tail, not just the top three logos.

4. Evaluate your build-vs-buy threshold. If you need fewer than five integrations and they are all in the same category, building in-house may make sense. If you need 15+ integrations across multiple categories — especially if you are targeting enterprise — the math almost always favors using a dedicated integration layer.

5. Make AI readiness a first-class requirement. When evaluating integration infrastructure, test whether it can serve real-time data to an LLM function call. If the answer is "we can do that, but you will get cached data from 6 hours ago," that is not enterprise-grade for 2026.

Quick Action Checklist for PMs and GTM Teams

Here is the checklist you can hand directly to your product, sales engineering, or GTM team this week:

- Tag your last 20 closed-lost deals by the specific integrations that were missing or cited as blockers during evaluation

- Map your current coverage against the category table above - identify gaps in Tier 1 (CRM, Identity) and Tier 2 (HRIS, Ticketing) first

- Add integration status to your sales deck - enterprise buyers want to see a live integrations page with logos, not a "coming soon" roadmap slide

- Run a 30-minute InfoSec pre-check on your integration architecture - can you answer "where does our customer data live?" and "what is your data retention policy?" without hesitation?

- Test your AI readiness - make a single API call through your integration layer and measure latency; if the response includes cached data older than 5 minutes, flag it as a blocker for AI agent use cases

- Document your custom field handling - if a prospect asks "how do you handle our custom Salesforce fields?" during a demo, your team should have a live answer, not a roadmap promise

- Set a 90-day integration target - pick two Tier 1 or Tier 2 platforms and commit to shipping them in the next quarter, either in-house or through a dedicated integration layer
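The AI readiness item on the checklist can be turned into a small automated check. The sketch below assumes your integration layer exposes a timestamp for when the data was read from the source system; the `fetched_at` field name and the `is_fresh` helper are hypothetical, and timestamps are assumed to be timezone-aware ISO 8601 strings with an explicit offset.

```python
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(minutes=5)  # threshold taken from the checklist above


def is_fresh(fetched_at_iso: str, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the data was read from the source within max_age.

    Both timestamps must be timezone-aware; mixing aware and naive
    datetimes raises TypeError in Python.
    """
    fetched_at = datetime.fromisoformat(fetched_at_iso)
    return now - fetched_at <= max_age


# Hypothetical response metadata from an integration layer.
response = {"data": {"status": "open"}, "fetched_at": "2026-01-01T11:58:00+00:00"}
check_time = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = is_fresh(response["fetched_at"], check_time)
```

If the layer you are evaluating cannot tell you when a record was last read from the source, that is itself worth flagging in the InfoSec pre-check.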

Stop Losing Deals to Missing Integrations

Enterprise buyers in 2026 expect your software to be a seamless participant in their broader data ecosystem. They expect real-time synchronization, support for their custom fields, and absolute data security. Gartner predicts 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026. The enterprise software ecosystem is getting more complex, not less — and the products that win are the ones that connect into the buyer's existing world without friction.

The uncomfortable truth: if your integration coverage is thin and your architecture relies on stale data or custom code per connector, you are already losing enterprise deals. You may not see it directly in your CRM, because prospects who spot a thin integrations page often self-select out before even requesting a demo.

Building these capabilities in-house is a distraction from your core product. Relying on legacy cache-first APIs introduces unacceptable compliance risks. Relying on code-first platforms creates an endless maintenance burden.

The good news is that you do not need a 20-person integrations team to fix this. A declarative, real-time integration layer can take you from five integrations to fifty without proportional engineering investment — and without the compliance overhead of cached data. Architect for scale, guarantee zero data retention, and ship the connections your buyers are demanding today.

FAQ

- What integrations do enterprise buyers expect in 2026?

- Enterprise buyers expect native integrations with CRM (Salesforce, HubSpot, Dynamics 365), HRIS (Workday, BambooHR), accounting/ERP (QuickBooks, NetSuite), ticketing (Jira, Zendesk, ServiceNow), identity providers (Okta, Azure AD), and increasingly, AI agent compatibility with real-time data access.

- Why do enterprise buyers reject cache-first unified APIs?

- Cache-first architectures require replicating sensitive enterprise data into a third-party vendor's database. This creates significant InfoSec risks, adds a data sub-processor to compliance reviews, and often causes SaaS platforms to fail strict enterprise SOC 2 and GDPR security reviews.

- How many SaaS applications does the average enterprise use?

- According to Zylo's 2025 data, large enterprises with over 10,000 employees actively use and manage an average of 660 different SaaS applications, while the average company across all sizes uses 275 apps.

- Why do AI agents require real-time API integrations?

- AI agents need live data from source systems to generate useful output. Gartner predicts 40% of enterprise apps will feature AI agents by end of 2026. Cached or stale data makes AI workflows unreliable — an agent acting on outdated information creates liabilities, not features.

- What is the difference between cache-first and real-time unified APIs?

- Cache-first unified APIs store third-party data on their own servers and serve responses from that cache, which introduces data staleness and compliance overhead. Real-time unified APIs proxy requests directly to the source API, returning live data without storing customer payloads.