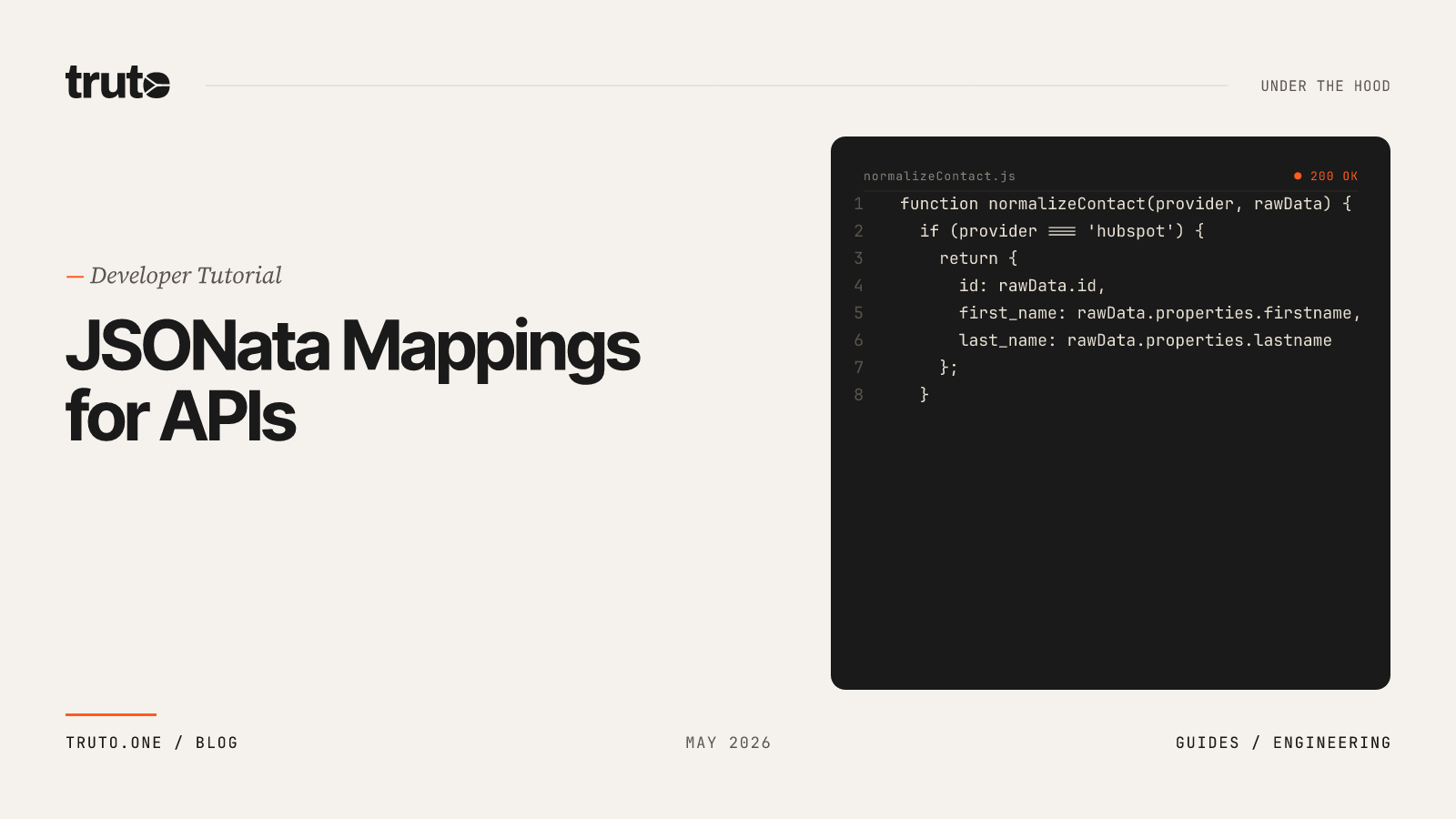

Mapping Custom Objects with JSONata: A Step-by-Step Developer Guide

Learn how to replace hardcoded API integration scripts with declarative JSONata configuration to handle enterprise custom objects and fields at scale.

If you are building B2B software, you will eventually hit a wall where standard API integrations are no longer enough. Your technical evaluation goes perfectly, the demo is flawless, and the enterprise prospect is ready to sign. Then their Salesforce administrator sends over their organization's schema.

It contains 147 custom fields on the Contact object, a highly modified custom object with nested relationships that drives their partner pipeline, and a rollup field that powers their quarterly board decks. If you want the contract, your software needs to read and write to all of it.

This is the exact moment where traditional integration strategies break down. You are forced to choose between abandoning your standardized data model or losing a six-figure deal. If you're integrating with enterprise CRMs and need to handle custom fields like Salesforce's __c or HubSpot's arbitrary properties without writing per-customer code, JSONata is the most practical transformation language available today.

This step-by-step developer guide shows how to map custom objects with JSONata, replacing hardcoded integration scripts with declarative configuration. We will explore why rigid API schemas fail, how to handle enterprise edge cases without draining developer resources, and how to architect a system that scales to hundreds of custom integrations.

The Enterprise Integration Trap: Why Custom Objects Break APIs

API schema normalization is the process of translating disparate data models from different third-party APIs into a single, canonical JSON format. It is arguably the hardest problem in B2B product integrations because software vendors fundamentally disagree on how to model reality.

Custom objects are the default state of enterprise SaaS deployments, not the exception. The moment you integrate with Salesforce, you hit the wall of custom fields and custom objects, which is exactly why unified data models break on custom Salesforce objects. Customer A has Industry_Vertical__c on their Account object. Customer B calls it Sector__c. Customer C has a completely custom object called Deal_Registration__c with 47 fields that don't exist anywhere else. Your integration code, which worked perfectly in your test org, breaks the moment it encounters a real enterprise deployment.

To understand the difficulty of standardizing API data mapping, look at how different platforms define a simple "Contact".

Every custom object you create in Salesforce gets the __c suffix automatically - that's how Salesforce distinguishes them from standard objects. In SOQL and Apex you must use the API names (with __c) to reference custom fields and objects. Integrations and metadata tooling rely on these suffixes to programmatically detect and process custom elements.

HubSpot is just as messy, but in a different way. Custom properties live in a flat properties object alongside standard ones, with no naming convention to distinguish them. Zoho, Pipedrive, and Dynamics 365 each have their own idiosyncratic approaches.

When you build direct integrations, your codebase absorbs this domain complexity. If your integration code hardcodes field names, you're signing up for one of two painful outcomes:

- The YAML deployment treadmill: Defining custom object mappings in declarative YAML files, version-controlled and deployed via CI/CD pipelines, keeps the configuration out of your application code but introduces massive friction. If a customer's Salesforce admin adds a new custom field on a Tuesday, your engineering team has to update a YAML file, open a pull request, wait for CI/CD checks, and deploy to production before the integration can recognize the new field. If you have 100 enterprise customers with active Salesforce admins, you're merging YAML pull requests weekly.

- The if/else spaghetti: You add conditional branches per customer or per CRM directly into your application, and brittle conditional logic ends up scattered across the codebase. Adding support for a new custom field requires a pull request, code review, and a production deployment.

Industry data shows that medium-complexity SaaS MVPs with third-party integrations cost between $50,000 and $150,000 to build, covering both engineering effort and customer success management. Annual maintenance typically runs 10% to 20% of that initial development cost - meaning $5,000 to $30,000 per year in pure upkeep. The bulk of this cost comes from maintaining custom objects and handling edge cases that break lowest-common-denominator unified API data models.

The fix isn't to build a bigger common data model. It's to adopt a unified API that doesn't force standardized data models on custom objects, and to treat your mapping logic as data that can be changed at runtime without touching your codebase.
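To make "mapping logic as data" concrete, here is a minimal sketch of a store that holds mapping expressions as plain strings, keyed by account and resource. The names createMappingStore, acct_42, and the 'default' fallback key are assumptions made for illustration, and a real system would presumably back this with a database table rather than an in-memory Map.

```javascript
// Hypothetical in-memory store; a real system would back this with a database table.
function createMappingStore() {
  const expressions = new Map();
  const keyOf = (accountId, resource) => `${accountId}:${resource}`;
  return {
    // Save or replace the mapping expression for one account and resource.
    set(accountId, resource, expression) {
      expressions.set(keyOf(accountId, resource), expression);
    },
    // Return the account-specific expression, or the shared default if none exists.
    get(accountId, resource) {
      return expressions.get(keyOf(accountId, resource))
        ?? expressions.get(keyOf('default', resource));
    },
  };
}

const store = createMappingStore();
store.set('default', 'contacts', 'response.{ "id": $string(Id) }');
// A customer-specific override is a data write, not a deployment.
store.set('acct_42', 'contacts', 'response.{ "id": $string(Id), "sector": Sector__c }');
```

Accounts without an override fall back to the shared expression, so supporting one customer's new custom field only touches that customer's row.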

What is JSONata? (And Why It Beats jq for API Mapping)

JSONata is a declarative, Turing-complete query and transformation language designed specifically for JSON data. Created by Andrew Coleman at IBM, JSONata is an open-source query language that lets you extract, transform, and map data from JSON documents using concise syntax. Inspired by the 'location path' semantics of XPath 3.1, it allows sophisticated queries to be expressed in a compact and intuitive notation.

Many developers default to command-line tools like jq for JSON manipulation. While jq is excellent for local bash scripts, JSONata is vastly superior for production API mapping in backend services:

| Feature | JSONata | jq |
|---|---|---|
| Runtime | JavaScript (browser + Node.js) | C (CLI-first) |
| Array handling | Implicit iteration; arrays and single values treated uniformly | Explicit iteration required |
| Embeddability | Ships as an NPM package, embeds directly in your app | Requires shelling out or FFI binding |
| Expression storage | Pure string - store in a DB column, evaluate at runtime | Typically piped via CLI |
| Ecosystem | Implementations in JS, Go, Rust, Java, Python, .NET | Primarily C with Go port |

JSONata is mostly used as an NPM package for browser- and Node.js-based integration applications. From a language point of view, jq and JSONata are quite similar, but they were designed with different use cases in mind. For jq, it was having a JSON-aware command line tool. For JSONata, it was integrating RESTful applications.

Here is why JSONata is a strong fit for API mapping:

- Native JavaScript Integration: JSONata is implemented in JavaScript and ships via NPM. It embeds directly into Node.js, edge runtimes, and web browsers.

- Lenient Array Handling: JSONata is more lenient in terms of how arrays are treated. When writing an expression, JSONata intuitively does the right thing when it encounters an array vs a simple type. jq requires you to explicitly iterate over arrays, otherwise an error is raised. This matters when dealing with CRM data where a contact might have one email, five emails, or none.

- Functional Programming Paradigm: JSONata embraces functional programming concepts like map, filter, and reduce, enabling developers to write concise, declarative code to dynamically extract custom fields without knowing their names in advance.

- Side-Effect Free: Expressions are pure functions. They transform input to output without modifying application state, making them completely safe to store in a database and execute dynamically.

- Industry Adoption: JSONata has over 750,000 weekly NPM downloads. Platforms dealing with complex B2B data routing - like AWS Step Functions, Stedi for EDI mappings, Notehub for IoT payloads, and Kestra for workflow orchestration - have adopted JSONata as their standard transformation engine.

JSONata Syntax Quick Primer

If you're seeing JSONata for the first time, here's a fast walkthrough of the syntax patterns used throughout this guide.

Path expressions navigate JSON structures. response.FirstName extracts the FirstName field. Nested paths chain naturally: response.properties.email.

String concatenation uses the & operator:

    FirstName & " " & LastName

Conditionals use ternary ? : syntax. Omitting the else branch returns undefined, which JSONata silently drops from output:

    Email ? { "email": Email, "is_primary": true }

Variable binding uses := inside parenthesized blocks:

    (
      $knownFields := ["firstname", "lastname", "email"];
      properties ~> $sift(function($v, $k) {
        $not($k in $knownFields)
      })
    )

Object construction uses { } with quoted keys. When applied to an array, it maps over each element automatically - no explicit loop required:

    response.{
      "id": Id,
      "name": FirstName & " " & LastName
    }

The chain operator ~> pipes a value into a function. These two are equivalent, where $fn is any predicate function:

    $sift($, $fn)
    $ ~> $sift($fn)

Regex matching uses ~> with a regex pattern. This tests if a key name ends with __c:

    $k ~> /__c$/i

Functions you'll use most:

| Function | Purpose | Example |
|---|---|---|
| $string(v) | Coerce to string | $string(Id) |
| $number(v) | Coerce to number | $number("87") returns 87 |
| $join(arr, sep) | Join strings | $join(["a","b"], " ") returns "a b" |
| $filter(arr, fn) | Filter array elements | $filter(phones, function($v) { $v.number }) |
| $sift(obj, fn) | Filter object keys | $sift($, function($v,$k) { $k ~> /__c$/ }) |
| $map(arr, fn) | Transform each element | $map(items, function($i) { $i.name }) |
| $merge(arr) | Merge objects into one | $merge([{"a":1}, {"b":2}]) returns {"a":1,"b":2} |
| $lookup(obj, key) | Look up value by key | $lookup($statusMap, "Web") |
| $split(str, sep) | Split string into array | $split("a;b;c", ";") returns ["a","b","c"] |
| $boolean(v) | Truthy check | Filters out null, "", 0 |
| $exists(v) | Check if defined | Returns true/false |

With these building blocks, you can handle any field mapping pattern you'll encounter in CRM integrations.

By moving transformation logic out of your Node.js application code and into JSONata expressions, you turn integration maintenance into a data operation rather than a code deployment.

Step-by-Step Developer Guide: Mapping Custom Objects with JSONata

Let's work through a real-world scenario. You're building a product that reads contact data from your customers' CRMs. Customer A uses Salesforce. Customer B uses HubSpot. Both have heavily customized their schemas.

Step 1: Analyze the Raw Input Payloads

Here is a simplified version of what Salesforce returns:

    {
      "Id": "003Dn00000F1ABCXYZ",
      "FirstName": "Sarah",
      "LastName": "Chen",
      "Email": "sarah@acmecorp.com",
      "Phone": "+1-415-555-0142",
      "MobilePhone": "+1-415-555-0199",
      "CreatedDate": "2024-03-15T10:30:00.000+0000",
      "LastModifiedDate": "2025-11-20T14:15:00.000+0000",
      "Industry_Vertical__c": "Financial Services",
      "Lead_Score__c": 87,
      "Preferred_Language__c": "en-US"
    }

And here is HubSpot's version of the same person:

    {
      "id": "551",
      "properties": {
        "firstname": "Sarah",
        "lastname": "Chen",
        "email": "sarah@acmecorp.com",
        "phone": "+1-415-555-0142",
        "mobilephone": "+1-415-555-0199",
        "createdate": "2024-03-15T10:30:00.000Z",
        "hs_lastmodifieddate": "2025-11-20T14:15:00.000Z",
        "industry_vertical": "Financial Services",
        "lead_score": "87",
        "preferred_language": "en-US"
      }
    }

Notice the differences: different field names, different nesting depth, different casing conventions, different types (HubSpot stores lead_score as a string). Your unified schema needs to normalize all of this.

Step 2: Define Your Target Unified Schema

We want our application to consume a predictable format, regardless of whether the data came from Salesforce, HubSpot, or Pipedrive. We also need a dedicated object to hold any dynamic custom fields the enterprise customer has created, as we cannot know their keys at build time.

    {
      "id": "string",
      "first_name": "string",
      "last_name": "string",
      "name": "string",
      "email_addresses": [{ "email": "string", "is_primary": "boolean" }],
      "phone_numbers": [{ "number": "string", "type": "string" }],
      "created_at": "ISO 8601 string",
      "updated_at": "ISO 8601 string",
      "custom_fields": "object (dynamic)"
    }

Step 3: Write the Salesforce JSONata Mapping

Hardcoding Industry_Vertical__c is an anti-pattern. If the customer adds a new custom field tomorrow, our integration will drop it. Instead, we use JSONata's $sift function to dynamically extract any field ending in __c.

    response.{
      "id": $string(Id),
      "first_name": FirstName,
      "last_name": LastName,
      "name": $join([FirstName, LastName], " "),
      "email_addresses": [
        Email ? { "email": Email, "is_primary": true }
      ],
      "phone_numbers": $filter([
        { "number": Phone, "type": "work" },
        { "number": MobilePhone, "type": "mobile" },
        { "number": HomePhone, "type": "home" }
      ], function($v) { $v.number }),
      "created_at": CreatedDate,
      "updated_at": LastModifiedDate,
      "custom_fields": $sift($, function($v, $k) {
        $k ~> /__c$/i and $boolean($v)
      })
    }

A few things to unpack:

- $string(Id) coerces the ID to a string, ensuring type consistency regardless of the source.
- $join([FirstName, LastName], " ") concatenates the first and last name, skipping either one if it is missing from the payload.
- $filter([...], function($v) { $v.number }) removes phone entries where the number is null or undefined. No more empty objects polluting your arrays.
- $sift($, function($v, $k) { $k ~> /__c$/i and $boolean($v) }) - this is the key line. It dynamically captures every custom field without knowing any of their names upfront. The $boolean($v) guard drops empty values, but it also drops legitimate false and 0 values, so swap it for a null check if a checkbox or a zero score must survive.
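For readers more at home in JavaScript, the sketch below approximates what the $sift call does, in plain JavaScript and without the jsonata package. It is an illustration only: sift and pickCustomFields are hypothetical names, and Opted_Out__c and Region__c are made-up fields. Unlike the $boolean($v) guard in the expression above, this version deliberately checks only for null and empty strings, so 0 and false are kept.

```javascript
// Plain-JavaScript approximation of JSONata's $sift, for illustration only.
function sift(obj, predicate) {
  return Object.fromEntries(
    Object.entries(obj).filter(([key, value]) => predicate(value, key))
  );
}

// Keep keys ending in __c whose value is neither null nor an empty string.
function pickCustomFields(record) {
  return sift(record, (value, key) =>
    /__c$/i.test(key) && value !== null && value !== ''
  );
}

const customFields = pickCustomFields({
  Id: '003Dn00000F1ABCXYZ',
  Email: 'sarah@acmecorp.com',
  Lead_Score__c: 0,          // kept: zero is a real score
  Opted_Out__c: false,       // kept: hypothetical checkbox field
  Region__c: null,           // dropped: hypothetical empty field
  Industry_Vertical__c: 'Financial Services',
});
```

Whether falsy values should survive is a product decision; the point is that the predicate decides, not the field list.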

Step 4: Write the HubSpot JSONata Mapping

HubSpot doesn't have a __c suffix convention, so we define the known standard properties explicitly and capture everything else with $sift.

    (
      $standardProps := ["firstname", "lastname", "email", "phone",
                         "mobilephone", "createdate", "hs_lastmodifieddate"];
      response.{
        "id": $string(id),
        "first_name": properties.firstname,
        "last_name": properties.lastname,
        "name": $join([properties.firstname, properties.lastname], " "),
        "email_addresses": [
          properties.email
            ? { "email": properties.email, "is_primary": true }
        ],
        "phone_numbers": $filter([
          { "number": properties.phone, "type": "work" },
          { "number": properties.mobilephone, "type": "mobile" }
        ], function($v) { $v.number }),
        "created_at": properties.createdate,
        "updated_at": properties.hs_lastmodifieddate,
        "custom_fields": properties ~> $sift(function($v, $k) {
          $not($k in $standardProps) and $boolean($v)
        })
      }
    )

Both expressions produce the same output shape. Here is the result of the Salesforce expression:

    {
      "id": "003Dn00000F1ABCXYZ",
      "first_name": "Sarah",
      "last_name": "Chen",
      "name": "Sarah Chen",
      "email_addresses": [{ "email": "sarah@acmecorp.com", "is_primary": true }],
      "phone_numbers": [
        { "number": "+1-415-555-0142", "type": "work" },
        { "number": "+1-415-555-0199", "type": "mobile" }
      ],
      "created_at": "2024-03-15T10:30:00.000+0000",
      "updated_at": "2025-11-20T14:15:00.000+0000",
      "custom_fields": {
        "Industry_Vertical__c": "Financial Services",
        "Lead_Score__c": 87,
        "Preferred_Language__c": "en-US"
      }
    }

The HubSpot result has the same structure, with "551" as the id, Z-suffixed timestamps, and custom_fields keyed by industry_vertical, lead_score (still the string "87"), and preferred_language. Two completely different API response shapes. Two different JSONata expressions. One unified output.
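Because mappings are edited as data, it can help to check each mapped record against the unified schema from Step 2 before it reaches application code. The function below is a minimal sketch of such a check in plain JavaScript. The name validateUnifiedContact is hypothetical, and treating custom_fields as optional is an assumption made here, since a mapping may produce no custom fields at all.

```javascript
// Returns a list of problems; an empty list means the record matches the unified shape.
function validateUnifiedContact(contact) {
  const problems = [];
  const isString = (v) => typeof v === 'string';

  for (const field of ['id', 'first_name', 'last_name', 'name', 'created_at', 'updated_at']) {
    if (!isString(contact[field])) problems.push(`${field} must be a string`);
  }
  if (!Array.isArray(contact.email_addresses) ||
      !contact.email_addresses.every((e) => isString(e.email) && typeof e.is_primary === 'boolean')) {
    problems.push('email_addresses must be an array of { email, is_primary }');
  }
  if (!Array.isArray(contact.phone_numbers) ||
      !contact.phone_numbers.every((p) => isString(p.number) && isString(p.type))) {
    problems.push('phone_numbers must be an array of { number, type }');
  }
  // Assumption: custom_fields may be absent, but if present it must be a plain object.
  const cf = contact.custom_fields;
  if (cf !== undefined && (cf === null || typeof cf !== 'object' || Array.isArray(cf))) {
    problems.push('custom_fields must be an object');
  }
  return problems;
}

const problems = validateUnifiedContact({
  id: '551',
  first_name: 'Sarah',
  last_name: 'Chen',
  name: 'Sarah Chen',
  email_addresses: [{ email: 'sarah@acmecorp.com', is_primary: true }],
  // A bare object instead of an array is reported as a problem.
  phone_numbers: { number: '+1-415-555-0142', type: 'work' },
  created_at: '2024-03-15T10:30:00.000Z',
  updated_at: '2025-11-20T14:15:00.000Z',
});
```

A check like this is worth running whenever a stored expression changes, since a single-element result can come back from JSONata as a bare object rather than an array.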

Step 5: Execute in Node.js

The mapping expression is just a string. You can store it in a database, evaluate it at request time, and change it without redeploying anything:

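    const jsonata = require('jsonata');

    // Compile the stored expression and evaluate it against the raw API response.
    // In jsonata 2.x, evaluate() returns a Promise.
    async function mapResponse(rawResponse, mappingExpression) {
      const expression = jsonata(mappingExpression);
      return expression.evaluate({ response: rawResponse });
    }

    // mappingExpression comes from config, not from code.
    // await is only valid inside an async function in CommonJS modules.
    async function main(salesforcePayload, savedMappingString) {
      const result = await mapResponse(salesforcePayload, savedMappingString);
      console.log(result);
    }

This is the architectural insight that separates declarative mapping from hardcoded integration scripts. The mapping is data. The runtime engine is generic.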
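Since mapResponse compiles the expression string on every call, a service that evaluates the same mapping many times might cache the compiled object. The sketch below shows one way to do that with a small least-recently-used cache. The names createExpressionCache and fakeCompile are hypothetical, and the compiler is injected so the example runs without dependencies; in a real service the injected function would presumably be the jsonata() factory.

```javascript
// Cache compiled expressions by their source string.
// `compile` is injected; in a real service it would be the jsonata() factory.
function createExpressionCache(compile, maxEntries = 500) {
  const cache = new Map();
  return function getCompiled(source) {
    if (cache.has(source)) {
      // Re-insert to mark this entry as most recently used.
      const hit = cache.get(source);
      cache.delete(source);
      cache.set(source, hit);
      return hit;
    }
    const compiled = compile(source);
    cache.set(source, compiled);
    if (cache.size > maxEntries) {
      // Map preserves insertion order, so the first key is the least recently used.
      cache.delete(cache.keys().next().value);
    }
    return compiled;
  };
}

// Stand-in compiler so the sketch runs without dependencies.
let compileCount = 0;
const fakeCompile = (source) => ({ source, id: ++compileCount });
const getCompiled = createExpressionCache(fakeCompile, 2);

getCompiled('a');
getCompiled('a'); // served from cache, no second compile
getCompiled('b');
getCompiled('c'); // evicts 'a', the least recently used entry
```

Keying on the expression text means an edited mapping simply misses the cache and is compiled fresh, so no explicit invalidation step is needed.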

Handling Custom Salesforce Fields and Objects: A Complete Before/After Example

The step-by-step guide above covers the basics with a simplified payload. Real enterprise Salesforce orgs are messier. They have picklist fields returning API names as plain strings, standard relationship lookups nested inline, and custom child relationships (__r) with their own subquery result format. Let's map all of these in a single expression.

The Raw Salesforce Payload

This is what Salesforce returns when you query a Contact with related objects using SOQL like SELECT Id, FirstName, LastName, Email, Phone, MobilePhone, AccountId, Account.Id, Account.Name, Account.Industry, LeadSource, Subscription_Tier__c, Industry_Vertical__c, Lead_Score__c, Preferred_Language__c, CreatedDate, LastModifiedDate, (SELECT Id, Name, Status__c, Partner_Tier__c, Registration_Date__c FROM Partner_Registrations__r) FROM Contact:

    {
      "Id": "003Dn00000F1ABCXYZ",
      "FirstName": "Sarah",
      "LastName": "Chen",
      "Email": "sarah@acmecorp.com",
      "Phone": "+1-415-555-0142",
      "MobilePhone": "+1-415-555-0199",
      "AccountId": "001Dn00000G2DEFXYZ",
      "Account": {
        "attributes": { "type": "Account", "url": "/services/data/v62.0/sobjects/Account/001Dn00000G2DEFXYZ" },
        "Id": "001Dn00000G2DEFXYZ",
        "Name": "Acme Corp",
        "Industry": "Financial Services"
      },
      "LeadSource": "Web",
      "Subscription_Tier__c": "Enterprise",
      "Industry_Vertical__c": "Financial Services",
      "Lead_Score__c": 87,
      "Preferred_Language__c": "en-US",
      "Partner_Registrations__r": {
        "totalSize": 1,
        "done": true,
        "records": [
          {
            "attributes": { "type": "Partner_Registration__c" },
            "Id": "a01Dn00000H3GHIXYZ",
            "Name": "PR-2025-0142",
            "Status__c": "Approved",
            "Partner_Tier__c": "Gold",
            "Registration_Date__c": "2025-01-10"
          }
        ]
      },
      "CreatedDate": "2024-03-15T10:30:00.000+0000",
      "LastModifiedDate": "2025-11-20T14:15:00.000+0000"
    }

Three things to notice: the Account relationship is inline (a standard lookup - you just navigate into it with dot notation), Partner_Registrations__r is a custom child relationship subquery with its own totalSize, done, and records array, and LeadSource is a picklist returning the API name as a plain string.

The JSONata Mapping Expression

    (
      $sourceMap := {
        "Web": "inbound_web",
        "Phone Inquiry": "inbound_phone",
        "Partner Referral": "partner",
        "Trade Show": "event",
        "Other": "other"
      };
      response.{
        "id": $string(Id),
        "first_name": FirstName,
        "last_name": LastName,
        "name": $join([FirstName, LastName], " "),
        "email_addresses": [
          Email ? { "email": Email, "is_primary": true }
        ],
        "phone_numbers": $filter([
          { "number": Phone, "type": "work" },
          { "number": MobilePhone, "type": "mobile" }
        ], function($v) { $v.number }),
        "account": Account ? {
          "id": $string(Account.Id),
          "name": Account.Name,
          "industry": Account.Industry
        },
        "lead_source": $lookup($sourceMap, LeadSource)
          ? $lookup($sourceMap, LeadSource)
          : LeadSource,
        "partner_registrations": [
          Partner_Registrations__r.records.{
            "id": $string(Id),
            "name": Name,
            "status": Status__c,
            "partner_tier": Partner_Tier__c,
            "registered_at": Registration_Date__c
          }
        ],
        "created_at": CreatedDate,
        "updated_at": LastModifiedDate,
        "custom_fields": $sift($, function($v, $k) {
          $k ~> /__c$/i and $boolean($v)
        })
      }
    )

Key patterns in this expression:

- Picklist normalization: $lookup($sourceMap, LeadSource) maps Salesforce picklist API names to your internal enum values. The fallback ? ... : LeadSource passes through unmapped values rather than silently dropping them.
- Standard relationship flattening: Account.Name navigates directly into the inline Account object that Salesforce returns for parent lookups. No special handling needed.
- Custom child relationship extraction: Partner_Registrations__r.records.{ ... } iterates over the records array inside the __r subquery result and maps each record into a clean object. The surrounding [ ] keeps the result an array even when the subquery returns a single record, which JSONata would otherwise collapse to a bare object.
- Dynamic custom field capture: The $sift call catches Subscription_Tier__c, Industry_Vertical__c, Lead_Score__c, and Preferred_Language__c without naming any of them.

The Normalized Output

    {
      "id": "003Dn00000F1ABCXYZ",
      "first_name": "Sarah",
      "last_name": "Chen",
      "name": "Sarah Chen",
      "email_addresses": [{ "email": "sarah@acmecorp.com", "is_primary": true }],
      "phone_numbers": [
        { "number": "+1-415-555-0142", "type": "work" },
        { "number": "+1-415-555-0199", "type": "mobile" }
      ],
      "account": {
        "id": "001Dn00000G2DEFXYZ",
        "name": "Acme Corp",
        "industry": "Financial Services"
      },
      "lead_source": "inbound_web",
      "partner_registrations": [
        {
          "id": "a01Dn00000H3GHIXYZ",
          "name": "PR-2025-0142",
          "status": "Approved",
          "partner_tier": "Gold",
          "registered_at": "2025-01-10"
        }
      ],
      "created_at": "2024-03-15T10:30:00.000+0000",
      "updated_at": "2025-11-20T14:15:00.000+0000",
      "custom_fields": {
        "Subscription_Tier__c": "Enterprise",
        "Industry_Vertical__c": "Financial Services",
        "Lead_Score__c": 87,
        "Preferred_Language__c": "en-US"
      }
    }

The raw Salesforce payload with its attributes metadata, __c suffixes, __r subqueries, and picklist strings is now a clean, predictable structure your application can consume without any Salesforce-specific logic.

Advanced JSONata Techniques: $sift, $map, and Dynamic Resolution

Once you move beyond basic field mapping, JSONata offers powerful functional tools for handling complex API quirks.

Catching All Custom Fields with $sift

The $sift(object, function) function filters an object's key/value pairs, keeping only those where the predicate function returns true. It's the object-level equivalent of $filter for arrays.

For Salesforce, the regex-based approach works perfectly because of the __c convention:

    $sift($, function($v, $k) {
      $k ~> /__c$/i
    })

This captures Revenue_Forecast__c, Deal_Registration__c, and any other custom field or object - including ones the customer's admin created yesterday - without you knowing about them in advance.

For platforms without a naming convention (HubSpot, Pipedrive), you define an exclusion list as demonstrated in Step 4.

Reshaping Nested Structures with $map

Some APIs return custom fields as arrays of key-value pairs rather than flat dictionaries. Dynamics 365 or a ticketing system, for example, might return something like:

    {
      "customAttributes": [
        { "key": "region", "value": "APAC" },
        { "key": "tier", "value": "Enterprise" }
      ]
    }

You can reshape this into a clean dictionary using $merge and $map:

    $merge(
      $map(customAttributes, function($item) {
        { $item.key: $item.value }
      })
    )

Result: { "region": "APAC", "tier": "Enterprise" }

Safely Falling Back with $firstNonEmpty

Different integrations store the same conceptual data in different places. A contact's primary phone number might be under phone, mobilephone, or hs_whatsapp_phone_number. Using a custom function like $firstNonEmpty (or chaining ternary operators) allows you to build resilient fallbacks.
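Since $firstNonEmpty is not a built-in JSONata function, the host application has to supply it. The sketch below is one possible plain-JavaScript implementation; the exact rules (skipping undefined, null, and blank strings) are an assumption about what "non-empty" should mean. With the jsonata NPM package, a function like this could be exposed to expressions through expression.registerFunction('firstNonEmpty', firstNonEmpty).

```javascript
// Hypothetical helper: return the first candidate that is not undefined, null,
// or a blank string. A single non-array value is treated as a one-item list.
function firstNonEmpty(candidates) {
  const list = Array.isArray(candidates) ? candidates : [candidates];
  for (const value of list) {
    if (value === undefined || value === null) continue;
    if (typeof value === 'string' && value.trim() === '') continue;
    return value;
  }
  return undefined; // nothing usable; JSONata drops undefined from the output
}

// Mobile is blank, so the work phone wins.
const primaryPhone = firstNonEmpty(['', '+1-415-555-0142', null]);
```

With that in place, a mapping expression can call the helper: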

    {
      "primary_phone": $firstNonEmpty([mobilephone, phone, company_phone])
    }

Dynamic Resource Resolution

Sometimes the mapping challenge isn't just about fields - it's about which API endpoint to call in the first place. Consider a unified "list contacts" operation. HubSpot has three different endpoints depending on what the caller needs:

- /crm/v3/objects/contacts for basic listing
- /crm/v3/objects/contacts/search when filters are applied
- /marketing/v1/contact-lists/{id}/contacts when a specific list (view) is requested

This can be expressed as a JSONata routing rule stored alongside your field mappings:

    rawQuery.view.id ? 'contact-list-results'
      : rawQuery.search_term ? 'contacts-search'
      : 'contacts'

The runtime evaluates this expression, gets back the resource identifier, and uses it to look up the endpoint configuration. No switch statement. No provider-specific branching in your codebase.
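The lookup step can be sketched as a table keyed by resource identifier. Everything here is illustrative: the hubspotEndpoints table, the HTTP methods, and the buildRequest helper are assumptions, and resolveResource is a plain-JavaScript mirror of the routing rule so the example runs without the jsonata package. In the architecture described above, that function would be replaced by evaluating the stored expression.

```javascript
// Hypothetical endpoint table keyed by the identifiers the routing rule returns.
const hubspotEndpoints = {
  'contacts': { method: 'GET', path: '/crm/v3/objects/contacts' },
  'contacts-search': { method: 'POST', path: '/crm/v3/objects/contacts/search' },
  'contact-list-results': { method: 'GET', path: '/marketing/v1/contact-lists/{id}/contacts' },
};

// Plain-JavaScript mirror of the routing rule, so the sketch runs without dependencies.
function resolveResource(rawQuery) {
  if (rawQuery.view && rawQuery.view.id) return 'contact-list-results';
  if (rawQuery.search_term) return 'contacts-search';
  return 'contacts';
}

// Look up the endpoint config and fill in the {id} placeholder when the path has one.
function buildRequest(rawQuery) {
  const endpoint = hubspotEndpoints[resolveResource(rawQuery)];
  // The callback only runs if {id} is present, so view can be absent otherwise.
  const path = endpoint.path.replace('{id}', () =>
    encodeURIComponent(String(rawQuery.view.id))
  );
  return { method: endpoint.method, path };
}

const listRequest = buildRequest({ view: { id: '42' } });
```

Adding a provider then means adding a table and a routing expression, both of which can live in configuration.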

Handling Picklists and Type Conversions

Salesforce picklists return the API name as a plain string. A LeadSource picklist with display label "Phone Inquiry" returns "Phone Inquiry" in the API response. This is fine until your application needs standardized enum values across multiple CRMs.

**Single-

FAQ

- What is JSONata and how is it used for API mapping?

- JSONata is a declarative query and transformation language for JSON. For API mapping, it lets you write concise expressions that reshape vendor-specific JSON responses into a unified schema. Because expressions are just strings, they can be stored in a database and evaluated at runtime without code deployments.

- How do you handle Salesforce custom fields (__c) dynamically?

- You can use JSONata's $sift function with a regex predicate to filter the incoming JSON payload and automatically extract any keys ending in the __c suffix: $sift($, function($v, $k) { $k ~> /__c$/i }). This catches every custom field without knowing their names in advance.

- Why is JSONata better than jq for backend API mapping?

- JSONata is natively implemented in JavaScript, making it easy to embed in Node.js backends. It handles arrays implicitly, is side-effect free, and its expressions are pure strings that can be stored in databases, making it better suited for runtime API mapping than the CLI-first jq.

- What is a 3-level override architecture for integrations?

- It is a configuration pattern where API mapping rules are stored in a database at the Platform, Environment, and Account levels. Each level's JSONata expression is deep-merged at runtime, allowing per-customer schema customizations without deploying new code.
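As a rough illustration of the deep-merge step, the sketch below models each level as an object of field-to-expression fragments and merges platform, environment, and account in that order. This representation, and the names deepMerge and resolveMapping, are assumptions made for the example; the answer above does not specify how the levels are stored.

```javascript
// Recursively merge plain objects; later levels win, arrays and scalars are replaced.
function deepMerge(base, override) {
  const isPlain = (v) => v !== null && typeof v === 'object' && !Array.isArray(v);
  if (!isPlain(base) || !isPlain(override)) {
    return override === undefined ? base : override;
  }
  const merged = { ...base };
  for (const [key, value] of Object.entries(override)) {
    merged[key] = deepMerge(base[key], value);
  }
  return merged;
}

// Resolve the effective mapping for one account: platform < environment < account.
function resolveMapping(platform, environment, account) {
  return [platform, environment, account]
    .filter((level) => level !== undefined)
    .reduce((acc, level) => deepMerge(acc, level), {});
}

const effective = resolveMapping(
  { contacts: { id: '$string(Id)', name: '$join([FirstName, LastName], " ")' } },
  { contacts: { source: '"sandbox"' } },   // hypothetical environment-level addition
  { contacts: { sector: 'Sector__c' } }    // hypothetical per-customer custom field
);
```

The account level only states what differs, so a per-customer override stays small and the platform defaults can evolve underneath it.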