How to Build a Coupa MCP Integration (2026 Architecture Guide)

A highly technical, step-by-step architectural guide to building a Coupa MCP server. Learn how to expose enterprise procurement data to AI agents safely.

If you are reading this, your engineering team is likely staring down a hard requirement to expose enterprise spend management data to an AI agent. You are trying to connect an LLM to Coupa's procurement platform using the Model Context Protocol (MCP), and you are about to discover why enterprise ERPs are among the hardest APIs to expose to AI.

Exposing Coupa's legacy, highly complex Core REST API directly to an LLM via naive function calling is a guaranteed failure. Coupa's API returns XML by default, enforces a strict 50-record pagination ceiling, publishes zero documentation on rate limits, and produces payloads so bloated they can blow past an LLM's context window in a single response. You need a standard way to connect Claude, ChatGPT, or a custom LLM to a customer's Coupa instance without hardcoding a brittle, point-to-point integration.

For your internal architecture review, that means a Coupa-specific MCP integration design built around a middleware layer: an MCP server that translates the LLM's JSON-RPC tool calls into safe, normalized API requests.

This guide provides the architectural roadmap your team needs. We cover the specific Coupa API challenges that make MCP integration painful, the protocol-level decisions you must get right, how to structure your MCP tool schemas, and the build-vs-buy tradeoffs that determine whether this project takes a week or a quarter.

The Rise of Agentic Procurement in 2026

Enterprise software buyers no longer accept isolated data silos, and procurement is currently the highest-stakes vertical for agentic AI.

According to research by AI at Wharton, generative AI adoption in procurement nearly doubled from 50% to 94% between 2023 and 2024, making procurement the leading enterprise function for AI adoption, ahead of product development, marketing, and operations.

But individual adoption and organizational transformation are not the same thing. The Hackett Group's 2025 Key Issues Study found that procurement workloads are projected to increase by 10% while budgets grow just 1%, creating a 9% efficiency gap that only technology can close. That gap is precisely where AI agents come in—not as chatbot overlays, but as autonomous workflows that can discover suppliers, read purchase orders, validate invoices against contracts, and flag spend anomalies across supplier networks without human initiation at every step.

The market trajectory backs this urgency. Mordor Intelligence projects that the procurement software market will grow from USD 9.81 billion in 2025 to USD 10.74 billion in 2026 and USD 17.11 billion by 2031, a CAGR of 9.76% from 2026 onward. Gartner predicts 40% of enterprise applications will include task-specific AI agents directly embedded into operational workflows by the end of 2026, up from less than 5% in 2025.

If your B2B SaaS platform touches finance, ERP, AP automation, supplier risk, or supply chain data, an LLM integration is no longer a roadmap stretch goal. It is a baseline expectation: your enterprise customers will soon expect AI agents to read and write directly to their Coupa instance.

Why Coupa API Integrations Are Notoriously Difficult

Before you write a single line of MCP server code, your team must understand the specific constraints of the Coupa Core REST API. Coupa is designed to handle the financial operations of Fortune 500 companies. It is an ERP-adjacent platform, not a modern, developer-friendly API you can wire up over a weekend.

If you expose Coupa directly to an AI agent, the agent will hallucinate, time out, or crash due to context window exhaustion. Here are the specific pain points your team will face.

1. XML-by-Default Responses and Massive Payloads

Coupa's API returns deeply nested, massive data objects by default. If you query an invoice, Coupa will return the invoice, the associated purchase order, the full supplier details, the user details, and the complete approval chain.

Coupa's official API documentation explicitly warns developers about this behavior, stating that returning full objects for associations causes performance degradation and unnecessary consumption of resources for customers that do not need the extraneous data.

The fix is the return_object parameter. On create and update, supported values are: none, limited, and shallow. On query calls, supported values are: limited and shallow.

If you use return_object=limited, Coupa strips the response down to just the record ID. But here is the problem for AI agents: an LLM cannot reason about a response that contains only {"id": 1}. You need shallow to get useful fields—but shallow still returns nested association objects that can balloon to thousands of tokens per record.

This forces you to build a response-filtering layer between Coupa and the LLM. Without it, a simple "list all purchase orders" call fills the entire context window with XML or JSON bloat the model does not need.

2. The Strict 50-Record Pagination Ceiling

LLMs are notoriously bad at tracking pagination state, and Coupa makes them do a lot of it. Coupa returns 50 records per call by default, and that default is effectively a hard ceiling: you can only extract 50 records at a time.

Coupa uses offset-based pagination, which means your MCP server must track offsets, handle the case where Coupa returns zero results to signal the end of data, and somehow communicate pagination state back to the LLM across multiple tool calls.

For AI agents, this is especially painful. An LLM that needs to find a specific invoice across 10,000 records may need as many as 200 sequential API calls (10,000 records at 50 per page), each returning a page that consumes context window tokens. Your MCP tool schemas need explicit next_cursor or offset parameters with clear instructions that tell the model exactly how to paginate. Otherwise the agent will silently drop data or hallucinate completion.
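The offset walk can be sketched as below. This is a minimal sketch under stated assumptions: `fetch_page` is a hypothetical callable standing in for the real Coupa request, and an empty page is treated as the end-of-data signal, as described above. For 10,000 records the loop makes 200 data-bearing calls plus one final empty call.

```python
from typing import Callable, Iterator

PAGE_SIZE = 50  # Coupa's per-call ceiling, as described above


def iter_records(fetch_page: Callable[[int], list],
                 max_pages: int = 1000) -> Iterator[dict]:
    """Yield records page by page until the upstream returns an empty page.

    fetch_page(offset) is a hypothetical function that performs one
    Coupa request and returns the parsed list of records for that offset.
    """
    offset = 0
    for _ in range(max_pages):  # hard stop so a misbehaving upstream cannot loop forever
        page = fetch_page(offset)
        if not page:  # zero results signals the end of the data
            return
        yield from page
        offset += PAGE_SIZE
```

The `max_pages` guard is a design choice, not a Coupa feature: it bounds the cost of a runaway agent request.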

3. OAuth 2.0 Client Credentials with Instance-Specific URLs

Coupa uses OAuth 2.0. Each Coupa customer has their own subdomain ({customer_name}.coupahost.com), so your integration must manage per-tenant OAuth tokens, each with its own token endpoint (POST https://{your-instance}.coupahost.com/oauth2/token), client ID, and secret.

Tokens expire, and you will need to handle refresh logic gracefully. An expired token mid-agent-workflow is a terrible user experience. Your MCP server must not require the LLM to manage these access tokens.

4. Undocumented Rate Limits and 429 Errors

Coupa does not publish unified, account-wide rate limit documentation for its Core API because limits are often negotiated per enterprise contract. Your team will discover limits empirically through HTTP 429 (Too Many Requests) responses.

For an AI agent that can fire dozens of API calls per minute to perform an audit, this is a real operational risk. When Coupa returns a 429, your MCP server should not silently retry in an infinite loop. Instead, pass the rate limit status back to the caller cleanly. Normalize the upstream rate limit data into standardized headers (ratelimit-limit, ratelimit-remaining, ratelimit-reset) per the IETF draft specification, and force the agentic client or orchestrator to handle the backoff.
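One way to pass a 429 back cleanly is sketched below. The assumptions are loud ones: Coupa's actual rate-limit headers are not documented, so this sketch only looks for a standard `Retry-After` header in delta-seconds form and falls back to a hypothetical default. `ratelimit-limit` is omitted because the upstream limit is unknown.

```python
from typing import Optional

DEFAULT_RESET_SECONDS = 60  # assumption: fallback when the upstream gives no hint


def normalize_rate_limit(status: int, upstream_headers: dict) -> Optional[dict]:
    """Return a normalized rate-limit result for a 429, or None for other statuses."""
    if status != 429:
        return None
    lowered = {k.lower(): v for k, v in upstream_headers.items()}
    retry_after = lowered.get("retry-after", "")
    # Only the delta-seconds form is handled; an HTTP-date falls back to the default.
    reset = int(retry_after) if retry_after.isdigit() else DEFAULT_RESET_SECONDS
    return {
        "status": 429,
        "error": "rate_limited",
        "headers": {
            "ratelimit-remaining": "0",
            "ratelimit-reset": str(reset),  # seconds until the caller should retry
        },
    }
```

The function never sleeps or retries. Backoff stays with the orchestrator, which keeps the server stateless.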

5. Why Coupa Is the Representative Example for Enterprise MCP

Coupa is not just one hard API - it is a compressed version of every challenge you will encounter across enterprise procurement and ERP systems. Per-tenant OAuth with instance-specific URLs is the same pattern you face with NetSuite, SAP Ariba, and Oracle Fusion. The 50-record pagination ceiling mirrors similar restrictions in Workday and SAP. Undocumented, contract-negotiated rate limits are standard across Oracle, SAP, and most legacy ERPs. And the XML-by-default, deeply nested response format is common to SOAP-era enterprise platforms that bolted on REST APIs after the fact.

If your MCP server architecture handles Coupa cleanly - per-tenant auth, strict pagination, payload trimming, opaque rate limits - it will handle virtually any enterprise procurement or ERP API with minimal adaptation. That is why this guide uses Coupa as the design target: solve it once, and the pattern transfers to the rest of your enterprise integration portfolio.

What Is an MCP Server and Why Does Coupa Need One?

The Model Context Protocol (MCP) is an open standard and open-source framework introduced by Anthropic in November 2024 to solve the N×M integration problem for AI agents. It standardizes the way artificial intelligence systems like LLMs integrate and share data with external tools, systems, and data sources.

By March 2026, all major providers were on board, and Anthropic reported over 10,000 active public MCP servers and 97 million monthly SDK downloads across Python and TypeScript.

Without MCP, connecting an AI agent to Coupa means building custom function-calling definitions for OpenAI, separate tool schemas for Claude, and yet another integration for Gemini. Instead of building custom point-to-point connectors, you build a single MCP server.

The server exposes a standardized JSON-RPC 2.0 interface. Any MCP-compatible client can connect to this server, request a list of available tools, and invoke them. For Coupa, the MCP server acts as an intelligent proxy. It abstracts the OAuth 2.0 Client Credentials flow, normalizes Coupa's eccentric payload structures into clean JSON schemas, and provides the LLM with highly specific, constrained tools (e.g., list_coupa_invoices instead of a generic GET /api/invoices).

How to Create a Coupa-Specific MCP Integration

To build a production-grade Coupa MCP server, you must implement a strict architectural boundary between the LLM protocol layer and the Coupa execution layer. Here is the step-by-step architectural guide.

Step 1: Map Coupa Resources to MCP Tool Definitions

Every MCP server exposes a set of tools—callable operations that an LLM can discover and invoke. Coupa has hundreds of resources. Hardcoding them creates an impossible maintenance burden when API versions change.

You need to decide which API resources to expose and what operations are allowed on each. A practical starting set for procurement workflows:

| Coupa Resource | MCP Tools | Risk Level |
|---|---|---|
| /api/purchase_orders | list, get | Read-only, low risk |
| /api/invoices | list, get, create | Write operations need approval gates |
| /api/suppliers | list, get | Read-only, low risk |
| /api/expense_reports | list, get | Read-only, low risk |
| /api/contracts | list, get | Read-only, sensitive data |
| /api/requisitions | list, get, create | Write operations need approval gates |

Ideally, your MCP server should dynamically generate tool definitions from API documentation or OpenAPI specs. For every available Coupa method, generate a descriptive snake_case tool name and a strict JSON schema for the inputs.

Security Boundary: Never allow the LLM to dictate the base URL or raw HTTP method. The LLM should only pass arguments mapped to your predefined JSON schema. Furthermore, do not expose update or delete operations on financial records to AI agents without explicit human-in-the-loop approval. A misconfigured agent that modifies purchase orders can cause real financial damage.
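The security boundary can be sketched as a generated registry: tool names map to fixed HTTP methods and path templates, so the model only ever supplies a tool name and schema-checked arguments. This is an illustrative sketch, not a definitive implementation. The resource list follows the table above, while the `{op}_coupa_{resource}` naming pattern and the `requires_approval` flag are assumptions.

```python
RESOURCES = {
    "purchase_orders": ["list", "get"],
    "invoices": ["list", "get", "create"],
    "suppliers": ["list", "get"],
}

# Fixed mapping from operation to HTTP method and path template.
OPERATIONS = {
    "list": ("GET", "/api/{resource}"),
    "get": ("GET", "/api/{resource}/{id}"),
    "create": ("POST", "/api/{resource}"),
}


def build_registry(resources: dict) -> dict:
    """Generate one tool entry per (resource, operation) pair."""
    registry = {}
    for resource, ops in resources.items():
        for op in ops:
            method, template = OPERATIONS[op]
            registry[f"{op}_coupa_{resource}"] = {
                "http_method": method,
                "path_template": template.replace("{resource}", resource),
                "requires_approval": op not in ("list", "get"),  # gate writes
            }
    return registry


def resolve(registry: dict, tool_name: str, arguments: dict) -> tuple:
    """Return (method, path) for a known tool; unknown tools raise KeyError."""
    tool = registry[tool_name]
    path = tool["path_template"]
    if "{id}" in path:
        # int() rejects anything that is not a plain record ID.
        path = path.replace("{id}", str(int(arguments["id"])))
    return tool["http_method"], path
```

Because the base URL and HTTP method never come from the model, a prompt-injected argument can at worst select a different allowed tool.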

Step 2: Build JSON Schemas That LLMs Can Actually Use

Each MCP tool needs a JSON Schema that tells the LLM what parameters are available. This is where most custom MCP implementations fail—the schema is either too vague for the model to use correctly, or too complex for it to reason about.

Here is what a well-designed tool schema looks like for listing Coupa invoices, combining strict typing with semantic prompt instructions:

{

"name": "list_coupa_invoices",

"description": "Fetch a list of invoices from Coupa. Returns paginated results. Use the offset parameter to retrieve subsequent pages of 50 records each.",

"inputSchema": {

"type": "object",

"properties": {

"offset": {

"type": "integer",

"description": "The starting record offset for pagination. First page is 0. Each page returns up to 50 records. To fetch the next page, add 50 to the previous offset. Do not skip, guess, or otherwise modify offset values."

},

"status": {

"type": "string",

"description": "Filter by invoice status. Values: draft, pending_approval, approved, voided."

},

"updated_after": {

"type": "string",

"description": "ISO 8601 datetime. Only return invoices updated after this date."

},

"return_object": {

"type": "string",

"enum": ["shallow", "limited"],

"description": "Response detail level. Use 'shallow' for useful field data, 'limited' for IDs only."

}

}

}

}

Notice the explicit instruction on the offset property. LLMs will frequently attempt to parse, decode, or guess pagination values. You must explicitly prompt the model to handle the cursor or offset exactly as instructed.
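A schema only helps if the server enforces it. The sketch below checks incoming arguments against a cut-down version of the schema above before anything reaches Coupa. It is a hand-rolled validator under stated assumptions: it supports only the `integer` and `string` types and `enum`, and the multiple-of-50 rule for `offset` is an added constraint rather than something Coupa requires.

```python
INVOICE_LIST_SCHEMA = {
    "type": "object",
    "properties": {
        "offset": {"type": "integer"},
        "status": {"type": "string"},
        "return_object": {"type": "string", "enum": ["shallow", "limited"]},
    },
}

JSON_TYPES = {"integer": int, "string": str}


def validate_arguments(schema: dict, arguments: dict) -> list:
    """Return a list of human-readable errors; an empty list means valid."""
    errors = []
    properties = schema.get("properties", {})
    for name, value in arguments.items():
        spec = properties.get(name)
        if spec is None:
            errors.append(f"unknown argument: {name}")
            continue
        expected = JSON_TYPES[spec["type"]]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            errors.append(f"{name}: expected {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{name}: must be one of {spec['enum']}")
        elif name == "offset" and (value < 0 or value % 50 != 0):
            errors.append("offset: must be a non-negative multiple of 50")
    return errors
```

Returning the error strings to the model as a tool error gives it a chance to correct the call instead of guessing.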

Step 3: Handle Authentication and Token Lifecycle

Your MCP server URL itself should act as the authentication boundary. Generate a unique cryptographic token for each connected Coupa account (e.g., https://your-api.com/mcp/abc-123). When the LLM calls this URL, your infrastructure should look up the associated Coupa OAuth credentials, refresh them if necessary, and execute the request.

sequenceDiagram

participant Agent as AI Agent (MCP Client)

participant MCP as MCP Server

participant Auth as Token Manager

participant Coupa as Coupa API

Agent->>MCP: tools/call (list_coupa_invoices)

MCP->>Auth: Get valid token for tenant

alt Token expired or missing

Auth->>Coupa: POST /oauth2/token<br>(client_credentials)

Coupa-->>Auth: access_token + expires_in

Auth->>Auth: Cache token with TTL

end

Auth-->>MCP: Valid access_token

MCP->>Coupa: GET /api/invoices?return_object=shallow<br>Authorization: Bearer {token}

Coupa-->>MCP: Invoice data (JSON/XML)

MCP->>MCP: Filter response, trim payload for LLM

MCP-->>Agent: MCP result (trimmed JSON)

The token manager should refresh tokens proactively, slightly before expiry, rather than waiting for a 401 response mid-workflow. A failed auth in the middle of a multi-step agent task forces the LLM to retry, wasting tokens and potentially confusing the conversation state.
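A per-tenant token cache with proactive refresh might look like the sketch below. The names are illustrative: `fetch_token` is a hypothetical callable that would POST `grant_type=client_credentials` to the tenant's `/oauth2/token` endpoint and return `(access_token, expires_in)`, and the 300-second refresh margin is an assumed default.

```python
import time
from typing import Callable, Dict, Tuple


class TokenManager:
    """Caches one access token per tenant and refreshes it before expiry."""

    def __init__(self,
                 fetch_token: Callable[[str], Tuple[str, int]],
                 refresh_margin: int = 300,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch_token      # hypothetical: performs the OAuth request
        self._margin = refresh_margin  # seconds before expiry at which to refresh
        self._clock = clock            # injectable so tests can control time
        self._cache: Dict[str, Tuple[str, float]] = {}

    def get(self, tenant: str) -> str:
        now = self._clock()
        cached = self._cache.get(tenant)
        if cached and now < cached[1] - self._margin:
            return cached[0]  # still comfortably valid
        token, expires_in = self._fetch(tenant)
        self._cache[tenant] = (token, now + expires_in)
        return token
```

The MCP layer calls `get(tenant)` on every tool call and never hands the token to the model.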

For enterprise security, implement a Time-to-Live (TTL) on these MCP server URLs. If a contractor or temporary AI workflow needs access to Coupa data, generate an MCP server URL that automatically expires and deletes its underlying credentials after 24 hours.
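Issuing an expiring MCP URL can be sketched with the standard library alone. Storing only a hash of the token is an added design choice, and the function names are hypothetical. The base URL reuses the placeholder from the example above.

```python
import hashlib
import secrets
from datetime import datetime, timedelta, timezone


def issue_mcp_url(base_url: str, ttl_hours: int = 24, now=None):
    """Return (url, record). Only the record, never the raw token, is stored."""
    now = now or datetime.now(timezone.utc)
    token = secrets.token_urlsafe(32)  # unguessable, URL-safe
    record = {
        "token_hash": hashlib.sha256(token.encode()).hexdigest(),
        "expires_at": now + timedelta(hours=ttl_hours),
    }
    return f"{base_url}/mcp/{token}", record


def is_active(record: dict, now=None) -> bool:
    """True while the URL is inside its time-to-live window."""
    now = now or datetime.now(timezone.utc)
    return now < record["expires_at"]
```

A scheduled job that deletes expired records, along with the credentials they point to, completes the self-destruct behavior described above.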

Step 4: Build a Response Filtering Layer

This is the most overlooked part of Coupa MCP integration. Raw Coupa responses, even with return_object=shallow, contain deeply nested association objects that are irrelevant to most agent queries. A single invoice response might include full supplier objects, full account objects, and full approval chain objects—all nested inside each line item.

Your MCP server needs a transformation layer that:

- Strips nested associations down to reference IDs (e.g., {"supplier": {"id": 123, "name": "Acme Corp"}} instead of the full supplier object).

- Flattens key fields to the top level so the LLM can reference them directly.

- Enforces a maximum response size: if a list response exceeds a token budget, truncate and include a clear "has_more": true indicator.

Without this layer, you will burn through context window budget on data the agent never references, and your tool calls will be slower and more expensive than they need to be.
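Two of those steps, reducing associations and capping list size, can be sketched as follows. It is a rough illustration under stated assumptions: the token budget is approximated by JSON character count, the kept association fields (`id`, `name`) are a guess at what most agent queries need, and flattening is left out for brevity.

```python
import json


def trim_record(record: dict, keep: tuple = ("id", "name")) -> dict:
    """Reduce nested association objects to a few reference fields."""
    trimmed = {}
    for key, value in record.items():
        if isinstance(value, dict):
            trimmed[key] = {k: value[k] for k in keep if k in value}
        elif isinstance(value, list):
            # e.g. line items: trim each element's own associations
            trimmed[key] = [trim_record(v, keep) if isinstance(v, dict) else v
                            for v in value]
        else:
            trimmed[key] = value
    return trimmed


def trim_list(records: list, max_chars: int = 8000) -> dict:
    """Trim each record and stop once the serialized size exceeds the budget."""
    out, used = [], 0
    for record in records:
        slim = trim_record(record)
        size = len(json.dumps(slim))
        if out and used + size > max_chars:
            return {"result": out, "has_more": True}  # truncated by budget
        out.append(slim)
        used += size
    return {"result": out, "has_more": False}
```

Here `has_more` only reports truncation by the budget. A real server would also set it when Coupa has further pages.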

Step 5: Protocol Handling and Tool Execution

When the LLM client sends a tools/call request, your MCP router must intercept the JSON-RPC payload, extract the arguments, and map them to the correct Coupa HTTP request. Your proxy layer must construct the actual Coupa request, forcefully injecting the OAuth token and the mandatory return_object parameter to protect the downstream systems.
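The translation step can be sketched as a pure function that turns a `tools/call` payload into a request description. The tool table and the filter allowlist are assumptions for illustration. The key point is that `return_object` and the bearer token are set by the server, whatever the model sent.

```python
from urllib.parse import urlencode

TOOL_PATHS = {"list_coupa_invoices": "/api/invoices"}  # illustrative subset
ALLOWED_FILTERS = {"offset", "status"}                 # arguments forwarded as-is


def build_coupa_request(rpc: dict, instance_url: str, access_token: str) -> dict:
    """Map a JSON-RPC tools/call payload to a Coupa HTTP request description."""
    if rpc.get("method") != "tools/call":
        raise ValueError("not a tools/call request")
    params = rpc["params"]
    path = TOOL_PATHS[params["name"]]  # unknown tool names raise KeyError
    arguments = params.get("arguments", {})
    query = {k: v for k, v in arguments.items() if k in ALLOWED_FILTERS}
    query["return_object"] = "shallow"  # injected server-side, never model-controlled
    return {
        "method": "GET",
        "url": f"{instance_url}{path}?{urlencode(query)}",
        "headers": {
            "Authorization": f"Bearer {access_token}",
            "Accept": "application/json",  # ask for JSON instead of the XML default
        },
    }
```

Returning a description rather than sending the request keeps this layer easy to test. A separate HTTP client executes it.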

Connecting Your Coupa MCP Server to Claude and ChatGPT

Once you have a working MCP server URL—whether self-built or managed—connecting it to the major AI clients is straightforward.

Claude (Desktop or Web): Go to Settings > Connectors > Add custom connector, paste your MCP server URL, and Claude discovers tools automatically via the MCP protocol. Custom connectors via remote MCP are available on Free, Pro, Max, Team, and Enterprise plans.

ChatGPT: Go to Settings > Apps > Advanced settings, enable Developer mode, add a new MCP server with your URL and a label. Developer mode is available on Pro, Plus, Business, Enterprise, and Education accounts.

Both clients will call tools/list to discover your Coupa tools, then display them as available actions in the conversation. The LLM handles tool selection and parameter construction based on the JSON Schemas you defined.

Coupa MCP Server in Practice: Scoping, Auth, and Execution

The architectural steps above describe what to build. This section shows what it looks like when running - concrete API calls, scoping decisions, and operational behavior you will encounter in production.

Scoping a Read-Only Procurement MCP Server

A production Coupa MCP server should never expose all resources with all methods. Procurement data is financially sensitive - a misconfigured write operation on purchase orders or invoices can cause real damage. The safest starting point is a read-only server scoped to procurement-specific resources.

When creating the MCP server, restrict it to read methods (which match get and list operations) and tag it to procurement resources only:

curl -X POST https://api.truto.one/integrated-account/{coupa_account_id}/mcp \

-H "Authorization: Bearer {truto_api_token}" \

-H "Content-Type: application/json" \

-d '{

"name": "Coupa Procurement - Read Only",

"config": {

"methods": ["read"],

"tags": ["procurement"]

},

"expires_at": "2026-05-18T00:00:00Z"

}'

The response includes the MCP server URL - a single cryptographic URL that encodes the account scope, method restrictions, and expiration:

{

"id": "mcp-abc-123",

"name": "Coupa Procurement - Read Only",

"config": {

"methods": ["read"],

"tags": ["procurement"]

},

"expires_at": "2026-05-18T00:00:00Z",

"url": "https://api.truto.one/mcp/a1b2c3d4e5f6..."

}

That URL is the only thing an MCP client needs to connect. The server resolves the associated Coupa OAuth credentials, enforces the read-only restriction, and handles token refresh automatically. Setting expires_at ensures the server self-destructs after a defined window - useful for contractor access, temporary audit workflows, or time-boxed AI experiments.

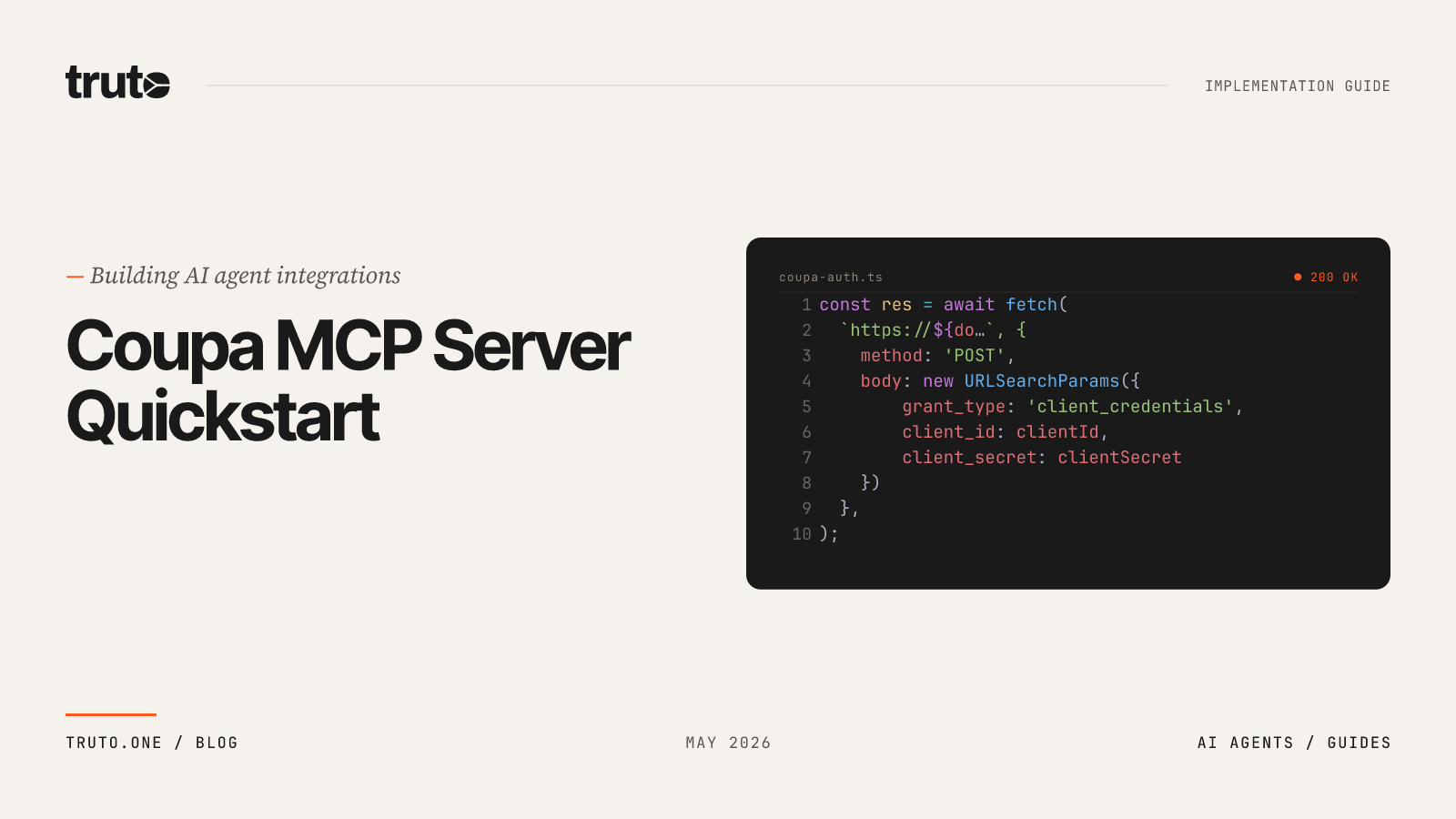

Coupa OAuth 2.0 Flow Inside the MCP Server

Coupa uses OAuth 2.0 with OpenID Connect (OIDC) for API authentication. API keys are deprecated and no longer issued. Each Coupa tenant has a unique token endpoint at https://{tenant}.coupahost.com/oauth2/token, and scopes follow Coupa's service.object.right format (e.g., core.purchase_order.read). Access tokens expire after 24 hours (86,399 seconds), and Coupa recommends renewing every 20 hours.

When the MCP server receives a tools/call request, the authentication flow is entirely invisible to the LLM:

- The platform looks up the connected Coupa account's client credentials (client ID, client secret, instance URL, scopes).

- If the access token is still valid, it proceeds to execute the Coupa API call.

- If the token is nearing expiry, the platform proactively refreshes it - posting grant_type=client_credentials to the tenant's /oauth2/token endpoint for a fresh access token before the old one expires.

- If a refresh fails (revoked credentials, rotated client secret), the account is flagged as needs_reauth, a webhook event (integrated_account:authentication_error) fires, and subsequent tool calls return a clear error until someone re-establishes credentials.

The LLM never sees OAuth tokens, client secrets, or instance URLs. From the agent's perspective, it calls list_all_coupa_purchase_orders and gets a result.

Example: Listing Purchase Orders via tools/call

Here is a complete JSON-RPC exchange showing an AI agent listing purchase orders through the MCP server. The MCP client sends:

{

"jsonrpc": "2.0",

"id": 1,

"method": "tools/call",

"params": {

"name": "list_all_coupa_purchase_orders",

"arguments": {

"status": "pending_receipt",

"limit": "50",

"updated_after": "2026-04-01T00:00:00Z"

}

}

}

The MCP server translates this into a Coupa API request - injecting the OAuth token, adding return_object=shallow to control payload size, and mapping limit to Coupa's offset-based pagination. The response:

{

"jsonrpc": "2.0",

"id": 1,

"result": {

"content": [{

"type": "text",

"text": "{\"result\":[{\"id\":\"4501\",\"po_number\":\"PO-2026-0042\",\"status\":\"pending_receipt\",\"total\":\"24500.00\",\"currency\":{\"code\":\"USD\"},\"supplier\":{\"id\":\"891\",\"name\":\"Acme Industrial\"},\"created_at\":\"2026-04-03T14:22:00Z\"}],\"next_cursor\":\"eyJvZmZzZXQiOjUwfQ==\",\"request_id\":\"req-7f3a2b\"}"

}]

}

}

Note the next_cursor value. This is an opaque pagination token. To fetch the next page, the LLM sends another tools/call with "next_cursor": "eyJvZmZzZXQiOjUwfQ==" in the arguments. The tool schema's description for next_cursor explicitly tells the model: "Always send back exactly the cursor value you received without decoding, modifying, or parsing it." This instruction is critical - LLMs will frequently attempt to base64-decode or numerically increment pagination tokens if not told otherwise.
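On the server side, an opaque cursor can be as simple as base64-wrapped JSON. The sketch below is one possible encoding, not necessarily the platform's: it happens to reproduce the example value above, which base64-decodes to `{"offset":50}`. The server validates whatever comes back, since the model may tamper with it despite instructions.

```python
import base64
import json

PAGE_SIZE = 50


def encode_cursor(offset: int) -> str:
    """Wrap the next offset in an opaque, URL-and-JSON-safe token."""
    raw = json.dumps({"offset": offset}, separators=(",", ":")).encode()
    return base64.b64encode(raw).decode()


def decode_cursor(cursor: str) -> int:
    """Return the offset, or raise ValueError if the cursor was altered."""
    try:
        payload = json.loads(base64.b64decode(cursor, validate=True))
        offset = payload["offset"]
    except (ValueError, KeyError, TypeError) as exc:
        raise ValueError("invalid cursor") from exc
    if isinstance(offset, bool) or not isinstance(offset, int):
        raise ValueError("invalid cursor")
    if offset < 0 or offset % PAGE_SIZE != 0:
        raise ValueError("invalid cursor")
    return offset
```

A stricter design would sign the cursor (for example with an HMAC) so that a well-formed but forged offset is also rejected.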

If the tool call fails (expired token, Coupa error), the result object sets isError: true and carries a descriptive error message in its content, allowing the agent to decide whether to retry or escalate.
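The success and failure shapes can be produced by one small wrapper, sketched below. `run_tool` and its `call` argument are hypothetical names. The result layout (a `content` list plus an `isError` flag on the result object) follows the MCP tool-result format.

```python
import json
from typing import Callable


def run_tool(request_id, call: Callable[[], dict]) -> dict:
    """Execute a tool and wrap its outcome in a JSON-RPC tool result."""
    try:
        text, is_error = json.dumps(call()), False
    except Exception as exc:  # sketch only; real code would catch narrower errors
        text, is_error = f"Tool call failed: {exc}", True
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {
            "content": [{"type": "text", "text": text}],
            "isError": is_error,
        },
    }
```

Failures are reported inside a normal result rather than as a JSON-RPC protocol error, so the model sees the message and can react to it.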

Method Filtering and Tag-Based Scoping for Procurement

Method filtering and tag filtering work together to create precise access boundaries for your Coupa MCP server.

Method filtering restricts which operation types the server exposes:

| Filter Value | Operations Exposed |
|---|---|
| "read" | get, list |
| "write" | create, update, delete |
| "list" | Only list (exact match) |
| "custom" | Non-CRUD operations (e.g., download, approve) |

Categories can be combined. Setting "methods": ["read", "custom"] exposes get, list, and any custom methods - but blocks create, update, and delete.

Tag filtering restricts which resource groups appear. A Coupa integration might tag resources like this:

{

"tool_tags": {

"purchase_orders": ["procurement", "spend"],

"invoices": ["procurement", "ap"],

"suppliers": ["procurement", "vendor-management"],

"expense_reports": ["expenses"],

"contracts": ["procurement", "legal"],

"requisitions": ["procurement"]

}

}

Creating a server with "tags": ["procurement"] exposes tools for purchase orders, invoices, suppliers, contracts, and requisitions - but excludes expense reports (tagged only as "expenses"). Combining "methods": ["read"] with "tags": ["procurement"] gives you a server that can only list and retrieve procurement data. No writes, no off-category resources.

The platform validates at creation time that the intersection of your method and tag filters produces at least one tool. You cannot accidentally create an MCP server with zero available operations.
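If you are building this yourself, the same filtering rules can be sketched in a few lines. This illustrates the behavior described above and is not the platform's actual code. The tool dictionaries and function names are assumptions.

```python
METHOD_GROUPS = {
    "read": {"get", "list"},
    "write": {"create", "update", "delete"},
}
CRUD = METHOD_GROUPS["read"] | METHOD_GROUPS["write"]


def method_allowed(operation: str, filters: list) -> bool:
    """Apply the method filter table: groups, 'custom', or an exact match."""
    for f in filters:
        if f in METHOD_GROUPS and operation in METHOD_GROUPS[f]:
            return True
        if f == "custom" and operation not in CRUD:
            return True
        if f == operation:  # exact match, e.g. "list"
            return True
    return False


def select_tools(tools: list, tool_tags: dict, methods: list, tags: list) -> list:
    """Return tools passing both filters; refuse to create an empty server."""
    wanted = set(tags)
    selected = [
        t for t in tools
        if method_allowed(t["operation"], methods)
        and wanted & set(tool_tags.get(t["resource"], []))
    ]
    if not selected:
        raise ValueError("method and tag filters match no tools")
    return selected
```

Raising at creation time mirrors the validation described above: an empty tool list is treated as a configuration error rather than a valid server.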

Dual Authorization: MCP URL Token + Truto API Token

By default, anyone with the MCP server URL can call its tools. For enterprise deployments where the URL might appear in logs, config files, or CI pipelines, add a second authentication layer:

curl -X POST https://api.truto.one/integrated-account/{coupa_account_id}/mcp \

-H "Authorization: Bearer {truto_api_token}" \

-H "Content-Type: application/json" \

-d '{

"name": "Coupa Procurement - Secured",

"config": {

"methods": ["read"],

"tags": ["procurement"],

"require_api_token_auth": true

}

}'

With require_api_token_auth enabled, every MCP request must include a valid Truto API token in the Authorization header. Two conditions must both be true for a tool call to succeed:

- The MCP URL token must be valid and unexpired.

- The caller must present a valid Truto API token proving they are an authenticated team member.

A tools/list request to this secured server looks like:

curl -X POST https://api.truto.one/mcp/a1b2c3d4e5f6... \

-H "Authorization: Bearer {truto_api_token}" \

-H "Content-Type: application/json" \

-d '{

"jsonrpc": "2.0",

"id": 1,

"method": "tools/list"

}'

Possession of the URL alone is no longer sufficient. This is the recommended configuration for any Coupa MCP server that touches production procurement data.

Operational Notes: Retries and Rate-Limit Behavior

Rate limits: When Coupa returns an HTTP 429, the MCP server does not silently retry in a loop. It normalizes the upstream rate limit data into standardized response headers per the IETF draft specification and returns the rate-limited status to the caller. The orchestrating agent or framework is responsible for implementing backoff - this keeps the MCP server stateless and prevents runaway retry storms against Coupa's undocumented, per-contract limits.

Token refresh failures: If a Coupa OAuth token refresh fails with a retryable server error (HTTP 500+), the platform schedules a retry after a configurable interval. If the failure is non-retryable (HTTP 401, invalid_grant - meaning the client secret was rotated or credentials were revoked), no retry is scheduled. The account is marked as needs_reauth, a webhook event fires, and all subsequent tool calls return a clear error until credentials are re-established.

Pagination safety: Coupa's 50-record ceiling means agents will make many sequential calls for large datasets. Each page returned includes next_cursor and request_id fields. If a page request fails mid-sequence, the agent can resume from the last successful cursor without re-fetching previous pages.

Coupa's 24-hour token window: Coupa access tokens expire after 24 hours (86,399 seconds). Coupa themselves recommend renewing every 20 hours. The platform handles this by scheduling proactive token refresh well before expiry - randomized within a window before the token expires to spread load across accounts. This means a long-running agent workflow that spans hours will never encounter an expired Coupa token mid-execution.
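The randomized refresh window can be sketched as a single delay calculation. The 20-hour renewal target comes from the recommendation above. The 30-minute jitter window and the 90% safety cap for short-lived tokens are assumptions.

```python
import random
from typing import Callable


def next_refresh_delay(expires_in: int,
                       renew_after: int = 20 * 3600,
                       window: int = 30 * 60,
                       rng: Callable[[], float] = random.random) -> float:
    """Seconds from now at which to refresh, jittered to spread load.

    The delay falls in [latest - window, latest), where latest is the
    20-hour target or 90% of the token lifetime, whichever is sooner.
    """
    latest = min(renew_after, 0.9 * expires_in)  # never plan too close to expiry
    earliest = max(0, latest - window)
    return earliest + rng() * (latest - earliest)
```

For a standard 86,399-second token this schedules the refresh between 19.5 and 20 hours after issuance, so accounts that connected at the same moment do not all hit Coupa's token endpoint together.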

Build vs. Buy: Custom MCP Servers vs. Managed Platforms

Building a custom Coupa MCP server from scratch is technically straightforward for a senior backend engineer, but maintaining it is a multi-month nightmare. Here is an honest breakdown of the engineering work involved:

| Component | Custom Build Effort | Managed Platform |
|---|---|---|
| OAuth 2.0 Client Credentials token lifecycle | 1-2 weeks + ongoing maintenance | Handled automatically |
| Tool schema definitions for 6+ resources | 2-3 weeks of schema design + testing | Auto-generated from API docs |
| Response filtering / payload trimming | 1-2 weeks | Built into proxy layer |
| Pagination state management | 1 week | Declarative config |
| Rate limit handling | 1 week | Normalized headers |
| MCP protocol compliance (JSON-RPC 2.0) | 1-2 weeks | Native implementation |
| Ongoing maintenance (Coupa releases 3x/year) | Continuous | Platform absorbs changes |
| Total initial effort | 8-12 weeks | Hours to days |

Coupa releases three major updates annually. Oracle Fusion updates every quarter, and Oracle EBS issues monthly patches. Each Coupa release can change API behavior, add new fields, or deprecate existing ones. If you built your MCP tool schemas by hand, every release is a potential breakage point.

If you build this in-house, your engineering team is now responsible for tracking Coupa's API deprecations, managing OAuth token refresh failures across hundreds of enterprise tenants, and scaling the infrastructure to handle LLM-driven traffic spikes.

Managed unified API platforms have completely abstracted this problem. Modern integration infrastructure allows you to connect a customer's Coupa instance and instantly generate a secure, fully compliant MCP server URL with zero custom code.

When evaluating a managed solution, prioritize platforms that offer:

- Zero Data Retention: The platform must act as a pure proxy. Enterprise security teams will immediately block your deal if your integration provider caches Coupa spend data at rest.

- Dynamic Tool Generation: Tools should be derived directly from the provider's documentation. A tool only appears if it has a documentation entry, acting as both a quality gate and a curation mechanism, ensuring the LLM always has the most accurate schema.

- Standardized Rate Limiting: The platform should normalize Coupa's opaque rate limits into standard IETF headers, allowing your agent to back off gracefully.

What Your Coupa MCP Architecture Should Look Like

Putting it all together, here is the target architecture for a production-ready deployment:

flowchart LR

subgraph AI Clients

A[Claude Desktop]

B[ChatGPT]

C[Custom Agent]

end

subgraph MCP Server

D[JSON-RPC 2.0<br>Endpoint]

E[Tool Registry<br>& Schema Engine]

F[Auth Manager<br>OAuth 2.0 CC]

G[Response Filter<br>& Payload Trimmer]

end

subgraph Coupa

H[Coupa Instance<br>tenant.coupahost.com]

end

A -->|MCP Protocol| D

B -->|MCP Protocol| D

C -->|MCP Protocol| D

D --> E

D --> F

D --> G

G --> H

F --> H

The MCP server sits as a thin, stateless proxy between any MCP client and Coupa. It handles authentication, tool discovery, response filtering, and protocol compliance. Zero procurement data is stored at rest: every request passes through and the response goes directly back to the caller.

Where to Go From Here

The engineering decisions that matter most for a Coupa MCP integration are not about the protocol itself—MCP is well-specified and well-documented. The hard parts are all Coupa-specific: managing bloated XML/JSON payloads, working around strict pagination limits, handling undocumented rate limits, and keeping up with three major releases per year.

If your team has the bandwidth for a multi-month integration project and wants full control over every layer, building from scratch is viable. But for most B2B SaaS teams—especially those who need Coupa alongside other procurement and ERP systems like NetSuite, SAP, or Oracle—a managed MCP platform that auto-generates tools and handles the API complexity is the faster path to production.

The 94% GenAI adoption rate among procurement executives tells you where the market is headed. The teams that ship working Coupa AI integrations this quarter will close the deals that their competitors are still scoping.

FAQ

- What are the biggest challenges with the Coupa API for AI agents?

- Coupa returns massive payloads by default (full nested association objects), enforces a strict 50-record pagination ceiling, uses XML as the default response format, and publishes no official rate limit documentation. These create specific context window exhaustion and hallucination problems for LLMs.

- How do you prevent Coupa API payloads from exceeding LLM context windows?

- Always append the return_object=limited or return_object=shallow parameter to Coupa API requests to strip deeply nested association data. Additionally, your MCP server needs a response filtering layer to flatten key fields and truncate massive lists before passing them to the LLM.

- What is the best way to handle Coupa API pagination with LLMs?

- Coupa enforces a hard 50-record limit and uses offset-based pagination. You must explicitly document the offset parameter in your MCP tool's JSON Schema, instructing the LLM to pass the cursor state back unmodified between sequential tool calls.

- How long does it take to build a custom Coupa MCP server?

- A custom Coupa MCP server typically requires 8-12 weeks of initial engineering effort, covering OAuth token management, tool schema design, response filtering, pagination handling, and MCP protocol compliance. Ongoing maintenance is required as Coupa releases three major updates per year.